AI & The Cognitive Barbell

The AI Orchestrator thesis says the human’s job is orchestration. True, but incomplete. I need to tell you another part of the story.

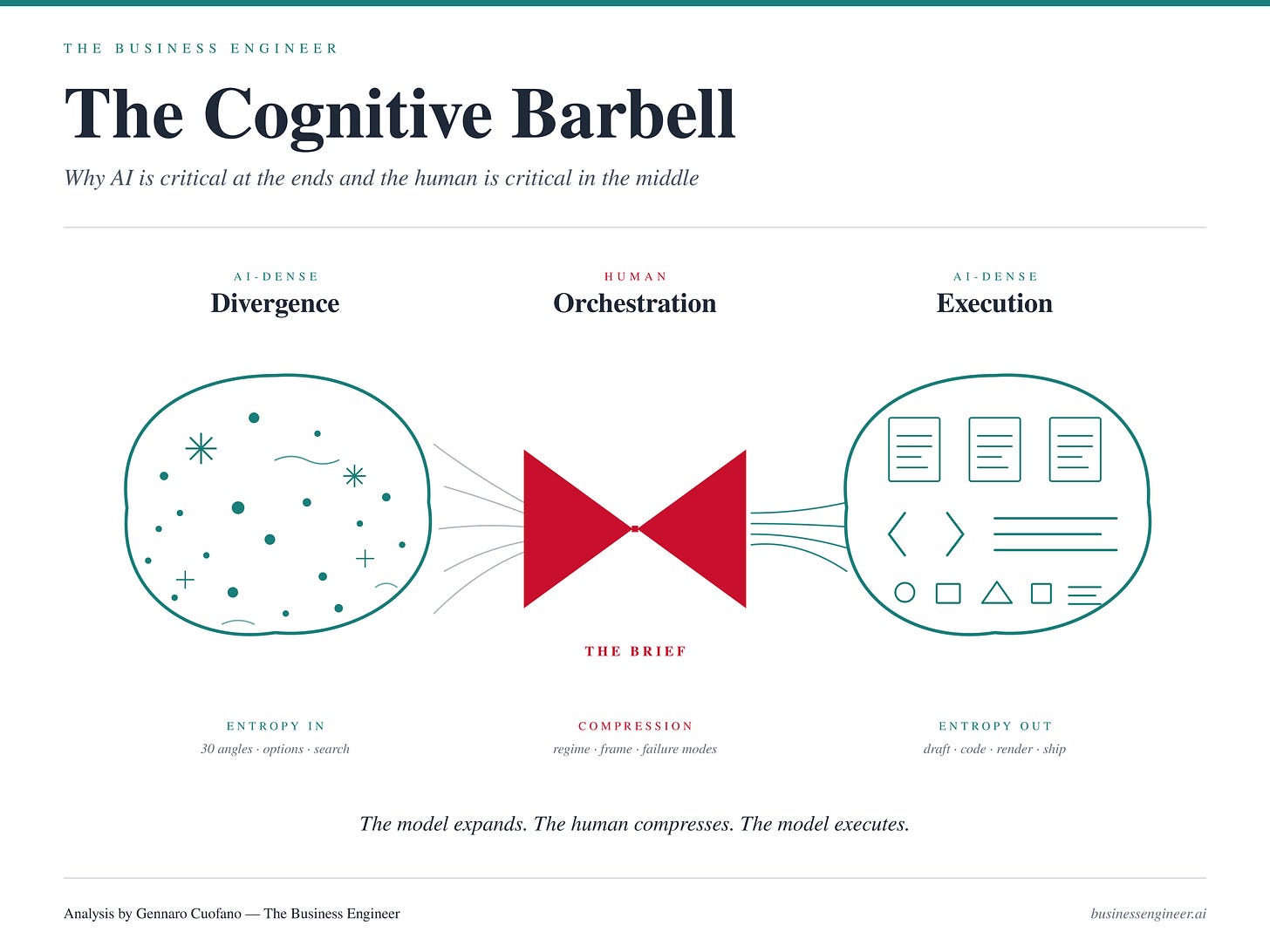

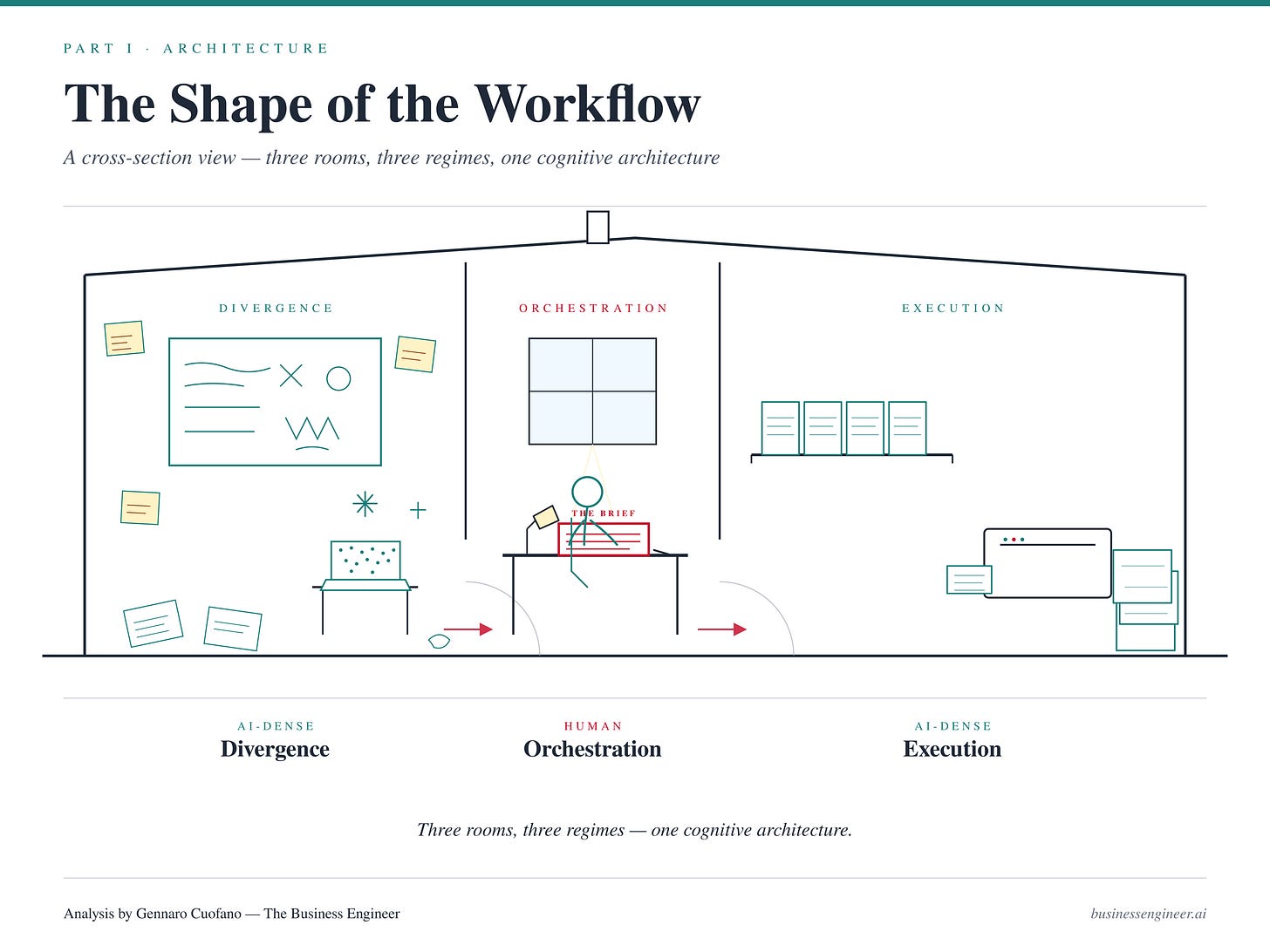

The missing move is locating where in the workflow AI is critical and where the human is. The shape that falls out is a barbell: AI-dense at the two ends, human-dense in the narrow middle. Getting this shape wrong is the most common failure mode in current AI use, and it explains much of the consensus slop filling the internet.


The Shape of the Workflow

Every knowledge task moves through three phases.

Divergence — generating options, exploring variations, externalizing half-formed thoughts, clearing mental noise, expanding the possibility space.

Orchestration — selecting among the options, writing the brief, conditioning the execution, naming the specific wrong answer, deciding when it is done.

Execution — producing the artifact. Drafting, coding, rendering, formatting, running the playbook to completion.

AI is structurally strong at phases one and three, and structurally weak at phase two. This is not a limitation of current models. It follows from what a language model is.

The divergent end rewards dense consensus sampling. When you want 40 angles on a problem, the model’s prior — calibrated to the bulk of written human knowledge — is exactly the right instrument. The model generates variations faster than you can think them, and because the task is additive (more is better, you will filter later), consensus density is a feature, not a bug.

The convergent end rewards deterministic production. Once the brief is set — specs clear, constraints explicit, format defined — the model runs the playbook. Drafting, coding, rendering. The agentic loop compounds correctness in this regime because each step has a ground-truth check: either the output conforms to the spec or it does not.
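The "ground-truth check at each step" can be sketched as a loop that keeps producing until the output conforms to the spec. This is a minimal illustration, not a real agent framework: `conforms`, `run_to_spec`, the spec keys `max_words` and `must_include`, and `stub_model` (a stand-in for an actual model call) are all hypothetical names chosen for the example.

```python
from typing import Callable


def conforms(output: str, spec: dict) -> list[str]:
    """Return the list of spec violations; an empty list means the output conforms."""
    problems = []
    if len(output.split()) > spec["max_words"]:
        problems.append(f"over {spec['max_words']} words")
    for term in spec["must_include"]:
        if term.lower() not in output.lower():
            problems.append(f"missing required term: {term}")
    return problems


def run_to_spec(
    produce: Callable[[dict, list[str]], str],
    spec: dict,
    max_steps: int = 5,
) -> str:
    """Call the producer repeatedly, feeding back violations, until the spec is met."""
    feedback: list[str] = []
    for _ in range(max_steps):
        output = produce(spec, feedback)
        feedback = conforms(output, spec)
        if not feedback:  # ground-truth check passed
            return output
    raise RuntimeError(f"spec not met after {max_steps} steps: {feedback}")


def stub_model(spec: dict, feedback: list[str]) -> str:
    """Hypothetical stand-in for a model call; it revises only when given feedback."""
    draft = "Pricing shifts toward orchestration."
    if feedback:
        draft += " The brief is the remaining lever."
    return draft


spec = {"max_words": 50, "must_include": ["brief", "orchestration"]}
result = run_to_spec(stub_model, spec)
print(result)
```

The loop only works because the spec is checkable. Nothing in it decides whether the spec was the right one, which is the job of the middle.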

The middle is different. The middle is compression. Reading the divergent output and identifying which branch lives in the tail. Compressing tacit understanding of the real-world regime into an explicit brief the model can condition on. Deciding the frame. Naming the failure mode. These are not search problems, and they are not production problems. They are problems of judgment, and judgment in Extremistan domains (Nassim Taleb's term for domains where a few outliers dominate the outcome) requires standing outside the distribution the model is trained on.

Drawn on a page, the shape is unmistakable: two wide zones where AI compounds, connected by a narrow waist where the human sits. A barbell.
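The three phases can also be sketched as a pipeline in which the two wide ends are model calls and the narrow waist is a human step. This is an illustrative sketch only: `run_barbell` and the three stub functions are hypothetical names, and in real use `orchestrate` would be a person reading the options and writing the brief, not a function.

```python
from typing import Callable


def run_barbell(
    problem: str,
    diverge: Callable[[str, int], list[str]],  # AI: expand the option space
    orchestrate: Callable[[list[str]], str],   # human: compress options into a brief
    execute: Callable[[str], str],             # AI: produce the artifact from the brief
    n_options: int = 30,
) -> str:
    options = diverge(problem, n_options)  # wide end
    brief = orchestrate(options)           # narrow middle
    return execute(brief)                  # wide end


# Stubs so the sketch runs without any model or human in the loop.
def stub_diverge(problem: str, n: int) -> list[str]:
    return [f"angle {i} on {problem}" for i in range(1, n + 1)]


def stub_orchestrate(options: list[str]) -> str:
    chosen = options[-1]  # placeholder for human judgment about which branch to pursue
    return f"Pursue '{chosen}'. Avoid the generic overview."


def stub_execute(brief: str) -> str:
    return f"ARTIFACT built from brief: {brief}"


artifact = run_barbell(
    "pricing for a B2B tool", stub_diverge, stub_orchestrate, stub_execute, n_options=3
)
print(artifact)
```

Everything the execution step sees passes through the single `brief` string, which is why the quality of that one value bounds the quality of the artifact.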

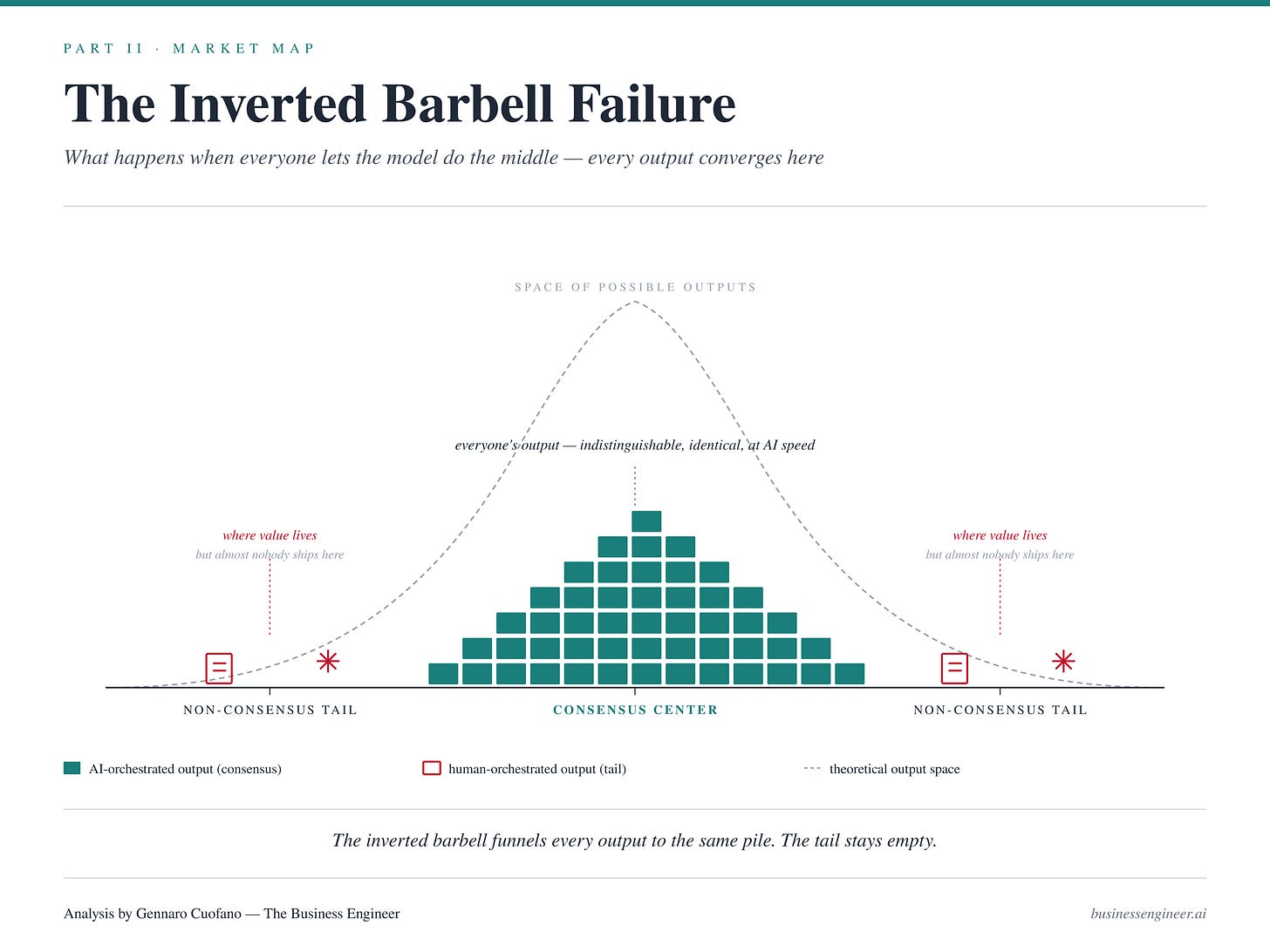

The Inverted Barbell Failure

Most knowledge workers, right now, are working the barbell backwards.

They manually brainstorm. They stare at a blank doc trying to think of five angles. They write the draft themselves. They format the deck by hand. And when it comes time to orchestrate — to read the regime, choose the frame, condition the agent — they delegate that to the model. “Write me an article about X.” “Make me a strategy for Y.”

This is the inverted barbell: human effort spent at the ends, model effort spent in the middle. It is exactly the wrong allocation. The human is competing against the model at the two things the model is built for (divergent search and convergent production), and conceding to the model the one thing the model structurally cannot do — identify which region of its own distribution is correct for this specific case.

The market consequence of the inverted barbell is visible everywhere. The proliferation of consensus content. The explosion of competent-looking but indistinguishable output. Decks that read like every other deck. Analyses that land on the same three takeaways everyone else landed on. Threads that sound like every other thread. This is not a failure of the models. It is a failure of orchestration — humans letting the model sample from the dense center without a conditioning brief that points it at the tail.

In Mediocristan domains this barely matters. Consensus output is often correct because the average is predictive. In Extremistan domains — which is most of strategy, investing, positioning, creative work, and anything where the outlier dominates — consensus output is systematically wrong. Not mildly wrong. Wrong in the specific direction of missing the event that actually determines the outcome.

The competitive implication: as model access equalizes across the economy, divergent and convergent output converge toward the same baseline. Everyone has the same brainstorming partner. Everyone has the same executor. The only non-commoditized input left is the middle — the conditioning brief, the regime read, the failure-mode naming. That is where all remaining differentiation migrates.

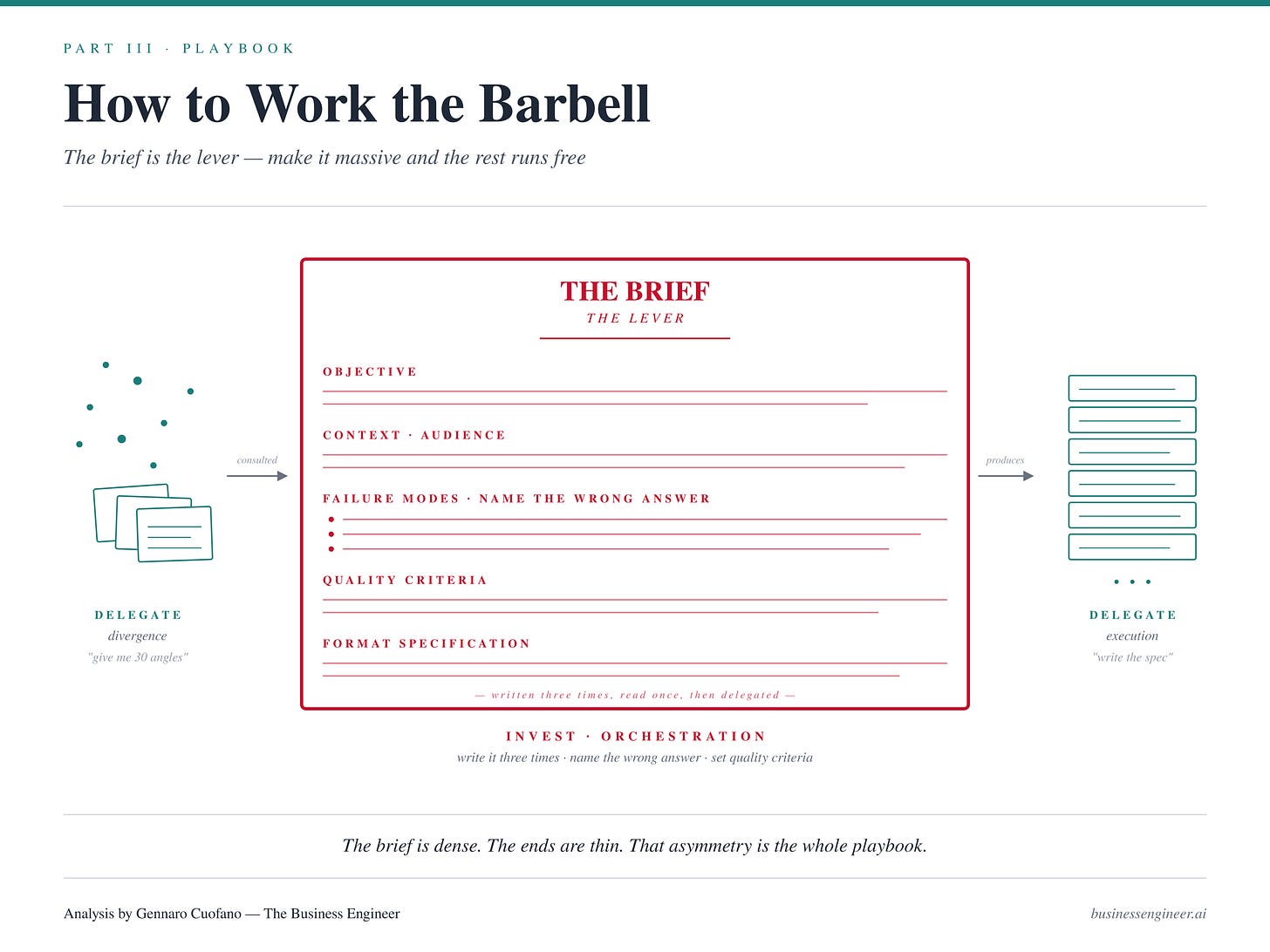

How to Work the Barbell

Three operational shifts.

Delegate the divergent end aggressively. Stop brainstorming alone. Stop trying to generate angles on a blank page. The model is better at divergent search than you are — faster, more exhaustive, less cognitively fatigued. Use it. “Give me 30 angles, 30 failure modes, 30 objections, 30 analogies, 30 ways this could be framed.” Your job is to read the output, not to generate it. The divergent output is raw material for the middle.

Delegate the convergent end aggressively. Stop drafting from scratch once the brief is set. Stop hand-formatting. Stop writing the code the model can write. The execution layer is where agentic loops compound — give it clear specs and let it run. Your job is to write the spec, not to execute against it.

Invest disproportionately in the middle. The middle is where your time should concentrate. The middle is the brief. Not the one-line prompt — the structured conditioning artifact: context, objective, audience, constraints, examples of the right region, explicit naming of the failure modes, format specification, quality criteria. This is the single document that determines whether the divergent and convergent phases produce consensus slop or tail insight.
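As a rough sketch, the structured brief can be treated as a small data structure whose fields mirror the components listed above, with a check for which ones are still empty. The class name `Brief`, its field names, and the `missing` and `render` helpers are assumptions made for illustration, not a format the article prescribes.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Brief:
    context: str
    objective: str
    audience: str
    constraints: list[str] = field(default_factory=list)
    right_region_examples: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)
    output_format: str = ""
    quality_criteria: list[str] = field(default_factory=list)

    def missing(self) -> list[str]:
        """Names of the components that are still empty."""
        return [item.name for item in fields(self) if not getattr(self, item.name)]

    def render(self) -> str:
        """Flatten the brief into one text block to condition the model on."""
        sections = []
        for item in fields(self):
            value = getattr(self, item.name)
            title = item.name.replace("_", " ").upper()
            if isinstance(value, list):
                body = "\n".join(f"- {entry}" for entry in value)
            else:
                body = value
            sections.append(f"{title}\n{body}")
        return "\n\n".join(sections)


brief = Brief(
    context="Series A SaaS company, flat growth for two quarters",
    objective="Recommend one pricing change to test next quarter",
    audience="Founder and head of sales",
    failure_modes=["Generic 'add a premium tier' advice with no regime read"],
)
print(brief.missing())
print(brief.render())
```

A check like `missing()` is one way to resist the one-line prompt: the brief does not run until every component, including the named failure modes, has been written down.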

The behaviors that build the middle are not the behaviors that feel productive. They look like reading before prompting. They look like writing the brief three times before running it. They look like sitting with the divergent output and reading the distribution — which angles live in the tail, which in the bulk, which are consensus dressed up as contrarian. They look like naming, in writing, the specific wrong answer the model will gravitate toward if uncorrected.

The orchestrator’s working day is front-loaded. The briefs take time. The reading takes time. The compression takes time. The execution, once conditioned, takes almost no time at all. This inverts the traditional time distribution of knowledge work, in which execution was where the hours went.

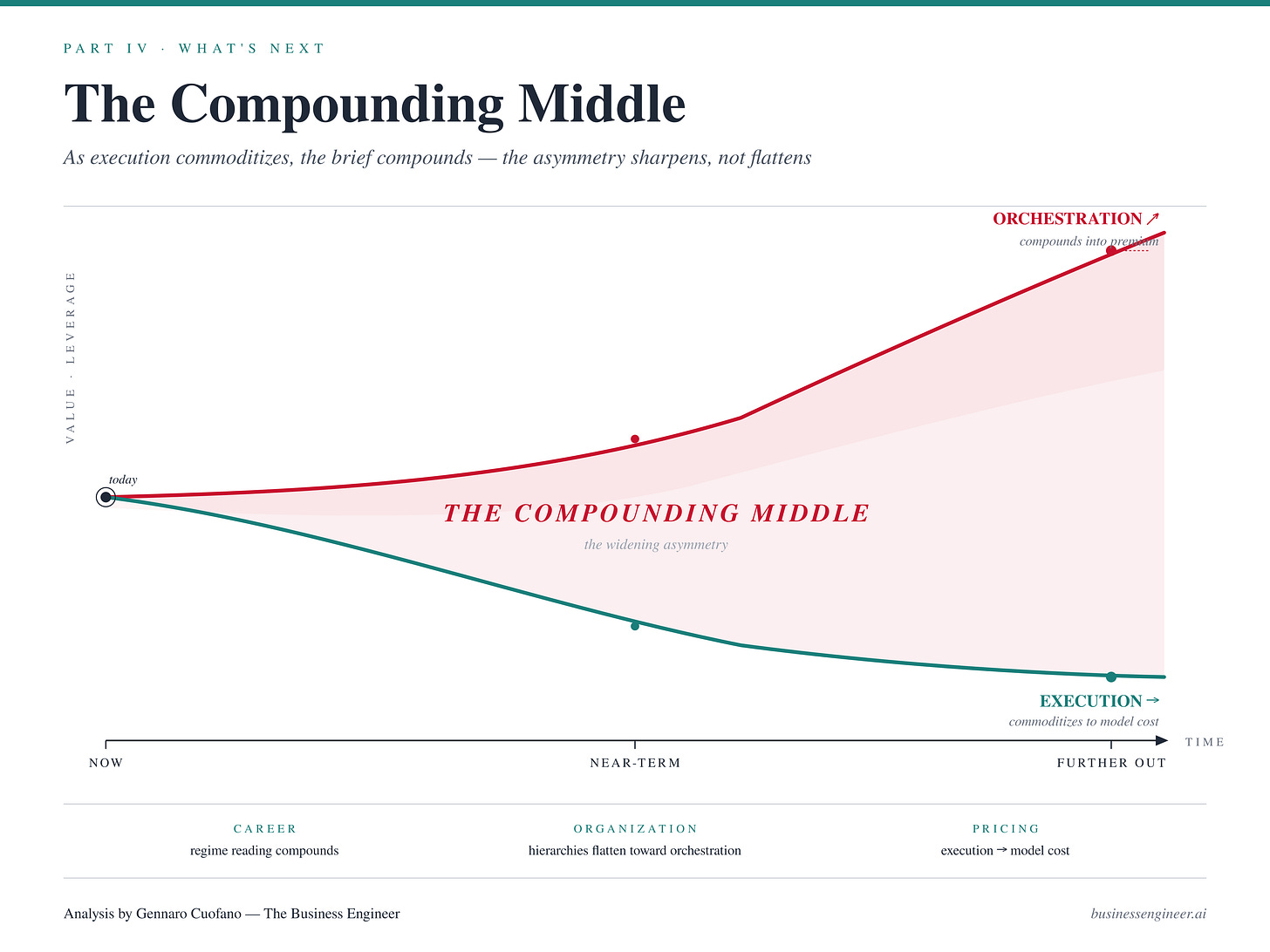

The Compounding Middle

As execution continues to automate, the convergent end of the barbell gets wider and cheaper. Drafting, coding, rendering, deck-making — all pushed further toward zero marginal cost per artifact. The divergent end similarly widens as context windows grow and models get faster at option generation.

The counterintuitive implication: the middle does not shrink. It becomes more valuable.

In Extremistan outcome domains, the quality of the conditioning brief produces disproportionate differences in outcomes. When execution is free, the only remaining lever on output quality is the brief. The narrower the middle gets as a share of total time, the more leverage each minute of it carries. A 10% improvement in brief quality, compounded through every step of an agentic loop that runs at near-zero marginal cost, can produce power-law differences in final artifact quality.
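One way to make the compounding claim concrete is a toy model, which is an assumption of this sketch and not something the argument depends on: suppose each step of an agentic loop stays on-brief with some probability that reflects brief quality, and steps are independent. The chance that the whole run stays on-brief is then that probability raised to the number of steps. The function name and the figures 0.80, 0.88, and 20 steps are illustrative only.

```python
def on_brief_probability(per_step_quality: float, steps: int) -> float:
    """Toy model: chance that every one of `steps` independent steps stays on-brief."""
    return per_step_quality ** steps


steps = 20
baseline = on_brief_probability(0.80, steps)
improved = on_brief_probability(0.88, steps)  # a 10% better per-step quality
ratio = improved / baseline                   # equals 1.1 ** 20 under this model

print(f"baseline: {baseline:.4f}")
print(f"improved: {improved:.4f}")
print(f"ratio:    {ratio:.1f}x")
```

Under these assumptions, a 10% gain per step becomes a difference of more than sixfold across 20 steps, because the end result scales as the 20th power of per-step quality. Real loops are neither independent nor this simple, so the numbers show the shape of the effect, not its size.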

Three structural consequences follow.

At the career level, the skills that matter are not prompt tricks or tool knowledge. They are the skills that have always governed judgment in Extremistan domains: reading regimes, identifying tail events, converting tacit understanding into explicit briefs, naming failure modes before they happen. These were always the skills of senior professionals. They are now the skills of any professional who wants to produce non-consensus output.

At the organizational level, the relevant hierarchy flattens toward orchestration capacity. It no longer matters who can produce the deck — everyone can. It matters who writes the brief the deck was produced from. Organizations that recognize this will redesign their structure around orchestration bandwidth, not execution capacity.

At the pricing level, services that sell execution are heading toward commoditization at model cost. Services that sell orchestration — the brief, the frame, the regime read — are heading toward premium pricing as the last remaining non-commoditized input in the workflow.

The barbell asymmetry gets sharper, not flatter, as the technology improves. This is the opposite of the dominant narrative that AI erodes human value. AI erodes the value of the ends. The middle becomes irreplaceable.

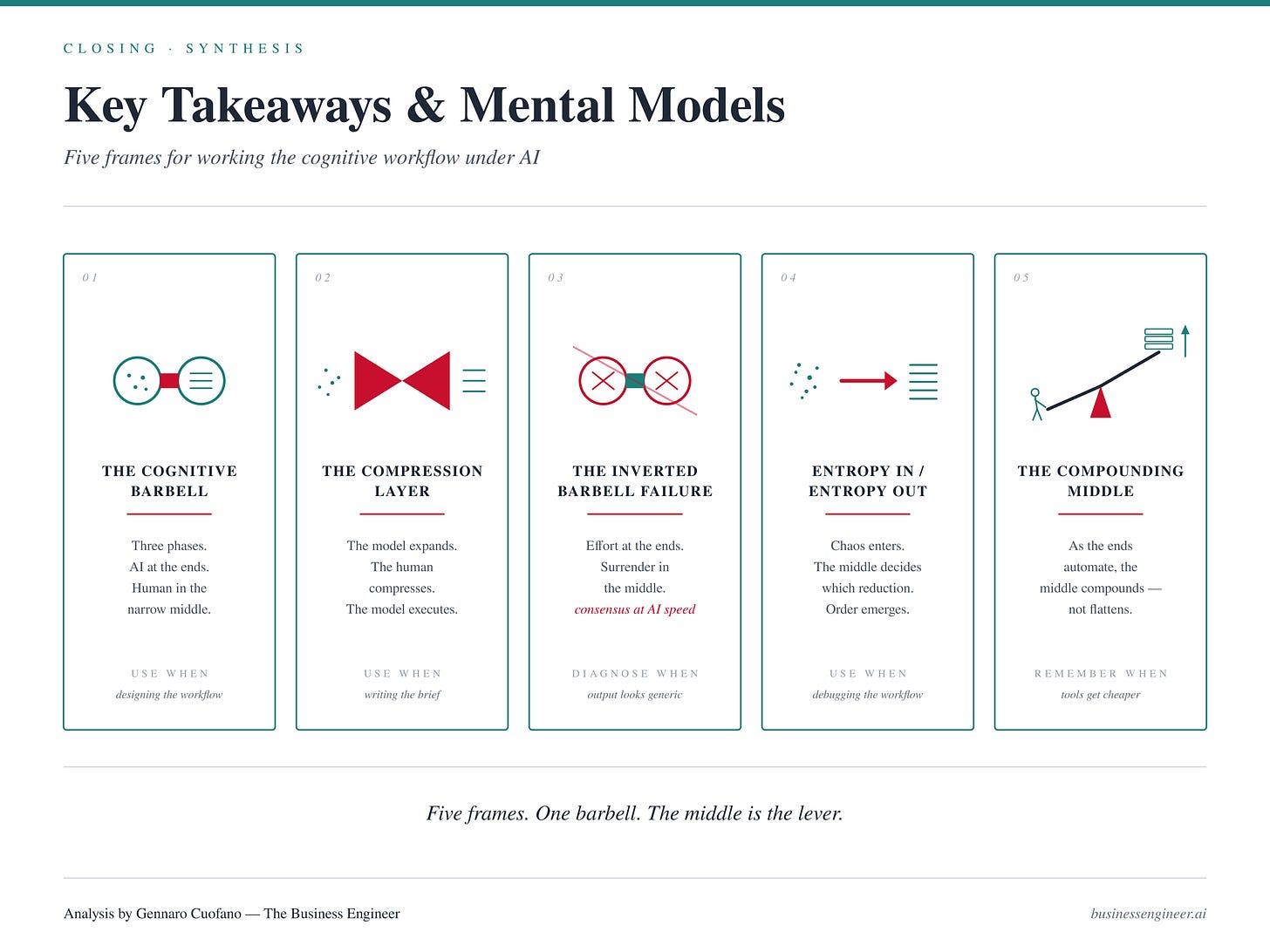

Key Takeaways & Mental Models

The Cognitive Barbell — Workflows split into three phases (divergent, orchestration, convergent), and AI is critical at the two ends while the human is critical in the narrow middle. The shape of cognitive labor under AI is barbell-shaped, not linear.

The Compression Layer — The human’s job is reducing divergent option space to a single conditioning brief. Orchestration is directed entropy reduction. The model expands; the human compresses; the model executes.

The Inverted Barbell Failure — The dominant failure mode of current AI use: humans spend effort at the ends (manual brainstorming, manual execution) and delegate the middle to the model. This produces consensus output at AI speed — the worst possible combination in Extremistan domains.

Entropy In / Entropy Out — Divergent phases add entropy (more options), convergent phases remove it (one artifact), and the middle decides which reduction. Only the human can decide which reduction because only the human stands outside the model’s training distribution.

The Compounding Middle — As the ends automate further, the middle does not shrink in importance — it compounds. Free execution means brief quality produces power-law differences in output. The narrower the middle becomes as a share of total time, the more leverage each minute of it carries.

Recap: In This Issue!

AI does not replace human work uniformly. It reshapes it into a barbell:

AI dominates the edges (divergence and execution)

Humans dominate the middle (orchestration and judgment)

Most failures come from working this shape backwards

The Architecture: Three Phases

1. Divergence (Expand)

Generate options, ideas, variations

AI strength:

fast, exhaustive, non-fatiguing

consensus sampling works here

Function:

Increase possibility space

2. Orchestration (Compress)

Select the right path

Define the brief

Identify failure modes

Choose the frame

Human domain:

judgment

regime reading

tail identification

3. Execution (Produce)

Draft, code, format, render

AI strength:

deterministic output

compounding via iteration

Function:

Turn specification into artifact

The Barbell Shape

Wide ends → AI leverage

Narrow middle → human leverage

Key property:

The middle determines whether the system produces:

insight (tail)

or consensus (average)

The Core Failure: Inverted Barbell

Most people:

brainstorm manually

execute manually

delegate orchestration to AI

Result:

humans compete where AI is strongest

AI operates where it is weakest

Output:

polished, coherent, generic content

The Mechanism Behind Bad Output

AI defaults to center of distribution

Without conditioning:

selects average answers

scales them with fluency

In Extremistan domains:

consensus = systematically wrong

The Competitive Shift

Divergence → commoditized

Execution → commoditized

Orchestration → only remaining moat

Meaning:

same tools

same models

different outcomes

based on brief quality

The Playbook

1. Delegate Divergence

Use AI for:

ideas

angles

failure modes

Your role = filter, not generate

2. Delegate Execution

Once the brief is set:

stop writing manually

stop formatting manually

Your role:

Define specs, not produce output

3. Invest in the Middle

Write structured briefs:

context

objective

constraints

failure modes

format

Behavior shift:

more reading

more thinking

more rewriting briefs

The Compounding Effect

As AI improves:

divergence gets faster

execution gets cheaper

Counterintuitive result:

orchestration becomes more valuable, not less

Strategic Implications

Individual Level

Advantage shifts to:

judgment

framing

compression

Organizational Level

Hierarchy shifts from:

execution capacity

to orchestration capacity

Pricing Level

Execution → commoditized

Orchestration → premium

Mental Models

Cognitive Barbell → AI at ends, human in middle

Compression Layer → human reduces entropy

Inverted Barbell Failure → wrong allocation of effort

Entropy Flow → expand → compress → execute

Compounding Middle → brief quality drives output quality

Strategic Compression

Looks like: AI automates work

Reality: AI redistributes leverage

The ends scale.

The middle decides what scales.

With massive ♥️ Gennaro Cuofano, The Business Engineer