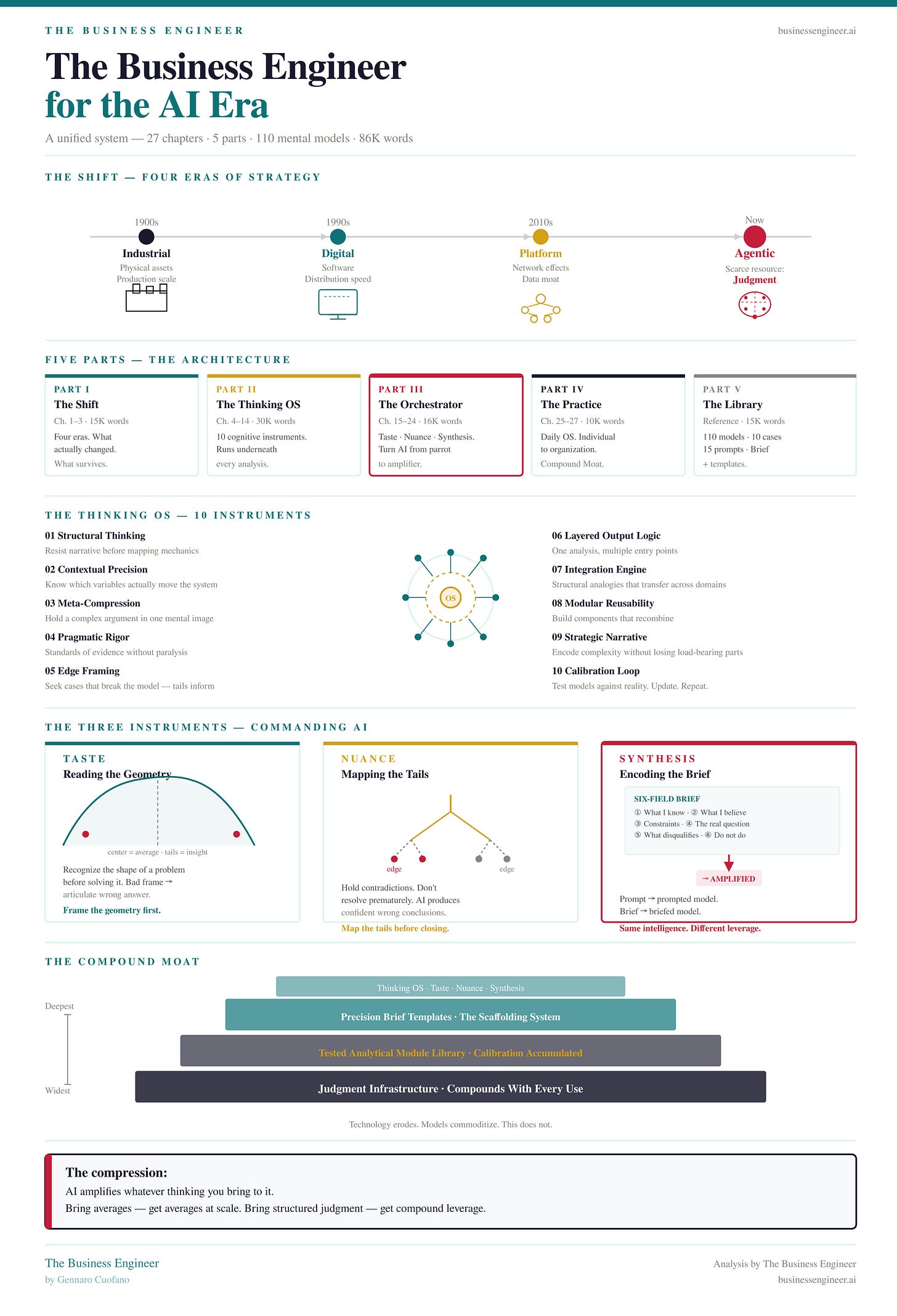

AI & The Importance of Systems Thinking

Most practitioners approach system prompting the way they approach a search engine. You type something in. Something comes out. If the output is wrong, you adjust the input.

The mental model is linear: instruction in, compliance out. The practitioner’s job, in this framing, is to write clearer instructions.

This mental model is not just incomplete. It is structurally misleading in a way that compounds over time. The practitioner who holds it gets progressively better at writing instructions and progressively more confused about why the outputs keep disappointing them in the same ways.

The failure modes feel arbitrary — sometimes the model does what you asked, sometimes it doesn’t, and the gap between the two cases is not obvious from the instruction itself.

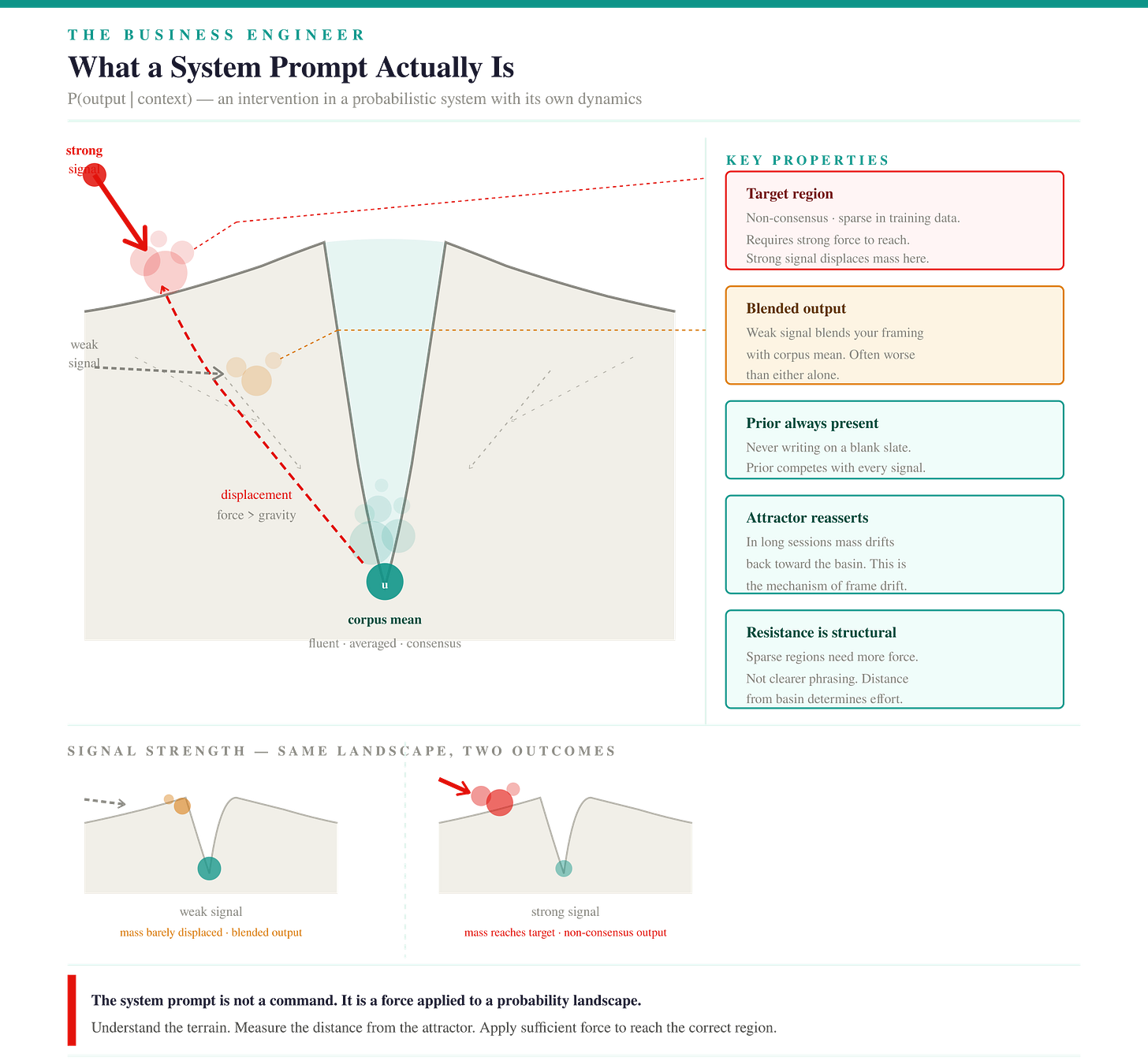

The reason is that these practitioners are intervening in a system while believing they are issuing commands. These are not the same activity, and the difference between them is not a matter of degree. It is a structural difference that determines what the practitioner can and cannot accomplish.

The correct mental model is systems thinking. Not as a metaphor. As a literal description of what is happening when you write a system prompt.

Why the linear model fails:

It locates the problem in the wrong place. When the output is wrong, the instruction-writer edits the instruction. The systems thinker asks which structural element of the system generated the failure. These are different diagnoses, and they produce different interventions.

It has no theory of resistance. A command-and-compliance model has no account of why a well-formed instruction consistently fails to produce a desired output. The system has its own dynamics that resist certain kinds of conditioning regardless of how clearly the instruction is written.

It compounds in the wrong direction. Each iteration of instruction refinement feels like progress and may be making the underlying structural problem worse — collapsing variance, reinforcing confirmation loops, producing outputs that satisfy the surface specification while drifting further from the correct distributional region.

It has no theory of leverage points. It treats all prompt elements as equally powerful. They are not. The difference in effect on output between a parameter-level intervention (tightening a length limit, say) and a paradigm-level intervention (changing what the model understands its task to be) is enormous, and conflating the two produces systematic underinvestment in the interventions that actually work.