AR as the Remote Control for Agents

Three years ago, I introduced the idea of “AI Convergence”: the point at which AI becomes the missing layer that finally enables long-promised technologies to work.

Many of those technologies stalled because they never crossed the chasm from early adopters to real-world utility. Augmented Reality (AR) was one of the clearest examples.

That thesis is now starting to materialize. Recent developments, including OpenClaw, reinforce the argument: we are approaching the inflection point where AR can finally deliver on its promise.

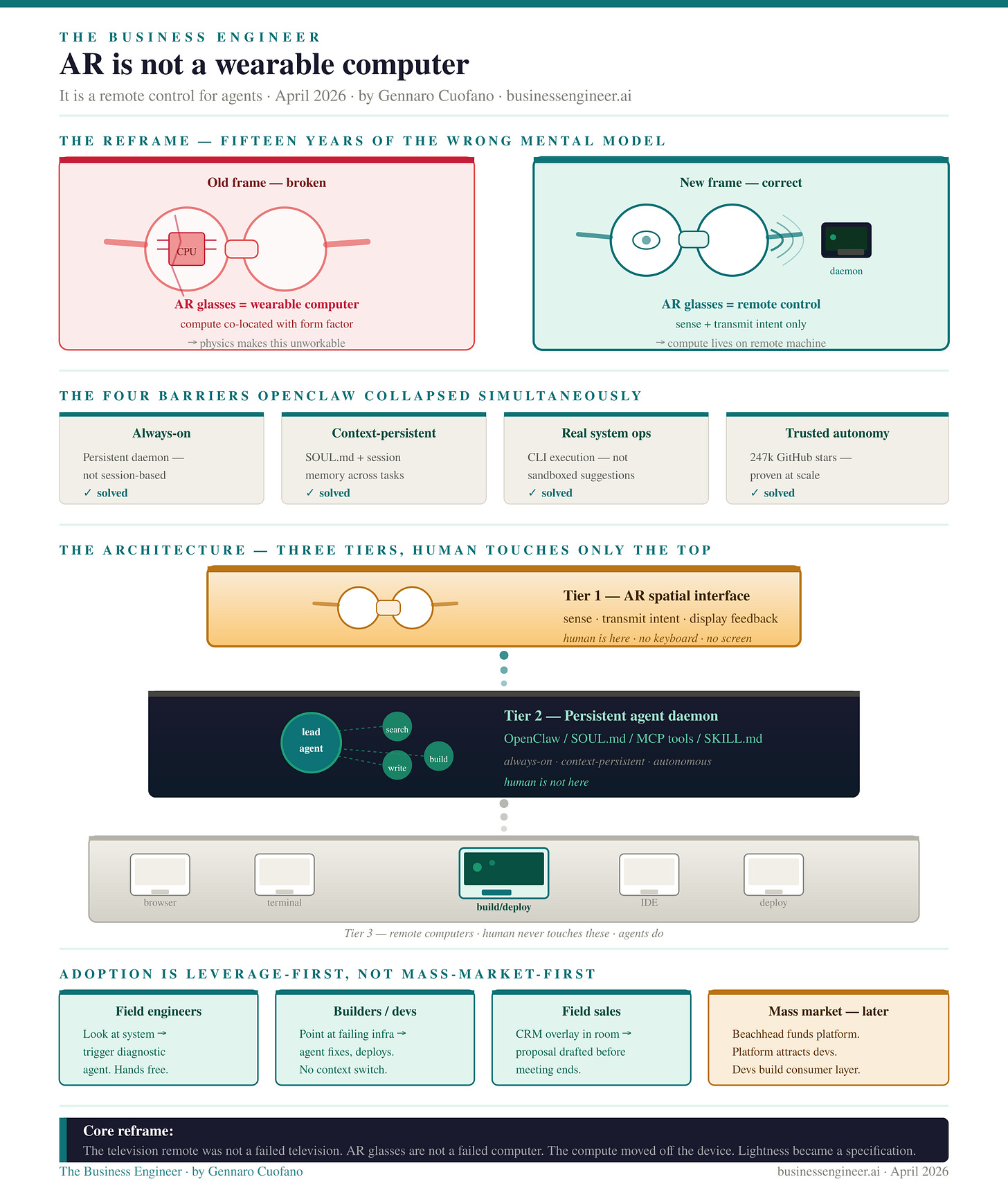

However, this requires a shift in how we frame AR.

AR is not a computing platform, at least not in the next decade. Treating it as such leads to the wrong expectations.

Instead, AR should be understood as a control interface for AI agents. It acts as a remote layer through which agents interact with and operate traditional computing systems on our behalf.

For this transition to take hold, three structural shifts are required:

1. AI makes AR economically and functionally viable at scale: Without AI, AR lacked a compelling use case. AI provides the utility layer that justifies adoption.

2. AR is not the computer; it is the interface for agents controlling computers: The value shifts from local computation to orchestration of agent-driven workflows.

3. AR is not (yet) a consumer device; it is a business tool first: Its immediate impact will be in professional environments, where productivity gains justify adoption before consumerization follows.

Every AR product cycle for the past 15 years has ended the same way. The hardware ships. The reviews note the impressive optics, the uncomfortable weight, the inadequate battery, and the lack of killer apps. Sales disappoint. The team pivots or shuts down.

Google Glass. Magic Leap. HoloLens. Vision Pro. Spectacles. Each one was described as a computer you wear. Each one failed as a computer you wear.

The failure was not engineering. It was the mental model.

The frame was wrong from the start, but not because nobody was smart enough to see the right one.

The right frame depended on an architectural precondition that did not exist until 2025: the persistent agentic daemon. Once that precondition arrived, the correct mental model for AR became available for the first time.

The correct mental model is this: AR glasses are not a wearable computer. They are an on-the-go remote control for agents that use computers on your behalf.
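
To make the division of labor concrete, here is a minimal sketch of that mental model in Python. Everything in it is an illustrative assumption rather than a description of any real product: the names `Intent`, `AgentDaemon`, and `Glasses` are hypothetical, and the daemon only records requests instead of actually driving applications. The point is the shape: the glasses capture an instruction and display a result, while the persistent agent does the work on a computer elsewhere.

```python
from dataclasses import dataclass, field


@dataclass
class Intent:
    """A short instruction captured by the glasses (voice, gaze, gesture)."""
    text: str


@dataclass
class AgentDaemon:
    """Hypothetical always-on agent that operates a computer for the user."""
    log: list[str] = field(default_factory=list)

    def handle(self, intent: Intent) -> str:
        # A real daemon would plan and drive applications here;
        # this sketch only records the request and reports completion.
        self.log.append(intent.text)
        return f"done: {intent.text}"


@dataclass
class Glasses:
    """Thin client: captures an intent, forwards it, returns the status to show."""
    daemon: AgentDaemon

    def say(self, text: str) -> str:
        # No local computation beyond capture and display.
        return self.daemon.handle(Intent(text))


daemon = AgentDaemon()
glasses = Glasses(daemon)
status = glasses.say("reschedule my 3pm meeting")
print(status)
```

In this framing the glasses hold no application logic at all. Swapping the daemon for a more capable one changes what the wearer can do without changing the device.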