Micron & The AI Memory Bottleneck

Premium Analysis

There is a number buried in Micron’s FQ2 earnings that most analysts skipped. It is not the $23.9B revenue or the 75% gross margin.

It is this: FQ3 single-quarter guidance of $33.5B exceeds Micron’s full-year revenue for every year in the company’s history through FY2024.

One quarter. More than Micron produced in any full fiscal year through FY2024.

That number is not a financial achievement. It is a diagnostic.

It tells you the rate at which the physical infrastructure of AI is being built, and therefore the rate at which the constraint on that infrastructure is tightening. Memory is the constraint. And the constraint is accelerating.

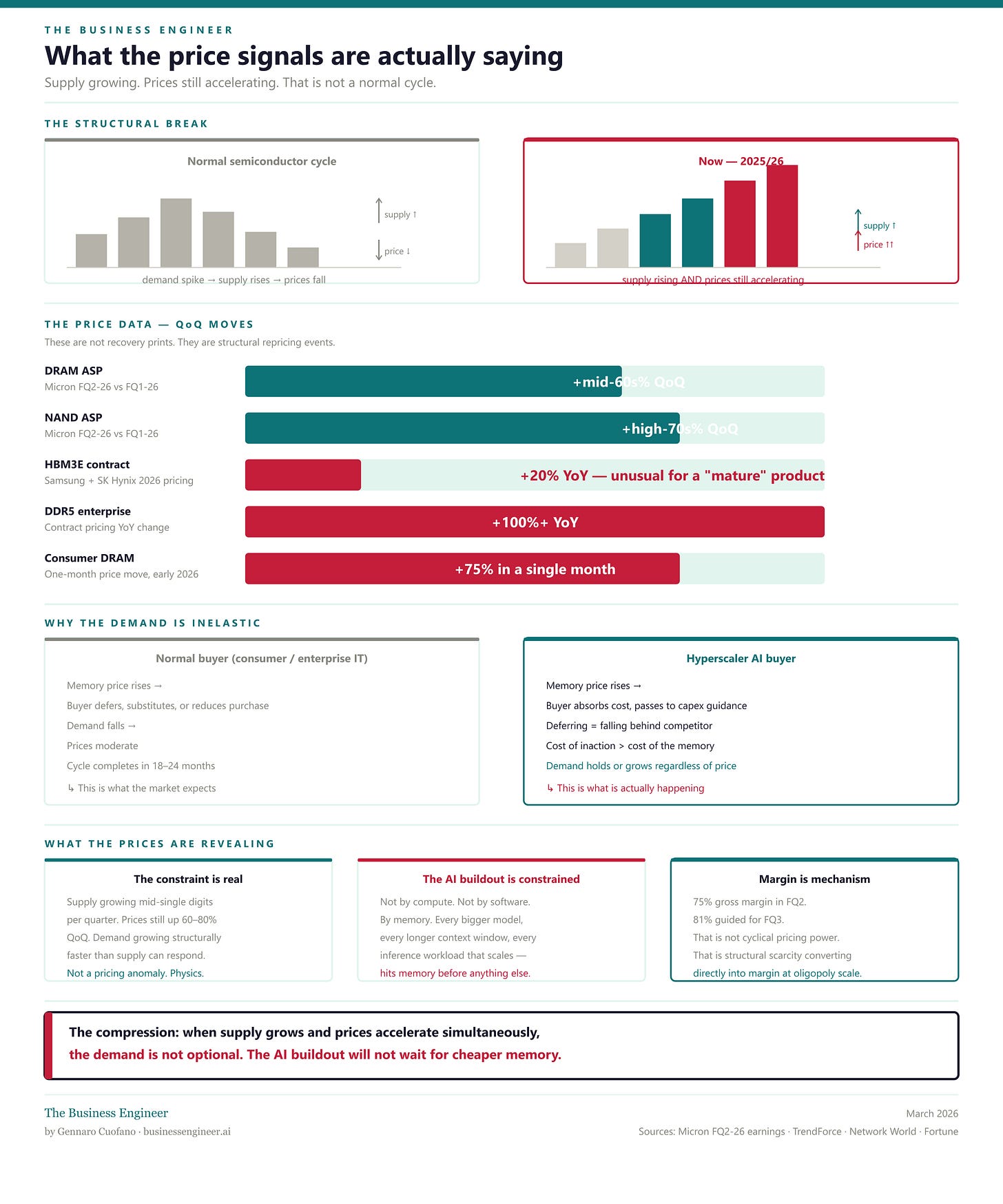

What the price signals are actually saying

Start with the price data, because it is the least ambiguous signal in the report.

We can peel the onion back to the core.

DRAM average selling prices rose in the mid-60s percentage range quarter-over-quarter. NAND ASPs rose in the high-70s percentage range.

HBM3E contract prices were raised roughly 20% for 2026, even as the product was supposed to be entering a mature phase of its cycle. Enterprise DDR5 contract pricing has doubled year-over-year. Consumer DRAM prices rose 75% in a single month earlier this year.

These are not the price signals of a normal demand recovery. In a normal semiconductor cycle, prices rise as demand exceeds inventory, then moderate as capacity comes online.

What is happening here is structurally different: capacity is coming online — Micron’s DRAM bit shipments grew mid-single digits QoQ — and prices are still rising sharply.

Supply is growing, and prices are accelerating.
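A rough way to see how the two DRAM figures combine: revenue is price times volume, so the growth rates compound rather than add. The sketch below uses assumed point values (65% for "mid-60s" ASP growth, 5% for "mid-single digits" bit growth); these are illustrative stand-ins for the ranges quoted above, not reported numbers, and the result is not Micron's reported DRAM revenue growth.

```python
def revenue_growth(price_growth: float, bit_growth: float) -> float:
    """QoQ revenue growth implied by ASP growth and bit-shipment growth.

    Revenue = price x bits, so the growth factors multiply.
    """
    return (1.0 + price_growth) * (1.0 + bit_growth) - 1.0


# Assumed point values for the ranges quoted in the text (hypothetical).
asp_growth = 0.65  # "mid-60s percentage range"
bit_growth = 0.05  # "mid-single digits"

total = revenue_growth(asp_growth, bit_growth)

# Fraction of the implied growth attributable to price alone.
price_share = asp_growth / total

print(f"implied QoQ revenue growth: {total:.2%}")
print(f"share attributable to price: {price_share:.2%}")
```

Under these assumptions, nearly all of the implied growth comes from price rather than volume: supply is rising, and prices are rising much faster.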

That combination occurs only when demand is structurally outgrowing what supply can add, and when demand is inelastic: buyers must have the product regardless of price.

Both conditions apply. Hyperscalers are not deferring data center builds due to higher DRAM costs.

They are absorbing the price increase and passing it into capex.

The AI infrastructure buildout is driven by a logic of competitive necessity, making it price-insensitive in a way consumer electronics demand is not. When your rival is building, and you are not, the cost of inaction exceeds the cost of the memory.

What this tells us about the AI landscape: the AI buildout is not constrained by compute alone, and it is not constrained by software. It is constrained by memory. Every model that gets bigger, every context window that gets longer, every inference workload that scales runs into memory bandwidth and memory capacity before it runs into anything else. The price signals are the market’s real-time acknowledgment of that constraint.