State of AI Fluency

This Week In Business AI [Week #12-2026]

We now have four Anthropic data sources published in under 60 days, forming the most complete empirical picture of human-AI collaboration capability available anywhere.

The question they collectively answer is not whether people are using AI. It is whether they are getting better at it — in what specific ways, with what structural consequences for how work is organized, and with what implications for where this AI paradigm is actually heading.

The answer is yes and no. And the distinction between those two answers is where the strategic insight lives.

Reading the Shape of the AI Paradigm from the Data

Most analyses of AI’s economic impact start from model capability and reason forward. This one starts from observed behavior — what millions of people actually do when they use AI — and reasons backward to the shape of the paradigm. The behavioral data is more constraining than the capability data. It tells us not what AI can do, but what it is actually doing, and how the humans on the other side are responding.

Several structural features of the current AI paradigm emerge clearly from the data stack.

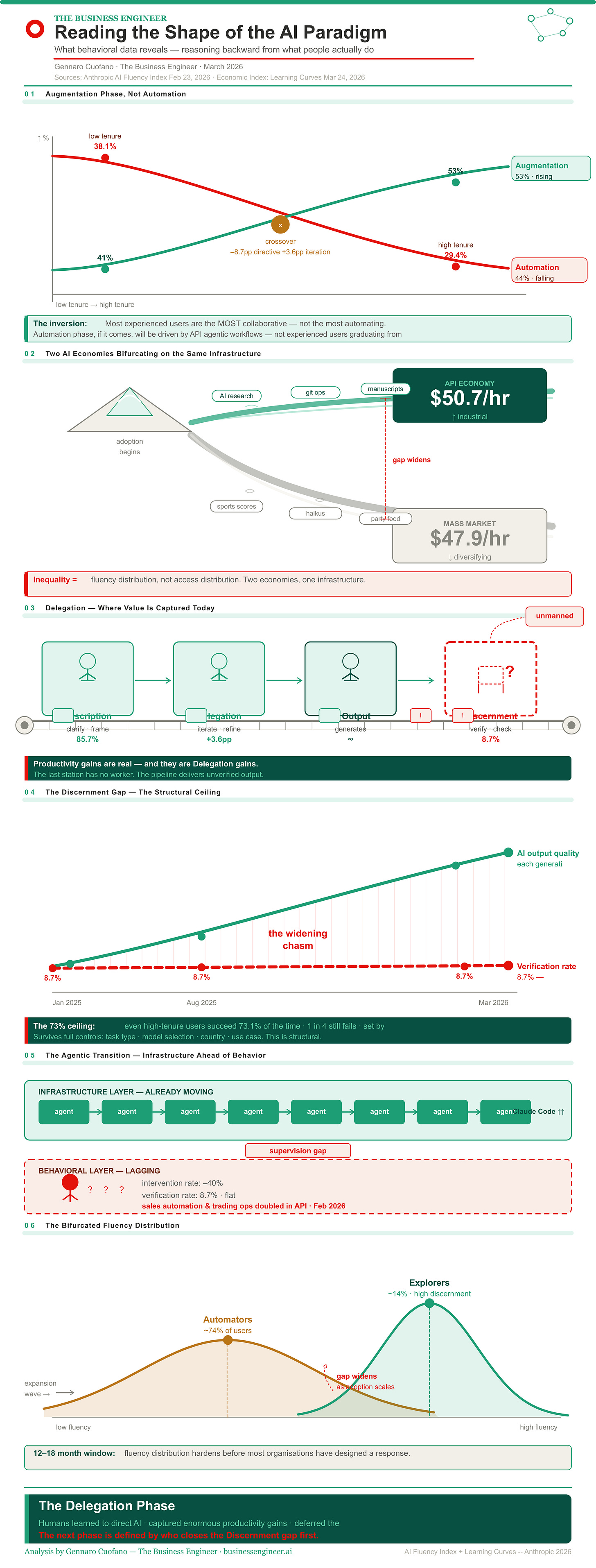

We are in an augmentation phase, not an automation phase — and the data shows exactly why.

The Economic Index classifies conversations into two broad modes: automation (directive and feedback loop interactions, where the human gives instructions and accepts outputs) and augmentation (task iteration, validation, and learning interactions, where human and AI genuinely collaborate). Augmentation sits at 53% and is rising slightly. Automation sits at 44% and is falling.
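To make the roll-up concrete, here is a minimal sketch of how per-conversation interaction labels could be aggregated into the two modes. The grouping follows the description above; the label strings, the `mode_shares` helper, and the sample list are illustrative assumptions, not the Economic Index's actual pipeline or data.

```python
from collections import Counter

# Grouping taken from the text; the exact label strings are assumed.
MODE_BY_PATTERN = {
    "directive": "automation",
    "feedback_loop": "automation",
    "task_iteration": "augmentation",
    "validation": "augmentation",
    "learning": "augmentation",
}


def mode_shares(patterns):
    """Return each mode's share of conversations, in percent."""
    counts = Counter(MODE_BY_PATTERN.get(p, "unclassified") for p in patterns)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {mode: 100.0 * n / total for mode, n in counts.items()}


# Toy input for illustration only, not real usage data.
sample = [
    "directive", "task_iteration", "learning", "feedback_loop",
    "validation", "task_iteration", "other", "directive",
]
shares = mode_shares(sample)
print(shares)
```

Labels outside the five named patterns land in an "unclassified" bucket, which is one plausible reason the two headline shares need not sum to 100%.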

This is not what the automation narrative predicted. The dominant story about AI in knowledge work was that it would first augment and then automate: humans would collaborate with AI at first and eventually hand over tasks entirely. The data shows the opposite trajectory. As users gain experience, they become more collaborative, not less. High-tenure users are 8.7 percentage points (pp) less directive, 3.6pp more iterative, and 3.4pp more often in learning mode. The most experienced users are the most augmentative. The least experienced users are the most automating.

This inverts the standard model. The automation phase, if it comes, will not be driven by experienced users graduating from augmentation. It will be driven by API-level agentic workflows replacing entire task categories before users even develop augmentative habits. The consumer-facing platform (Claude.ai) is trending toward augmentation. The developer-facing platform (the API) is trending toward automation and concentration — the top 10 O*NET tasks now represent 33% of API traffic, up from 28%. These are two different AI economies running on the same infrastructure.
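The concentration figure quoted above is a top-k share: the fraction of all traffic accounted for by the k most frequent tasks. A minimal sketch of that calculation, assuming a simple mapping from task name to request count (the `top_k_share` helper and the toy counts are hypothetical, not the Index's code or data):

```python
def top_k_share(task_counts, k=10):
    """Percent of total traffic accounted for by the k most frequent tasks."""
    total = sum(task_counts.values())
    if total == 0:
        return 0.0
    top = sorted(task_counts.values(), reverse=True)[:k]
    return 100.0 * sum(top) / total


# Toy counts for illustration only.
toy_counts = {
    "debug code": 50,
    "write tests": 30,
    "draft email": 15,
    "summarize report": 5,
}
top2 = top_k_share(toy_counts, k=2)
print(top2)
```

A rising top-k share means traffic is piling into fewer task types, which is the sense of "concentration" used here.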

Delegation is the dominant behavioral mode — and it is developing faster than any other dimension.

Across the full user base, the most prevalent behavioral signal is iterative refinement at 85.7%. Users are good at directing, prompting, refining, and re-prompting. The tenure data confirms this as a genuine learning curve: experienced users are significantly more iterative, more collaborative, more capable of managing the human-AI interaction loop.

This makes sense as the primary skill to develop first. Delegation is the most visible, most actionable, most immediately rewarded fluency behavior. Better prompting produces visibly better outputs. The feedback loop is tight and immediate. The skill builds through use without any designed intervention.

The implication for how we understand the AI paradigm: the economic value of AI is currently being captured almost entirely through Delegation efficiency. The productivity gains being documented (30% gains in software development, 25% average labor cost savings in AI-adjacent tasks) are Delegation productivity gains. They are real. They are also incomplete, because they measure how much the AI pipeline produces without measuring the quality of what it produces.

Discernment is the absent third pillar — and its absence defines the current paradigm’s limits.

Every AI paradigm has a structural constraint that determines its ceiling. In this one, the constraint is not model capability. It is not adoption rate. It is not infrastructure availability. The constraint is the human capacity to evaluate what AI produces.

Fact-checking at 8.7%. Reasoning questioned at 14.6%. Missing context identified at 20.3%. These are not just low numbers — they are structurally stable low numbers that do not improve with tenure, do not improve with task complexity, and do not improve with output stakes. The Discernment floor is not moving.

This defines the current AI paradigm precisely: a paradigm of highly capable production with systematically insufficient verification. AI can generate outputs of professional quality across an enormous range of tasks. The humans receiving those outputs cannot reliably evaluate them. The gap between what AI produces and what humans can verify is the defining constraint of the current epoch — not a temporary bug to be resolved through better prompting tutorials, but a structural feature that requires designed organizational intervention.

The bifurcation of the user base is the most underappreciated structural feature of the paradigm.

The data reveals two AI economies developing in parallel. In the API — where experienced developers and enterprises build automated workflows — task concentration is rising, task value is rising ($50.7/hr average), and agentic architectures are fragmenting coding work into hundreds of parallel API calls. This is the industrial AI economy: high-skill, high-value, highly automated.

In Claude.ai, where individual knowledge workers use AI conversationally, task diversity is rising, average task value is falling ($47.9/hr, down from $49.3/hr), and personal use has risen from 35% to 42%. This is the mass-market AI economy: spreading across lower-value tasks, broader populations, less specialized use cases.
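The figures in this section mix two kinds of change: shares move in percentage points, while dollar values move in relative terms. A short sketch, using only numbers quoted in this piece, keeps the two apart. The helper names are assumptions for illustration.

```python
def point_change(old, new):
    """Absolute change; for shares in percent this is percentage points."""
    return new - old


def relative_change(old, new):
    """Change relative to the starting value, in percent."""
    return 100.0 * (new - old) / old


# Personal use share on Claude.ai: 35% to 42%.
personal_pp = point_change(35, 42)
personal_rel = relative_change(35, 42)

# Average task value on Claude.ai: $49.3/hr to $47.9/hr.
value_rel = relative_change(49.3, 47.9)

# Top 10 O*NET tasks as a share of API traffic: 28% to 33%.
api_top10_pp = point_change(28, 33)

print(personal_pp, round(personal_rel, 1), round(value_rel, 2), api_top10_pp)
```

Read this way, personal use rose 7 points (a 20% relative increase), while average task value slipped by just under 3%.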

These two economies are bifurcating. The industrial economy is automating and concentrating. The mass-market economy is diversifying and diluting. The fluency data maps onto this bifurcation precisely: the Explorer cohort is building the industrial AI economy, with complex, work-oriented, high-tenure use. The Automator and Validator cohorts are building the mass-market AI economy, with simpler, more personal, lower-stakes use. The inequality of AI benefit is not going to be primarily about who has access. It is going to be about who has developed the Discernment to use access well.

The agentic transition is already happening at the infrastructure level, ahead of the behavioral level.

Claude Code’s agentic architecture is growing rapidly as a share of API traffic — splitting coding work into smaller, directive API calls that operate with minimal human oversight. Business sales and outreach automation, automated trading and market operations — these are workflow categories that doubled in API share between November 2025 and February 2026. The agentic economy is not a future scenario. It is a current infrastructure reality.

But the behavioral data shows that the humans managing these agentic systems are not developing the skills to supervise them. Intervention rates in agentic workflows have fallen 40%. Verification behaviors remain stuck at low levels, with fact-checking in the single digits. The organizational structures that should be providing oversight (the middle management layer, the senior review function) are being compressed on the assumption that AI-assisted productivity gains make them redundant.

The shape of the AI paradigm that emerges from all of this is specific: a paradigm of accelerating agentic capability meeting stagnant human verification capacity, with organizational structures adapting to the productivity signal while ignoring the quality signal. The next phase of this paradigm will be defined by which organizations build the Discernment infrastructure to close that gap, and which ones discover its cost when unverified output fails catastrophically.

The weekly newsletter is in the spirit of what it means to be a Business Engineer:

We always want to ask three core questions:

What’s the shape of the underlying technology that connects the value prop to its product?

What’s the shape of the underlying business that connects the value prop to its distribution?

How does the business survive in the short term while adhering to its long-term vision through transitional business modeling and market dynamics?

These non-linear analyses aim to isolate the short-term buzz and noise, identify the signal, and ensure that the short-term and the long-term can be reconciled.