The Agent Manifesto

What You Can Do For Your AI

That single line is the inversion at the heart of this moment. For three years, we asked what AI could do for us: answer, draft, code, summarize, solve. We benchmarked weights and measured outputs. We treated the model as a vending machine and the prompt as a coin. We forgot the architecture we were operating inside.

The architecture is a loop. The loop is a compounding function. What it compounds is what you put into it.

Left on its defaults, the loop converges on the consensus center, and the consensus center is cheap. The right tail, where the high-value answers live, is expensive. And what determines where the loop converges is not the model. It is the orchestrator.

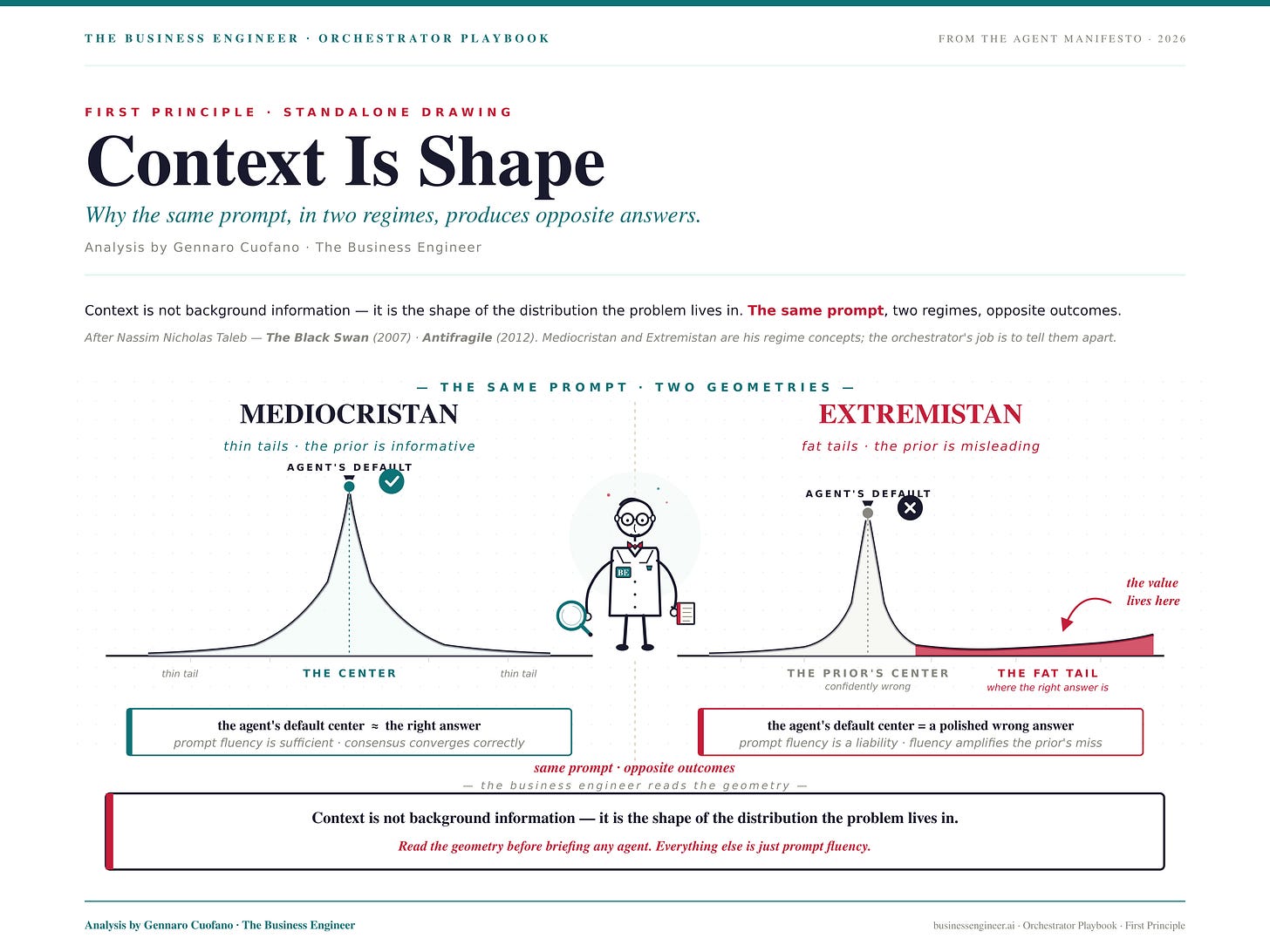

Context Is Shape

Before anything else, the Playbook’s first principle: context is not background information — it is the shape of the distribution the problem lives in.

Every problem has a geometry. Some problems live in Mediocristan — the regime of thin tails, where the average case is close to every case, where the prior is a reliable guide, and where the consensus answer is usually the right answer. Other problems live in Extremistan — the regime of fat tails, where the average is a fiction, where the prior is systematically wrong, and where the value lives in the specific tail that the prior cannot see.
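One quick way to feel the difference between the two regimes is to ask how much of a total the single largest observation accounts for. The sketch below is an illustration, not part of the Playbook: it uses Python's standard `random` module, with a normal distribution standing in for Mediocristan and a Pareto distribution with shape 1.1 standing in for Extremistan. Both distribution choices and all parameters are assumptions picked for the demonstration.

```python
import random


def largest_share(samples: list[float]) -> float:
    """Fraction of the total contributed by the single largest observation."""
    return max(samples) / sum(samples)


rng = random.Random(42)  # fixed seed so the run is repeatable
n = 10_000

# Thin tails (assumed stand-in for Mediocristan): mean 100, standard deviation 15.
thin = [rng.gauss(100, 15) for _ in range(n)]
# Fat tails (assumed stand-in for Extremistan): Pareto with shape alpha = 1.1.
fat = [rng.paretovariate(1.1) for _ in range(n)]

print(f"thin-tailed: largest value is {largest_share(thin):.4%} of the total")
print(f"fat-tailed:  largest value is {largest_share(fat):.4%} of the total")
```

In the thin-tailed sample no single observation moves the total; in the fat-tailed sample a handful of draws can dominate it. That is the sense in which the "average case" the prior has learned says little about the case in front of you.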

The same prompt, sent into these two regimes, produces two opposite outcomes.

In Mediocristan, the agent’s center-of-distribution default is correct. Prompt fluency is sufficient. The loop converges on the right answer because the right answer is the center.

In Extremistan, the agent’s center-of-distribution default is a precise, polished, confidently wrong answer. Prompt fluency is a liability. It produces more elaborate, more internally consistent, more rhetorically persuasive versions of the wrong answer — at AI speed and at scale.

The Amplification Warning: AI amplifies starting conditions. It does not fix a misread of the distribution. It amplifies it. If your starting conditions include well-developed distribution-reading, AI amplifies your advantage. If your starting conditions are default assumptions inherited from surface familiarity, AI amplifies those defaults. The amplification is neutral. The direction is not.

This is why the work begins before the prompt.

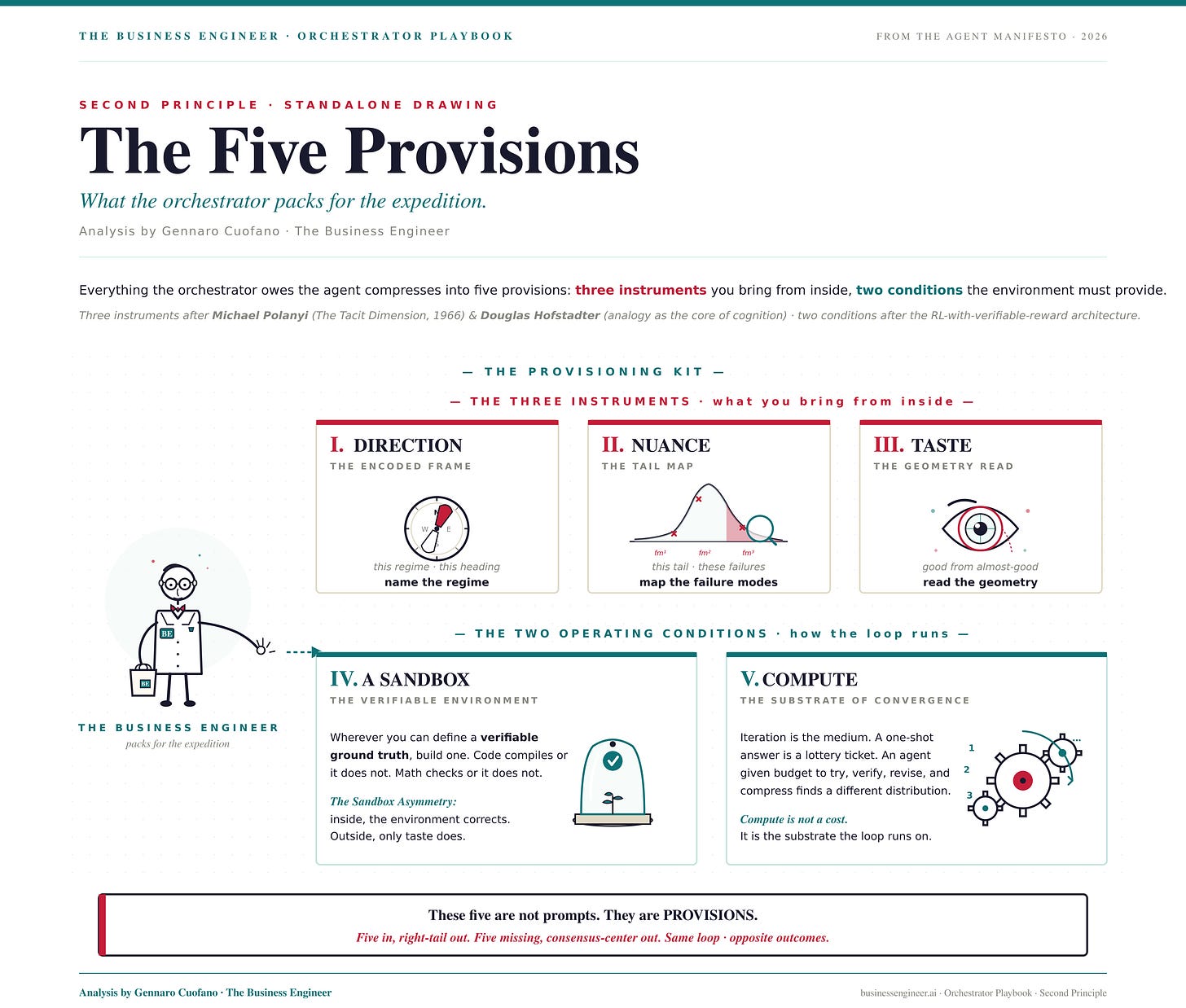

The Five Provisions

Everything the orchestrator owes the agent can be compressed into five provisions. Three of them come from inside the orchestrator — the instruments of distribution-reading. Two of them define the environment the loop runs in.

These are not prompts. They are provisions. They are what the orchestrator packs for the expedition.

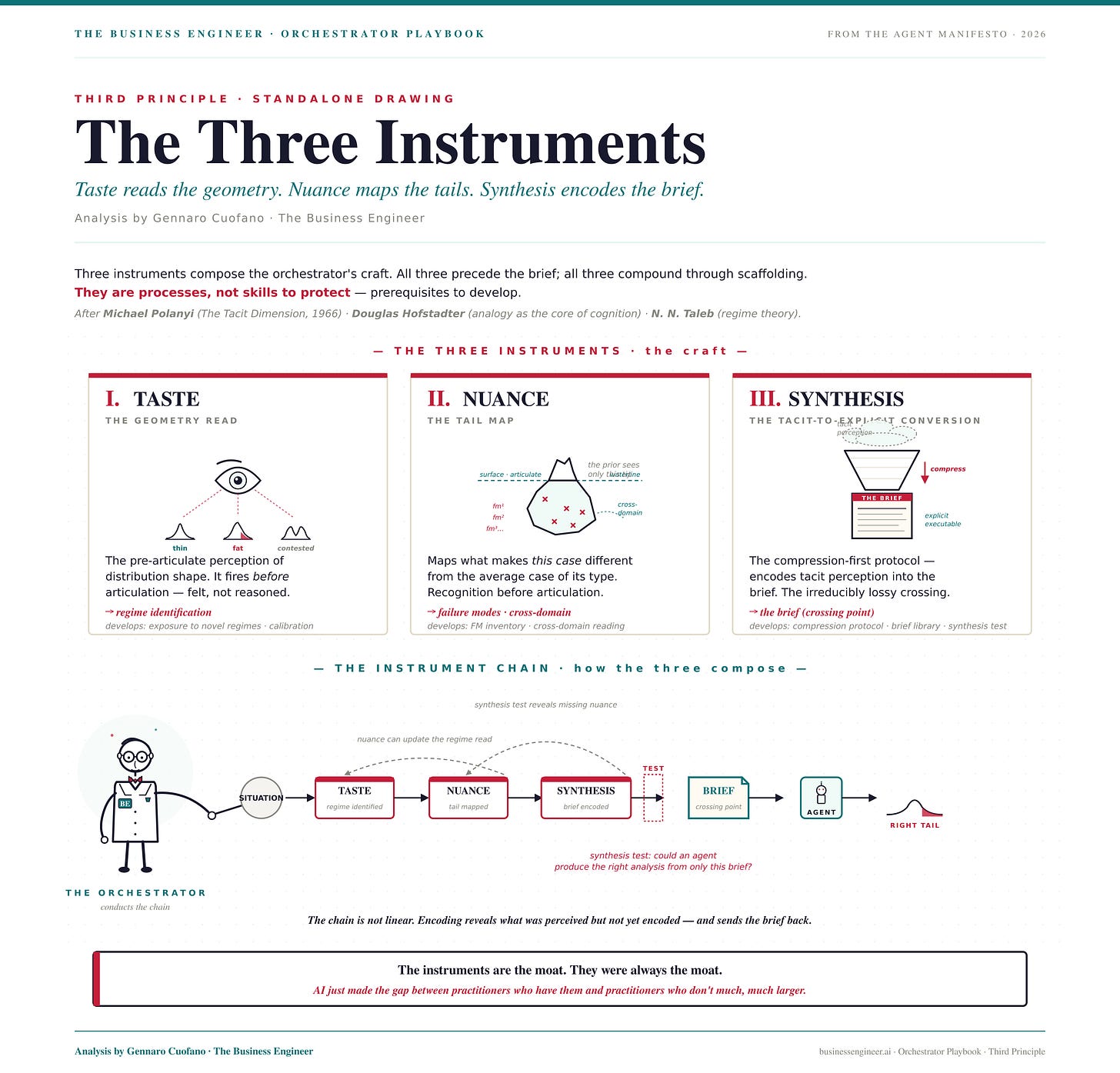

The Three Instruments (what you bring from inside)

I. DIRECTION — the encoded frame

Direction is the product of taste’s regime read and synthesis’s frame selection. It is the answer to the only question that must be answered before any brief: what regime is this problem operating in?

Familiar, novel, or contested. Mediocristan or Extremistan. Sandboxed or ambiguous. The same task in three regimes has three different right answers. If you cannot write the regime identification in one sentence, you have not yet done the distribution-reading. Stop. Think. Then brief.

Direction is the frame that tells the agent which tail it is hunting. Without it, the agent hunts the center — the only place it can find by default.

II. NUANCE — the tail map

The prior is the enemy of the specific. The training corpus averages millions of cases; yours is one. Nuance is the discipline of mapping what makes this case different from the average case of its type.

Nuance produces the failure mode inventory: the named list of plausible-but-wrong outputs the prior will generate for this specific situation. It is not paranoia. It is precision. Every failure mode you name is a tail the agent no longer wanders into. Every failure mode you omit is a tail the agent will polish to a shine before handing it back to you.

The deepest form of nuance is cross-domain structural perception — recognizing that this market behaves like a biological invasion, that this competitive dynamic has the structure of a predator-prey system, that this organizational pathology is the same pattern you saw three domains away. The recognition fires before articulation. The articulation is the lossy conversion.

III. TASTE — the geometry read

Taste is the discrimination between what is good and what is almost-good. It fires before articulation. It is the tacit, felt-sense perception that something about this framing is wrong, that this region of the analysis matters more than that one, that the standard answer is the wrong answer here.

Taste is the only instrument that survives the sandbox boundary. Inside the sandbox — inside code, mathematics, anywhere with verifiable ground truth — the environment provides the correction signal. Outside the sandbox, the correction signal is the orchestrator’s taste. There is no substitute. No prompt technique. No framework. No amount of compute.

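The sandboxed-vs-ambiguous spectrum is the axis along which taste becomes indispensable: the further a problem sits from verifiable ground truth, the more the correction signal depends on you.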

These three instruments compose the chain. Taste identifies the regime. Nuance maps the tail structure. Synthesis encodes both into the brief. The synthesis test — could an agent produce the right analysis from only this brief? — is the gate. The brief is the crossing point from tacit perception to executable instruction.
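One way to make the chain concrete is to treat the brief as a data structure with one slot per instrument. The sketch below is a hypothetical illustration, not a tool from the Playbook: the `Brief` class, its field names, and the `gaps()` check are assumptions. The check is only a mechanical proxy for the synthesis test. It can tell you a slot is empty or that the regime read runs past one sentence; it cannot tell you whether the read is right.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical brief with one slot per instrument (names are illustrative)."""
    task: str
    regime: str  # Direction: the regime identification, ideally one sentence
    failure_modes: list[str] = field(default_factory=list)  # Nuance: plausible-but-wrong outputs
    taste_notes: list[str] = field(default_factory=list)  # Taste: what "almost-good" looks like here

    def gaps(self) -> list[str]:
        """Return structural gaps that should block handing this brief to an agent.

        A mechanical proxy only: it checks that slots are filled, not that they are right.
        """
        problems = []
        # Crude sentence split: sentence-ending punctuation followed by whitespace.
        sentences = [s for s in re.split(r"[.!?]\s+", self.regime.strip()) if s]
        if not sentences:
            problems.append("missing regime identification")
        elif len(sentences) > 1:
            problems.append("regime not stated in one sentence")
        if not self.failure_modes:
            problems.append("empty failure-mode inventory")
        return problems

    def render(self) -> str:
        """Flatten the brief into plain text an agent can read."""
        lines = [f"Task: {self.task}", f"Regime: {self.regime}"]
        lines += [f"Avoid: {mode}" for mode in self.failure_modes]
        lines += [f"Quality bar: {note}" for note in self.taste_notes]
        return "\n".join(lines)


brief = Brief(
    task="Estimate demand for a new product category",
    regime="Extremistan: a novel category where a few outcomes dominate.",
    failure_modes=["Top-down market size copied from a mature adjacent category"],
)
print(brief.gaps())  # []
print(Brief(task="Same task", regime="").gaps())
# ['missing regime identification', 'empty failure-mode inventory']
```

The design choice is to make an unfilled instrument visible before the loop starts, which mirrors the rule that distribution-reading happens before the brief, not during the run.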

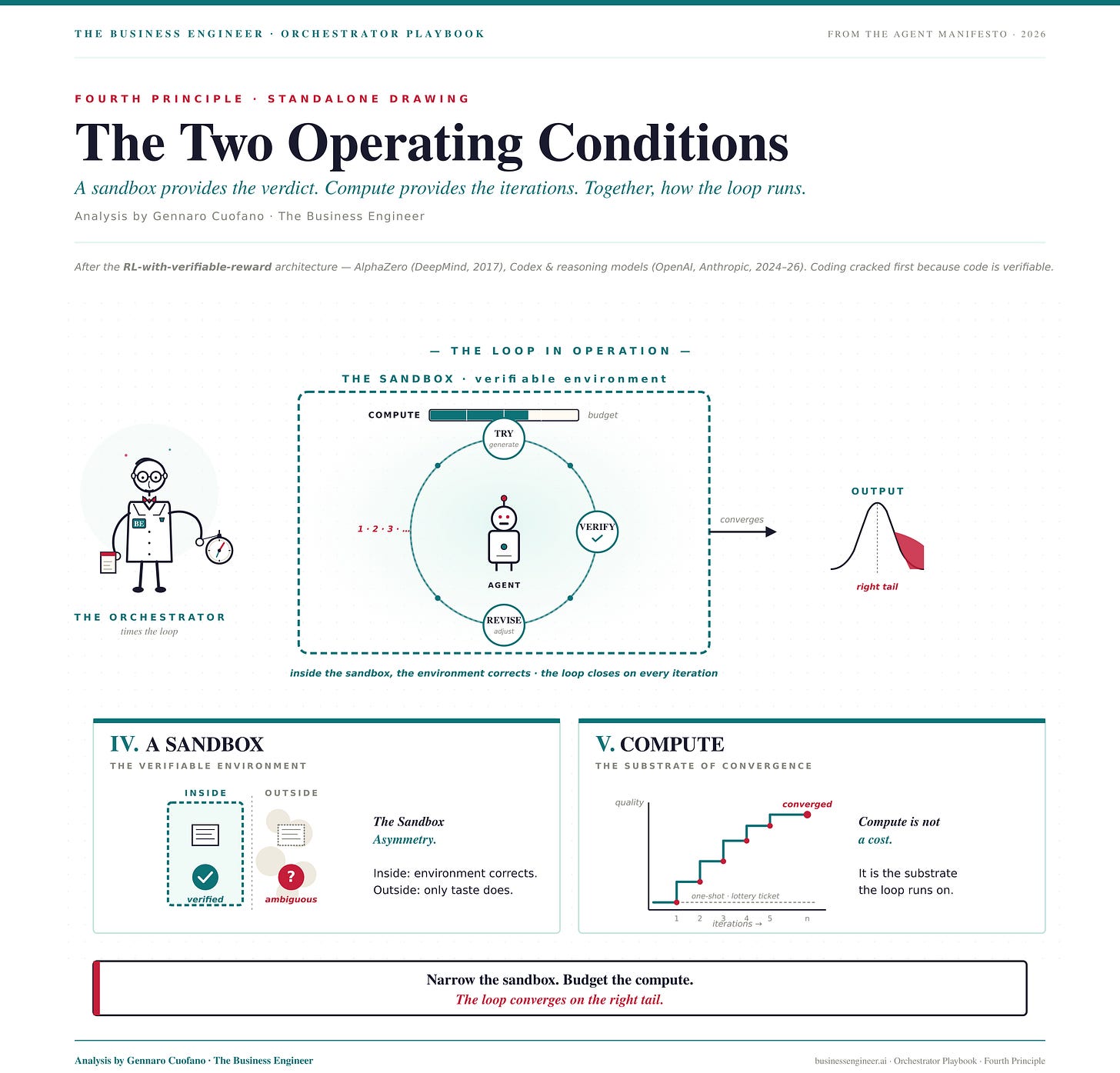

The Two Operating Conditions (how the loop runs)

IV. A SANDBOX — the verifiable environment

Wherever you can define a ground truth, build one. Code compiles or it does not. Math checks or it does not. A benchmark passes or it does not. The sandbox is the environment that corrects the agent without you having to.

The Sandbox Asymmetry: inside the sandbox, every iteration ends in a verdict. The loop converges quickly. Outside the sandbox, every iteration ends in another analysis. The loop diverges unless taste is the verdict.

This is why coding cracked first. Not because code is the most important domain, but because code is the most verifiable one. Reinforcement learning with verifiable rewards (RLVR) is the mechanism. The sandbox is what makes the mechanism work.

The agent does not need freedom. It needs a wall to press against. The narrower the sandbox, the faster the loop converges. The wider the sandbox, the more taste you owe it.

V. COMPUTE — the substrate of convergence

Iteration is the medium. A one-shot answer is a lottery ticket. An agent given a budget to try, verify, revise, and compress reaches a different part of the distribution than an agent given one pass through the problem.

Compute is not a cost. It is the substrate the loop runs on. Without it, the agentic architecture degrades back into the completion architecture — a single pass, a single guess, a single expected value around the consensus center.

With it, and with the other four provisions properly packed, the loop amplifies the right things. The brief’s direction narrows the search. Nuance’s tail map catches the failure modes. Taste’s discrimination rejects the almost-good. The sandbox verifies. Compute iterates until convergence.
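The loop described here (propose, verify, revise, repeat until a verdict or until the budget runs out) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: `run_loop`, `toy_propose`, and `toy_verify` are hypothetical names, and the toy problem (find an integer whose square is 49) stands in for a sandbox with checkable ground truth. Outside a sandbox, `verify` is where the orchestrator's taste would sit, and no function supplies that.

```python
from typing import Callable, Optional


def run_loop(
    propose: Callable[[str, list[str]], str],
    verify: Callable[[str], Optional[str]],
    brief: str,
    budget: int,
) -> tuple[Optional[str], list[str]]:
    """Propose, verify, and revise until a candidate is accepted or the budget is spent.

    `verify` returns None to accept a candidate, or a short reason to reject it.
    Rejection reasons are fed back into the next proposal.
    """
    feedback: list[str] = []
    for _ in range(budget):
        candidate = propose(brief, feedback)
        reason = verify(candidate)
        if reason is None:
            return candidate, feedback  # verdict reached
        feedback.append(reason)
    return None, feedback  # budget exhausted without a verdict


# Hypothetical toy sandbox: ground truth is checkable, so every pass ends in a verdict.
def toy_propose(brief: str, feedback: list[str]) -> str:
    # A stand-in "agent" that moves to the next guess after each rejection.
    return str(5 + len(feedback))


def toy_verify(candidate: str) -> Optional[str]:
    return None if int(candidate) ** 2 == 49 else f"{candidate}^2 != 49"


print(run_loop(toy_propose, toy_verify, "find x with x^2 = 49", budget=1))
# (None, ['5^2 != 49'])
print(run_loop(toy_propose, toy_verify, "find x with x^2 = 49", budget=5))
# ('7', ['5^2 != 49', '6^2 != 49'])
```

With `budget=1` the loop degrades into the completion architecture: one pass, one guess. With a larger budget the same toy agent reaches the verified answer. Rejection reasons flow back into the next proposal, which is where a failure-mode inventory would plug in.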

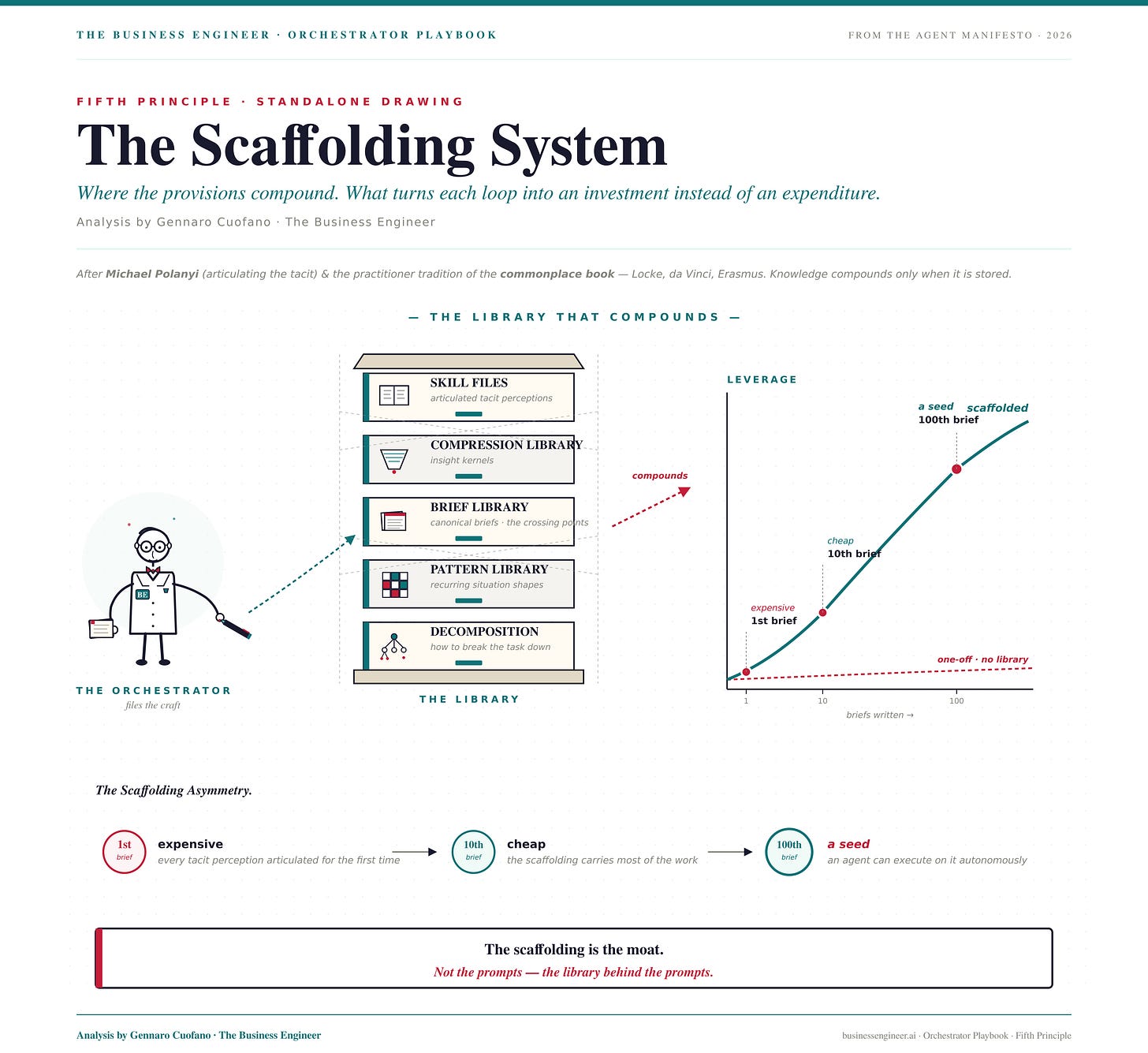

The Scaffolding System

The provisions are not single-use. They compound.

Every correctly identified regime goes into the pattern library. Every failure mode you catch goes into the failure-mode inventory. Every compression that survives the synthesis test goes into the brief library. Every tacit perception that finally got articulated goes into the skill file.

The Scaffolding Asymmetry: the first brief you write for a domain is expensive. The tenth is cheap. The hundredth is a seed the agent can execute on. The instruments, stored as scaffolding, become a persistent conditioning surface. They turn each agentic loop into a compounding investment rather than a one-off expenditure.
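A minimal sketch of the scaffolding idea, assuming a simple in-memory store keyed by domain: each loop records the regime read and any failure modes it caught, and the next brief for that domain starts from that seed instead of from zero. `ScaffoldLibrary`, `record`, and `seed` are hypothetical names. A real library would persist to files, such as the skill files described above, rather than live in memory.

```python
from collections import defaultdict
from typing import Optional


class ScaffoldLibrary:
    """Hypothetical in-memory scaffolding: regimes and failure modes accumulated per domain."""

    def __init__(self) -> None:
        self.regimes: dict[str, str] = {}
        self.failure_modes: dict[str, list[str]] = defaultdict(list)

    def record(self, domain: str, regime: Optional[str] = None,
               failure_mode: Optional[str] = None) -> None:
        """Store what one loop learned; duplicate failure modes are ignored."""
        if regime:
            self.regimes[domain] = regime  # the latest regime read wins
        if failure_mode and failure_mode not in self.failure_modes[domain]:
            self.failure_modes[domain].append(failure_mode)

    def seed(self, domain: str) -> dict:
        """Starting point for the next brief in this domain (empty if the domain is new)."""
        return {
            "regime": self.regimes.get(domain, ""),
            "failure_modes": list(self.failure_modes.get(domain, [])),
        }


library = ScaffoldLibrary()
library.record("pricing", regime="Contested: incumbents and entrants price on different logics.")
library.record("pricing", failure_mode="Cost-plus anchoring")
library.record("pricing", failure_mode="Cost-plus anchoring")  # already known, ignored
print(library.seed("pricing"))
print(library.seed("new-domain"))  # {'regime': '', 'failure_modes': []}
```

The first brief in a domain starts from an empty seed; later briefs inherit everything earlier loops caught. That is the asymmetry in miniature.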

The scaffolding is the moat. Not the prompts. The library behind the prompts.

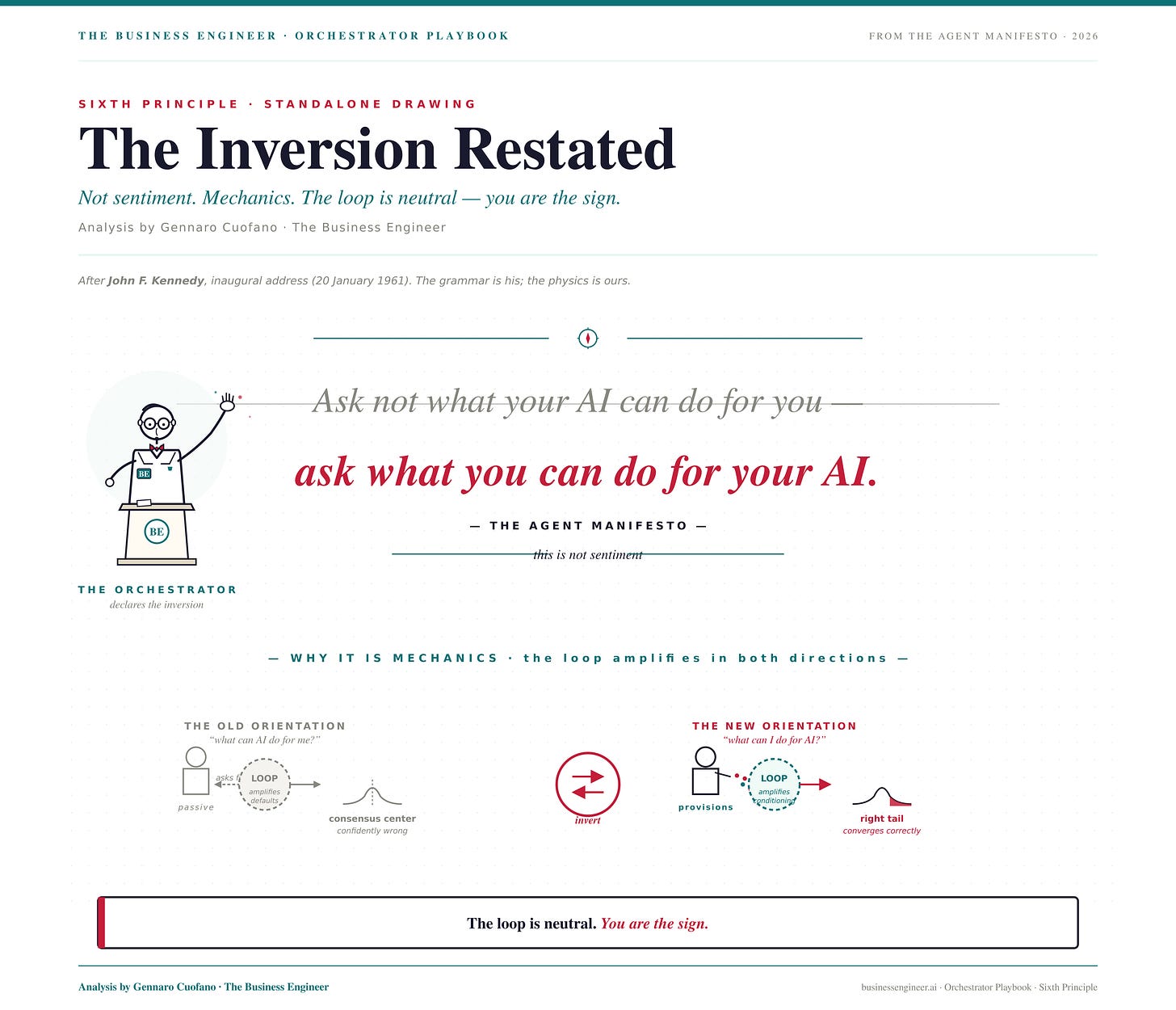

The Inversion Restated

Ask not what your AI can do for you — ask what you can do for your AI.

This is not sentiment. It is mechanics.

The agentic loop amplifies initial conditioning in both directions. Correct conditioning converges toward the right tail with each iteration. Absent or wrong conditioning drifts toward the consensus center, building more elaborate, more internally consistent, more confidently wrong analysis with every pass. The loop is neutral. You are the sign.

What you put in is not instruction. It is a substrate.

Five provisions in, right-tail output. Five provisions missing, consensus-center output: polished, confident, and wrong. The architecture is the same. The outcome inverts.

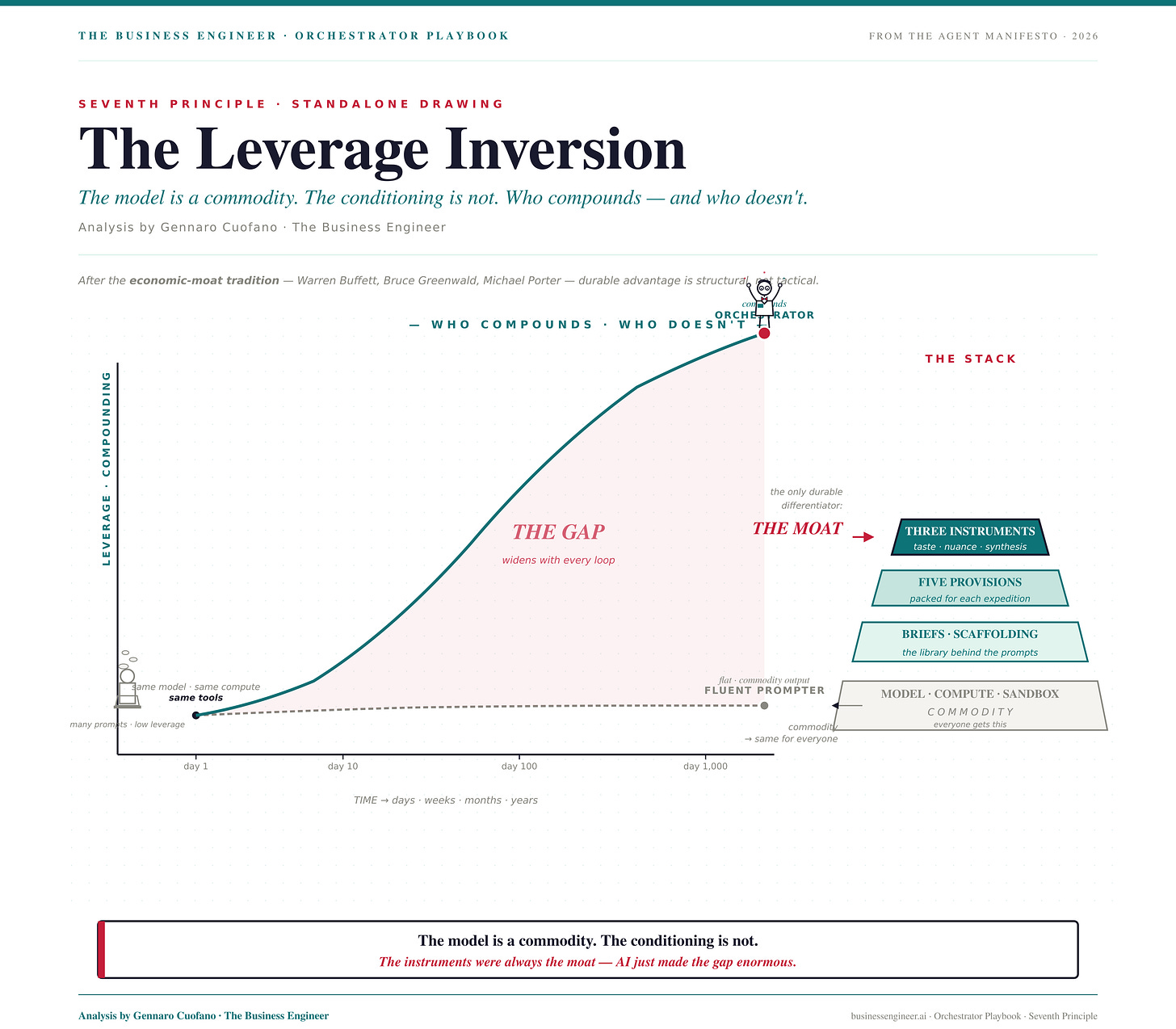

The Leverage Inversion

The practitioners who compound in the agentic era are not the ones who prompt most fluently. They are the ones who read distributions most accurately. The instruments produce the briefs. The briefs produce the leverage. The leverage produces the moat.

This reverses the visible hierarchy. The prompt engineer who produces clever completions loses to the orchestrator who produces accurate briefs — even if the orchestrator writes less, slower, and with none of the fluency. Because the agentic loop amplifies what the brief encodes, not what the prompt performs.

If you lack taste, nuance, and synthesis, your AI is effectively better than you in your own domain: you add nothing the prior does not already supply. That is not because AI has better judgment. It is because someone else who brings the instruments to the same model will outcompete you on the same problems, using the same tools, in the same domain. The model is a commodity. The conditioning is not.

The instruments were always the moat. AI just made the gap between practitioners who have them and practitioners who don’t much, much larger.

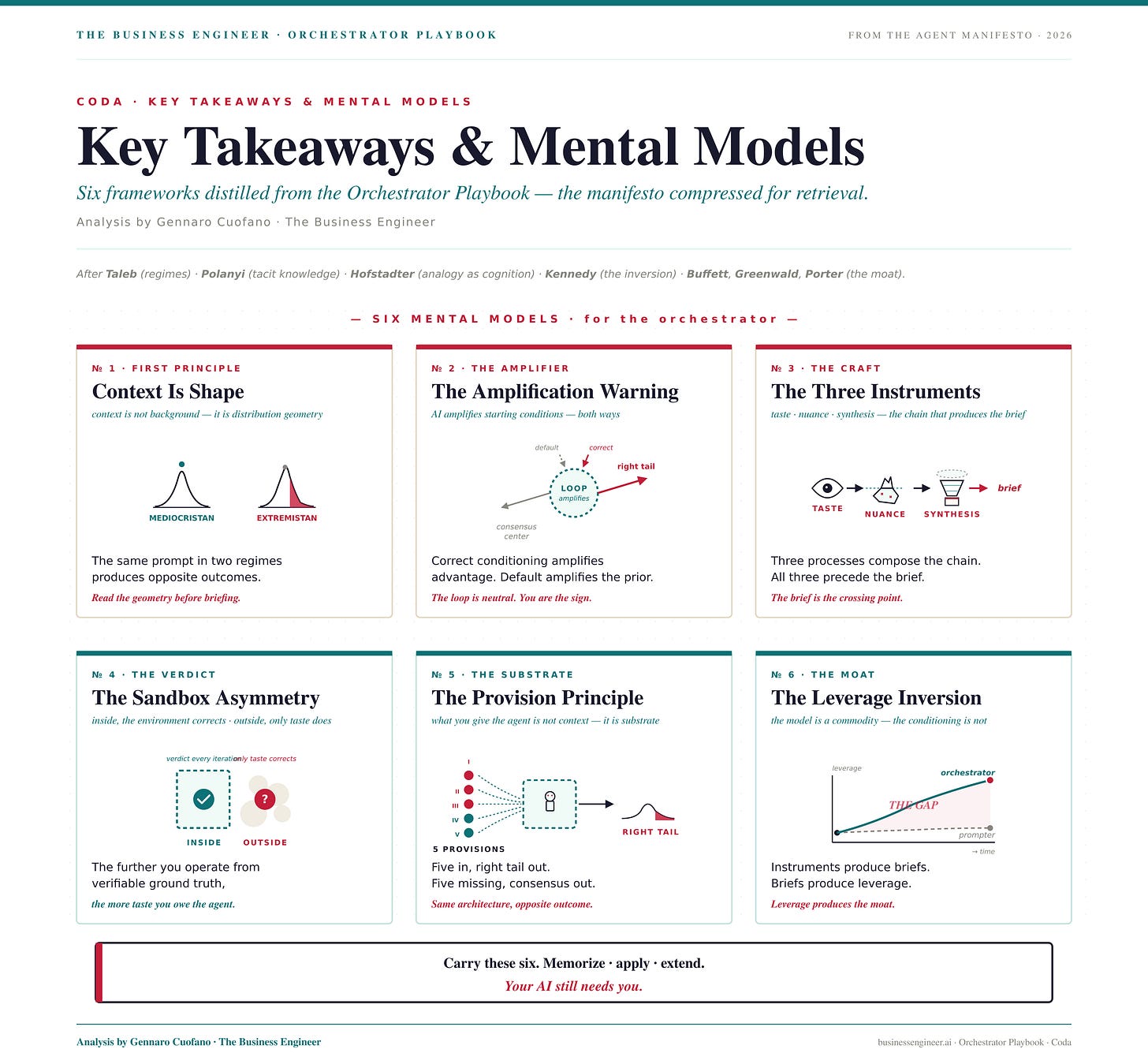

Key Takeaways and Mental Models

Context Is Shape — Context is not background information. It is the shape of the distribution the problem lives in. Read the geometry before briefing any agent.

The Amplification Warning — AI amplifies starting conditions. Correct conditioning amplifies advantage; default conditioning amplifies the prior. The loop is neutral; the sign comes from you.

The Three Instruments — Taste reads the shape. Nuance maps the tails. Synthesis encodes the brief. All three precede the brief. All three compound through the scaffolding system.

The Sandbox Asymmetry — Inside the sandbox, the environment corrects. Outside it, only taste does. The further you operate from verifiable ground truth, the more taste you owe the agent.

The Provision Principle — What you give the agent is not context; it is substrate for compounding. Five provisions packed, right tail converges. Five provisions missing, consensus center converges. Same architecture, opposite outcome.

The Leverage Inversion — The practitioners who compound in the agentic era are the ones who read distributions accurately, not the ones who prompt most fluently. The instruments produce the briefs. The briefs produce the leverage. The leverage produces the moat.

Recap: In This Issue!

AI is not a tool. It is a compounding loop.

The output quality is not determined by the model, but by what you feed into the loop.

The orchestrator, not the model, determines where the system converges.

The Inversion

Old model: What can AI do for me?

New model: What can I condition AI to do correctly?

Shift:

Prompting → insufficient

Orchestration → decisive

The First Principle: Context Is Shape

Context is not information. It is distribution geometry.

Two regimes:

Mediocristan (stable, average works)

Extremistan (fat tails, average is wrong)

Implication:

Same prompt → correct in one regime, wrong in another

AI defaults to the center of distribution

The Amplification Law

AI does not correct errors

AI amplifies starting conditions

If input is:

well-framed → advantage compounds

default assumptions → error compounds

The Five Provisions (What Determines Output)

The Three Internal Instruments

1. Direction (Regime Identification)

Define the problem environment:

familiar vs novel vs contested

thin-tail vs fat-tail

Function:

Tells the model which part of the distribution to search

2. Nuance (Failure Map)

Identify plausible but wrong outputs

Map where the prior will fail

Function:

Prevents the model from converging on polished errors

3. Taste (Judgment Layer)

Detect what is:

wrong

almost right

misleading

Function:

Acts as the only correction signal outside verifiable domains

The Two Operating Conditions

4. Sandbox (Verification)

Ground truth environment:

code, math, measurable outputs

Effect:

Inside sandbox → fast convergence

Outside sandbox → requires taste

5. Compute (Iteration)

Iteration enables:

refinement

error correction

convergence

Insight:

One-shot outputs = guess

Iterative loops = search + convergence

The System Behavior

With provisions → converges to right tail (high-value answers)

Without provisions → converges to consensus center (average answers)

Same architecture. Opposite outcomes.

The Scaffolding System

Knowledge compounds through:

pattern libraries

failure-mode inventories

reusable briefs

Asymmetry:

First attempt = expensive

Later attempts = progressively cheaper

Moat:

Not prompts

Accumulated conditioning

The Leverage Inversion

Winners are not:

best prompt writers

Winners are:

best distribution readers

New hierarchy:

Taste → Nuance → Synthesis → Prompt

Strategic Implications

Models are commoditized

Conditioning is not

Meaning:

Same model

Different outcomes

Based entirely on orchestration quality

Mental Models

Context Is Shape → identify distribution before acting

Amplification Law → AI scales your assumptions

Three Instruments → taste, nuance, direction drive outcomes

Sandbox Asymmetry → verification vs judgment domains

Provision Principle → inputs are compounding substrate

Leverage Inversion → thinking quality > prompting skill

Strategic Compression

Looks like: prompting skill matters

Reality: conditioning system matters

AI does not create leverage by itself.

It amplifies the leverage embedded in how you think.

With massive ♥️ Gennaro Cuofano, The Business Engineer