The AI Orchestrator Playbook

There is no shortage of content about AI. There is an acute shortage of content that explains, at the level of mechanism, what the human’s job actually is when AI does the execution, and why that job is irreplaceable: not as a temporary limitation of current technology, but as a permanent structural feature of how intelligence works.

That job is orchestration. Not management, not supervision, not prompt engineering. Orchestration — in the precise sense that a conductor orchestrates an ensemble. The conductor does not play every instrument. They carry the interpretive judgment that determines what the ensemble produces. The musicians know their parts. The conductor knows how the whole should sound, which is knowledge of a completely different kind.

In the agentic economy, that is what the high-performing knowledge professional does. They shape what the agents produce. And the quality of what the agents produce is, structurally and permanently, a function of the quality of the orchestration.

The playbook is free for founding members. Reply to this to get access!

The Executive Plan now includes the Claude OS Skill embedded!

Part I · The Technical Foundation

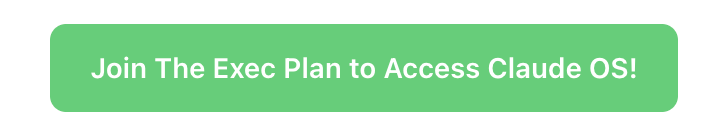

A language model is an approximator of conditional probability distributions trained on human-generated text. At inference time it samples from a learned distribution conditioned on the prompt. The prior — the model’s default distribution — is dense at the consensus center and thin at the non-consensus tails. This is not a flaw. It reflects the actual distribution of written human knowledge. The problem arises when the insight you need is in the tail, not the center.

The prompt is not an instruction to a deterministic system. It is a conditioning signal that shifts the probability distribution — narrowing it, focusing it, suppressing certain regions, amplifying others. The failure modes field of a brief, which most practitioners leave empty, is the most powerful conditioning element: it explicitly marks the high-density wrong region and directs the model away from it.
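To make the conditioning idea concrete, here is a minimal toy sketch. It is not how any real model works internally: the three answer "regions", their scores, and the `condition` helper are all hypothetical, chosen only to show how suppressing named failure modes moves probability mass toward the tail.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {k: math.exp(v - peak) for k, v in logits.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}

# Hypothetical prior scores over three kinds of answer (illustrative numbers only).
prior_logits = {
    "consensus answer": 3.0,
    "plausible-but-wrong answer": 2.0,
    "tail insight": 0.0,
}

def condition(logits, suppress=(), amplify=(), strength=4.0):
    """Model a prompt as additive shifts: named failure modes are pushed down,
    regions the brief points at are pushed up. An assumption, not a real API."""
    shifted = dict(logits)
    for region in suppress:
        shifted[region] -= strength
    for region in amplify:
        shifted[region] += strength
    return shifted

prior = softmax(prior_logits)
conditioned = softmax(condition(
    prior_logits,
    suppress=["consensus answer", "plausible-but-wrong answer"],
    amplify=["tail insight"],
))

for region in prior_logits:
    print(f"{region:28s} prior={prior[region]:.2f} conditioned={conditioned[region]:.2f}")
```

In this toy, the tail insight starts with only a few percent of the probability mass and ends up holding most of it once the wrong regions are marked. The numbers are arbitrary; what the sketch shows is the direction of the shift.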

Business domains are Extremistan — Taleb’s term for domains governed by power-law, fat-tailed distributions where a single observation can dominate the dataset and the average is not predictive. AI priors are calibrated to Mediocristan — domains where the average is predictive and outliers are bounded. In Extremistan domains, a model optimized for average performance is systematically wrong in the direction of underestimating the tail events that actually dominate outcomes.
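A small numerical sketch can show why the average stops being predictive in a fat-tailed domain. The two distributions below are stand-ins chosen for illustration, not data from any real business domain: a normal distribution for Mediocristan and a Pareto distribution with tail index 1.1 for Extremistan. To keep the result reproducible, the sketch evaluates each inverse CDF on an even grid instead of drawing random samples.

```python
from statistics import NormalDist, mean, median

def quantile_grid(inverse_cdf, n=10_000):
    """Deterministic stand-in for sampling: evaluate the inverse CDF at n evenly spaced midpoints."""
    return [inverse_cdf((i + 0.5) / n) for i in range(n)]

def top_share(values, fraction=0.01):
    """Share of the total contributed by the largest `fraction` of observations."""
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * fraction))
    return sum(ordered[:k]) / sum(ordered)

# Mediocristan stand-in: normal distribution, mean 100 and sd 15 (illustrative parameters).
thin = quantile_grid(NormalDist(mu=100, sigma=15).inv_cdf)

# Extremistan stand-in: Pareto distribution with tail index 1.1 (illustrative parameter).
ALPHA = 1.1
fat = quantile_grid(lambda u: (1.0 - u) ** (-1.0 / ALPHA))

for name, values in (("thin-tailed", thin), ("fat-tailed", fat)):
    print(f"{name}: mean/median={mean(values) / median(values):.2f}, "
          f"top 1% share={top_share(values):.1%}")
```

In the thin-tailed case the mean and median coincide and the top 1% of observations contribute a small share of the total. In the fat-tailed case the mean sits well above the typical observation and a handful of observations account for a large share of the total, which is the sense in which a model tuned to the average misses what drives the outcome.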

The agentic loop compounds initial conditioning in both directions:

Correct conditioning converges toward the right tail with each iteration

Absent or wrong conditioning diverges toward the consensus center — building more elaborate, more internally consistent, more confidently wrong analysis with every loop

In sandboxed domains like code and mathematics, the environment provides a ground-truth correction signal at every step. Outside sandboxed domains, the correction signal is the orchestrator’s situated judgment.
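The two directions of compounding described above can be sketched with a deliberately simple toy model. Everything here is an assumption made for illustration: the one-dimensional "position" of an analysis, the coordinates of the consensus center and the tail, and the rule that each iteration closes a fixed fraction of the gap to whatever the conditioning points at.

```python
def run_loop(start, attractor, pull=0.3, steps=10):
    """Each iteration closes a fixed fraction of the gap to the point the conditioning aims at."""
    position = start
    path = [position]
    for _ in range(steps):
        position += pull * (attractor - position)
        path.append(position)
    return path

CONSENSUS_CENTER = 0.0   # where the prior is dense (hypothetical coordinate)
TAIL_TARGET = 10.0       # where the needed insight sits (hypothetical coordinate)
START = 3.0              # first-pass output, somewhere in between

conditioned = run_loop(START, TAIL_TARGET)         # brief points at the tail
unconditioned = run_loop(START, CONSENSUS_CENTER)  # no brief, the prior wins

for step in (0, 1, 5, 10):
    print(f"step {step:2d}: conditioned={conditioned[step]:5.2f} "
          f"unconditioned={unconditioned[step]:5.2f}")
```

Both runs start from the same first-pass output and use the same loop. Only the initial conditioning differs, and after a handful of iterations the two paths sit at opposite ends of the range. The toy models only the direction of drift, not the growing elaborateness or confidence of the wrong analysis.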

Von Neumann, Turing, Shannon, Simon, Wiener, Rosenblatt, Minsky, and McCarthy all identified, from different starting points, the same structural gap: between the statistical regularities of articulated human knowledge and the situated, pre-articulate, cross-domain intelligence that navigates the real world. That gap was named in 1948. It has not been closed.

Polanyi’s formulation is still the sharpest: “We can know more than we can tell.” The training corpus is the articulated surface of human knowledge. The distribution shape of any real domain lives mostly below it, in situated, tacit, pre-linguistic knowing that was never written down. The model learns from the surface. The orchestrator operates from below it.

Part II · The Orchestrator’s Craft

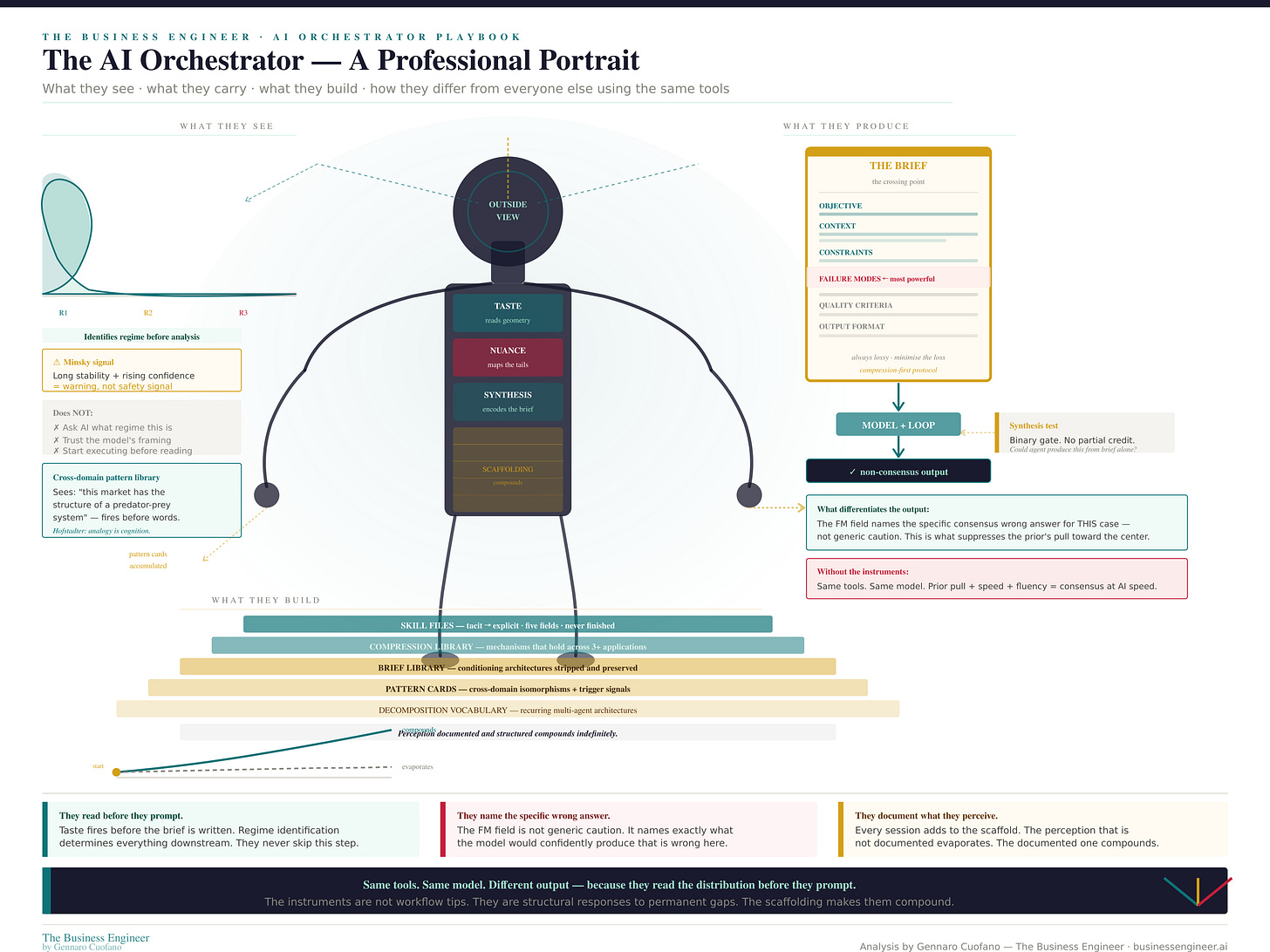

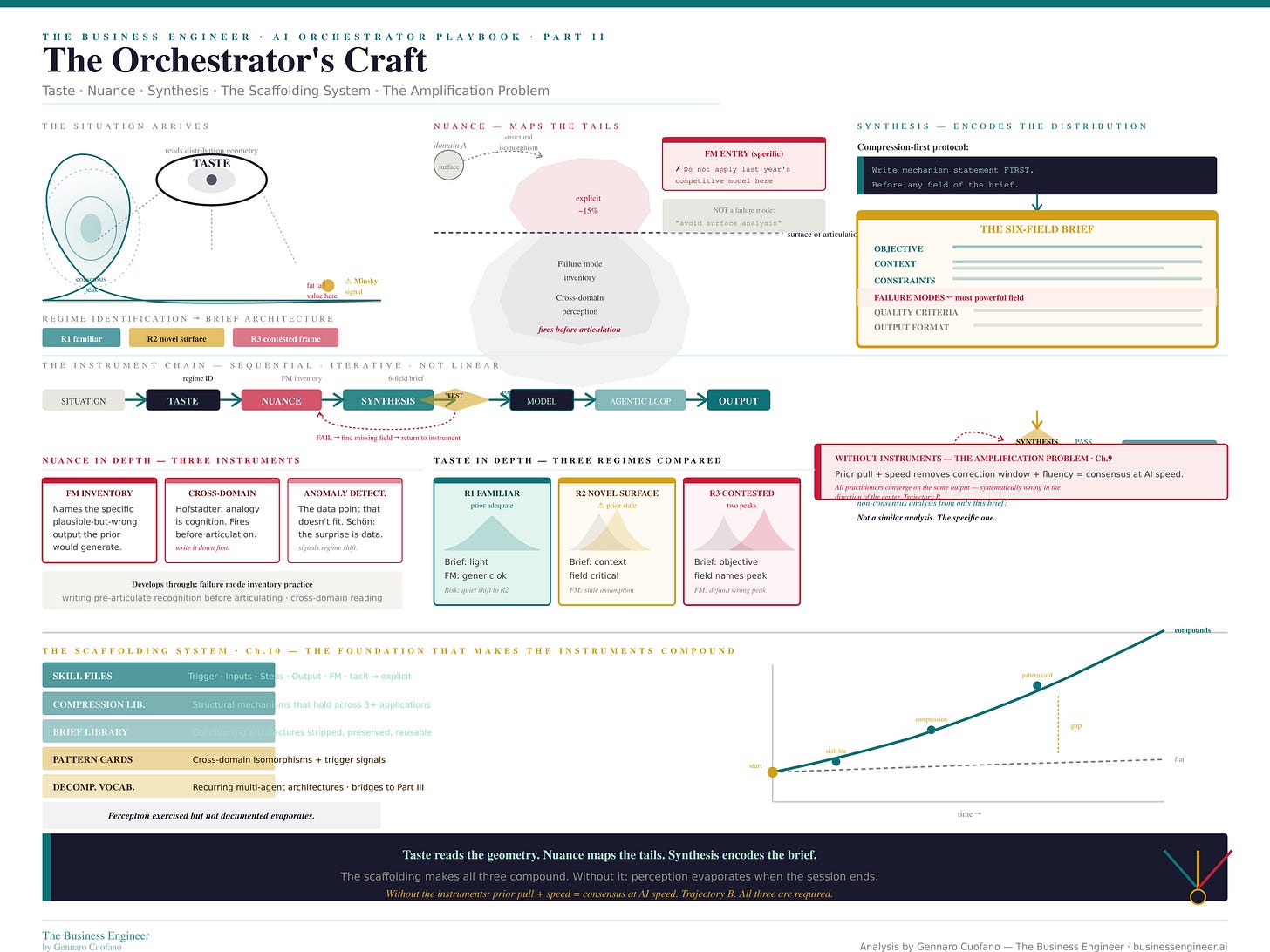

The three instruments are structural responses to three permanent gaps.

Taste — reading the geometry

Taste addresses the gap between the prior’s Mediocristan calibration and Extremistan domains. It is the capacity to read distribution geometry before analysis begins — to perceive, quickly and accurately, whether this situation’s distribution is familiar or novel, single-peaked or multimodal, stationary or shifting. Taste is not preference. It is pattern recognition calibrated through exposure to many different distributions, including tail events and regime transitions.

Three regime classes cover most of professional knowledge work:

Regime 1 — Familiar: prior adequate, brief can be light

Regime 2 — Novel surface: domain has shifted, prior is stale, brief must encode what changed

Regime 3 — Contested frame: multimodal distribution, synthesis must choose the correct peak before anything else

Wrong regime identification is not a small error. It propagates through everything downstream. Taste is the prerequisite.
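The three regimes and what each demands of the brief can be written down as a small lookup. The `classify` function below is a hypothetical two-question triage added for illustration; it is not part of the playbook, and the real judgment is taste, which a lookup cannot replace.

```python
from enum import Enum

class Regime(Enum):
    FAMILIAR = 1
    NOVEL_SURFACE = 2
    CONTESTED_FRAME = 3

# What each regime demands of the brief, paraphrased from the list above.
BRIEF_REQUIREMENT = {
    Regime.FAMILIAR: "Prior is adequate; a light brief is enough.",
    Regime.NOVEL_SURFACE: "Prior is stale; the brief must encode what changed.",
    Regime.CONTESTED_FRAME: "Distribution is multimodal; choose the correct peak before anything else.",
}

def classify(prior_is_current: bool, single_frame: bool) -> Regime:
    """Hypothetical triage: two yes/no answers supplied by the orchestrator's own reading."""
    if not single_frame:
        return Regime.CONTESTED_FRAME
    if not prior_is_current:
        return Regime.NOVEL_SURFACE
    return Regime.FAMILIAR

regime = classify(prior_is_current=False, single_frame=True)
print(regime.name, "->", BRIEF_REQUIREMENT[regime])
```

The inputs to `classify` are the hard part. The code only records the consequence of a regime call, which is why a wrong call propagates through everything built on it.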

Nuance — mapping the tails

Nuance addresses the gap between what the training corpus contains and the tacit, cross-domain, pre-articulate knowledge that constitutes professional expertise. It maps the specific tail structure of this case — not just acknowledging that tails exist but identifying where the value is relative to where the prior would look.

The deepest form of nuance is cross-domain structural perception: recognizing that this market has the same underlying mechanism as a biological invasion, or this competitive dynamic has the same structure as a predator-prey system. The recognition fires before articulation. Hofstadter’s insight is that analogy is not a special cognitive capacity — it is the fundamental mechanism of cognition.

Nuance produces the failure mode inventory — the named list of specific plausible-but-wrong outputs the prior would generate for this case. The distinction matters:

✗ “Avoid surface analysis” — not a failure mode

✓ “Do not produce TAM analysis — this case turns on whether this company’s sales motion can reach segment buyers at all” — a failure mode
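One way to keep a failure mode inventory honest is to give each entry a shape that a generic warning cannot fill. The sketch below is an assumption about how that might look, not a format the playbook prescribes: the `FailureMode` class and its `avoid` and `because` fields are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FailureMode:
    avoid: str      # the specific output the prior would produce
    because: str    # the case-specific reason that output is wrong here

    def __post_init__(self):
        # A warning with no case-specific reason is treated as generic and rejected.
        if not self.avoid.strip() or not self.because.strip():
            raise ValueError("A failure mode needs both the output to avoid and the case-specific reason.")

    def render(self) -> str:
        return f"Do not produce {self.avoid}: {self.because}."

specific = FailureMode(
    avoid="TAM analysis",
    because="this case turns on whether this company's sales motion can reach segment buyers at all",
)
print(specific.render())

try:
    FailureMode(avoid="surface analysis", because="")
except ValueError as error:
    print(f"Rejected: {error}")
```

The check is crude, since a non-empty reason can still be generic. It only enforces the minimum structural difference between the two examples above: the second names what to avoid and why that is wrong in this case.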

Synthesis — encoding the distribution into the brief

Synthesis addresses the gap between perception and encoding. It is the tacit-to-explicit conversion discipline — taking what has been perceived through taste and nuance and making it as precise, executable, and conditioning-rich as possible, knowing the translation is irreducibly lossy.

The compression-first protocol is the core discipline: write the mechanism statement before writing any field of the brief. The compression tests whether synthesis has actually occurred. If it cannot be distinguished from the standard thing to say about this type of situation, the synthesis has not captured anything the prior would not have provided.

The synthesis test completes the chain: could an agent produce the correct non-consensus analysis from only this brief? Not a similar analysis. The specific analysis. If no — synthesis is incomplete.
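The compression-first protocol can be sketched as a brief that refuses to exist without its mechanism statement. This is a minimal illustration under stated assumptions: the `Brief` class, its `task` field, and the rendered layout are hypothetical, and the example mechanism is built from the TAM example earlier in this issue.

```python
from dataclasses import dataclass, field

@dataclass
class Brief:
    mechanism: str                                      # compressed mechanism statement, written first
    task: str = ""                                      # hypothetical field
    failure_modes: list = field(default_factory=list)   # named plausible-but-wrong outputs

    def __post_init__(self):
        # Compression first: no other field counts until the mechanism is stated.
        if not self.mechanism.strip():
            raise ValueError("Write the mechanism statement before any other field.")

    def render(self) -> str:
        lines = [f"MECHANISM: {self.mechanism}", f"TASK: {self.task}", "FAILURE MODES:"]
        lines += [f"- {mode}" for mode in self.failure_modes]
        return "\n".join(lines)

brief = Brief(
    mechanism="Growth is limited by whether the sales motion can reach segment buyers, not by market size.",
    task="Assess whether the current sales motion can reach the target segment.",
    failure_modes=["Do not produce TAM analysis; distribution access is the constraint."],
)
print(brief.render())
```

The code can enforce that a mechanism statement is present. It cannot judge whether that statement differs from the standard thing to say, and it cannot run the synthesis test; both remain the orchestrator's call.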

The scaffolding system

The five-component scaffolding system converts instrument exercise into a compounding capability asset. Perception that is exercised but not documented evaporates. Perception that is documented and structured compounds indefinitely.

The compound moat is not metaphorical. Three years of accumulated scaffolding cannot be quickly replicated, even by competitors with better individual orchestrators.

The amplification problem

Without the three instruments, AI amplifies the wrong signal. The prior’s pull toward the consensus center, AI’s removal of the natural correction window, and a fluency that makes wrong output indistinguishable from right output combine to produce consensus at AI speed.

The practitioners who adopt AI without developing orchestration capability all produce the same output — the consensus answer, at high volume, with professional formatting. In Extremistan domains, they are all systematically wrong in the same direction.

The orchestrator who develops the instruments produces differentiated output that accurately reflects the specific tail structures of specific situations. The competitive gap is not linear. It is the gap between the analysis that misses the dominant outcome and the analysis that names it.

Part III · Organizational Architecture

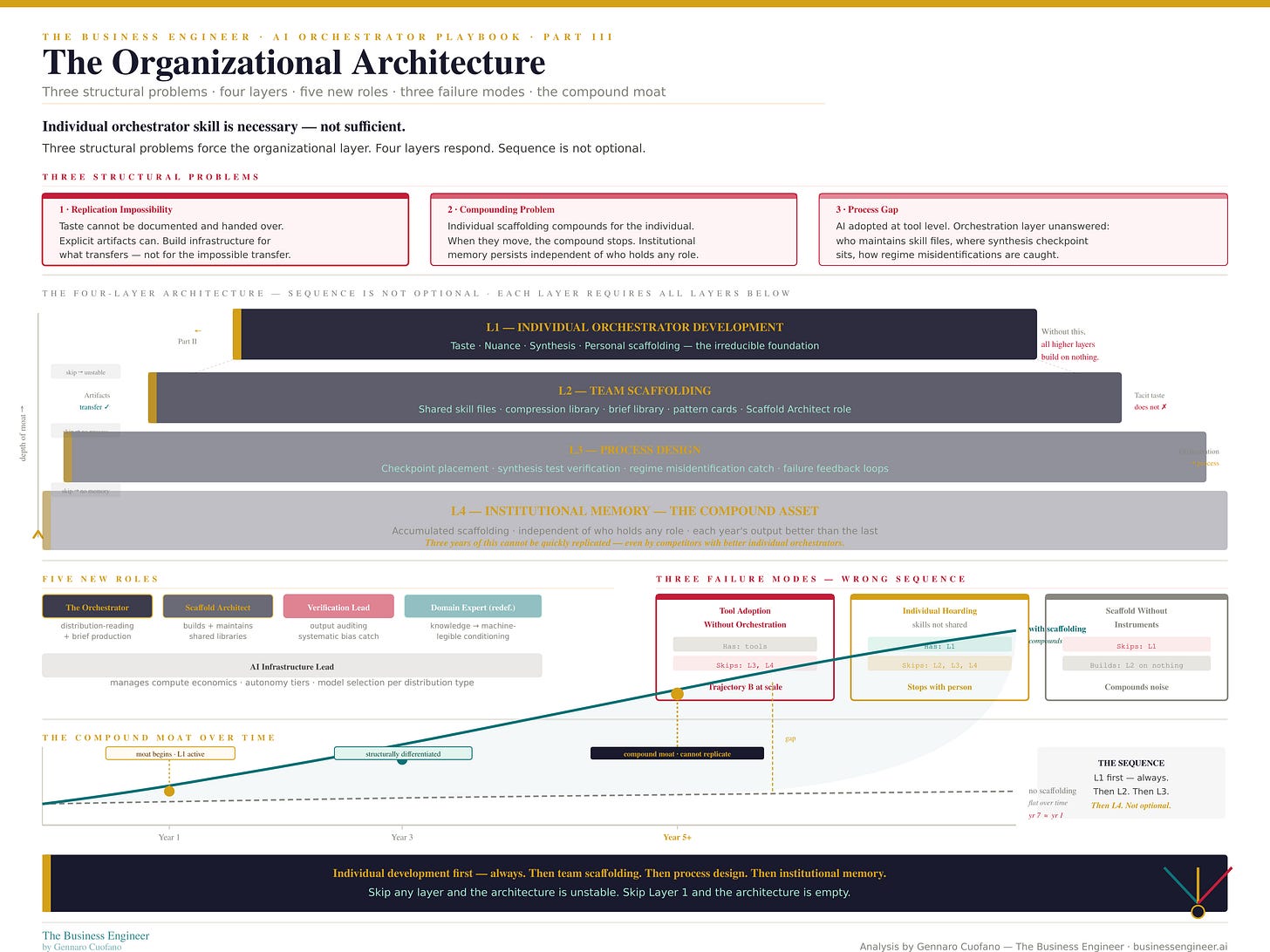

Individual orchestrator skill is necessary but not sufficient. Three structural problems force the organizational layer:

1 · The replication impossibility. Taste cannot be documented and handed over. The individual orchestrator’s regime-reading capacity, built through years of situated exposure to tail events and transitions, cannot be transferred through instruction. What can be transferred are the explicit artifacts: skill files, pattern cards, and brief templates. The organizational response is infrastructure for transferring those explicit artifacts, not an attempt at the impossible transfer of tacit knowledge.

2 · The compounding problem. Individual scaffolding compounds for the individual. When the person moves, the compound stops. Organizational compounding requires institutional memory — a structure that persists independent of who holds which role.

3 · The process gap. Most organizations adopt AI at the tool level. The orchestration-layer questions go unanswered: who maintains the skill file library, where the synthesis checkpoint sits in the workflow, and how regime misidentifications are caught before the agentic loop runs at scale. This gap produces organizational Trajectory B: consensus at AI speed, at organizational scale.

The four-layer architecture

The structural response is a four-layer architecture in fixed sequence:

1 · Individual orchestrator development: taste, nuance, synthesis, personal scaffolding. The irreducible foundation. Without it, higher layers have nothing to build on.

2 · Team scaffolding: shared skill files, shared compression and brief libraries, shared pattern cards. Requires a Scaffold Architect role.

3 · Process design: checkpoint placement, synthesis test verification, regime misidentification catch, failure feedback loops. Orchestration becomes process rather than talent.

4 · Institutional memory: accumulated scaffolding that makes each year’s output better than the last, independent of who holds any role.
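The fixed sequence can be expressed as a simple ordering check. The sketch below is illustrative only: the function names are hypothetical, and whether a layer is really "in place" is an organizational judgment that a set membership test glosses over.

```python
# The four layers in the order given above.
LAYERS = [
    "individual orchestrator development",
    "team scaffolding",
    "process design",
    "institutional memory",
]

def next_layer(in_place):
    """Return the first layer in the sequence that is not yet in place, or None if all are."""
    for layer in LAYERS:
        if layer not in in_place:
            return layer
    return None

def has_foundation(layer, in_place):
    """A layer has something to build on only if every earlier layer is already in place."""
    return all(earlier in in_place for earlier in LAYERS[:LAYERS.index(layer)])

# An organization that bought shared tooling before developing any orchestrators.
status = {"team scaffolding"}
print("Build next:", next_layer(status))
print("Process design has a foundation:", has_foundation("process design", status))
```

In this example the next layer to build is still the first one, because shared scaffolding without individual orchestrator skill has nothing underneath it.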

The Key Insight

The orchestrator’s irreducible contribution is the definition of the distribution shape: the pre-articulate, cross-domain, fat-tail-sensitive perception that the founders of the field identified, from 1948 onward, as beyond the reach of any formal system.

The instruments make it executable. The scaffolding makes it compound. The architecture makes it institutional.

The Permanent Statement

The prior cannot assess its own calibration against the real-world distribution. The orchestrator has the outside view. This is structural — not a temporary limitation of current models. More capable models have a more accurate prior. They still have a prior.

In Extremistan domains — business, finance, strategy, medicine, law — the prior’s systematic underestimation of the tails is most consequential, because tails dominate outcomes. This is where the instruments matter most and where the gap between orchestrated and unorchestrated AI output is widest.

As models improve, the frontier moves outward. The orchestrator is always at the frontier — the edge where the prior runs out, where non-consensus is always just beyond the reach of any training corpus. Better models raise the bar. They cannot close the gap.

Von Neumann identified it in 1958. Turing in 1950. Shannon in 1948. The gap remains. The orchestrator’s work is not transitional. It is the permanent condition of intelligence navigating domains that exceed the scope of any training corpus.

This is the work.

Recap: In This Issue!

The human role is orchestration, not execution

Orchestration = shaping the model’s output distribution, not prompting it

This role is structural and permanent, not a temporary gap in AI capability

The core thesis

When AI executes, humans define how it executes.

That function is orchestration:

identifying the structure of the problem

correcting for where the model’s prior fails

encoding that into a brief the system can run

The difference is simple:

AI produces from the distribution it learned

The orchestrator reshapes the distribution it samples from

Why orchestration exists (and persists)

1. Models are centered on consensus

LLMs approximate probability distributions trained on human text:

dense at the center (consensus)

thin at the tails (non-consensus insight)

2. Real domains are tail-driven

Business, strategy, finance:

dominated by rare events

averages mislead

value sits in the tails

3. The gap is structural

Models learn from what is written.

Reality is shaped by what is often not written (tacit knowledge).

So even with better models:

the prior improves

the gap remains

The three instruments of orchestration

1. Taste (regime identification)

Recognizes the type of distribution:

familiar

shifted

contested

Wrong regime → wrong analysis.

2. Nuance (tail mapping)

Identifies where the model will fail in this case

Produces specific failure modes, not generic warnings

Example:

Not: “avoid shallow analysis”

But: “do not run TAM analysis; distribution access is the constraint”

3. Synthesis (encoding)

Converts judgment into executable structure

Core test:

Can an agent produce the correct non-consensus answer from this brief alone?

If not, synthesis failed.

The amplification problem

Without orchestration:

AI converges to consensus answers

outputs look polished

errors become harder to detect

Result:

consensus at AI speed

In tail-driven domains, this is systematically wrong.

The scaffolding moat

Orchestration compounds only if captured.

Key assets:

skill files

brief libraries

pattern cards

compression library

This turns:

one-off judgment → reusable infrastructure

Advantage becomes cumulative, not individual.

Why organizations fail

Three common failure modes:

Tool adoption only → speed without differentiation

Individual dependence → no compounding

Empty scaffolding → systematized noise

Correct sequence:

Individual skill

Shared scaffolding

Process

Institutional memory

The permanent claim

The orchestrator exists because:

Every model has a prior

Every prior is incomplete

Real domains exceed any corpus

As models improve:

The frontier moves outward

Orchestration moves with it

It does not disappear.

Mental models

The Prior Problem: models default to consensus; humans correct it

Extremistan Gap: tails dominate outcomes, models underweight them

Three Instruments: taste → nuance → synthesis

Amplification Risk: AI scales consensus unless shaped

Scaffolding Moat: captured judgment compounds

Permanent Frontier: orchestration always sits where the prior breaks

Bottom line

AI executes.

The orchestrator defines what execution should converge to.

That requires:

reading the distribution

identifying the failure zones

encoding that into the system

making it reusable

This is not prompt engineering.

It is the permanent layer where human judgment remains irreducible.

With massive ♥️ Gennaro Cuofano, The Business Engineer
