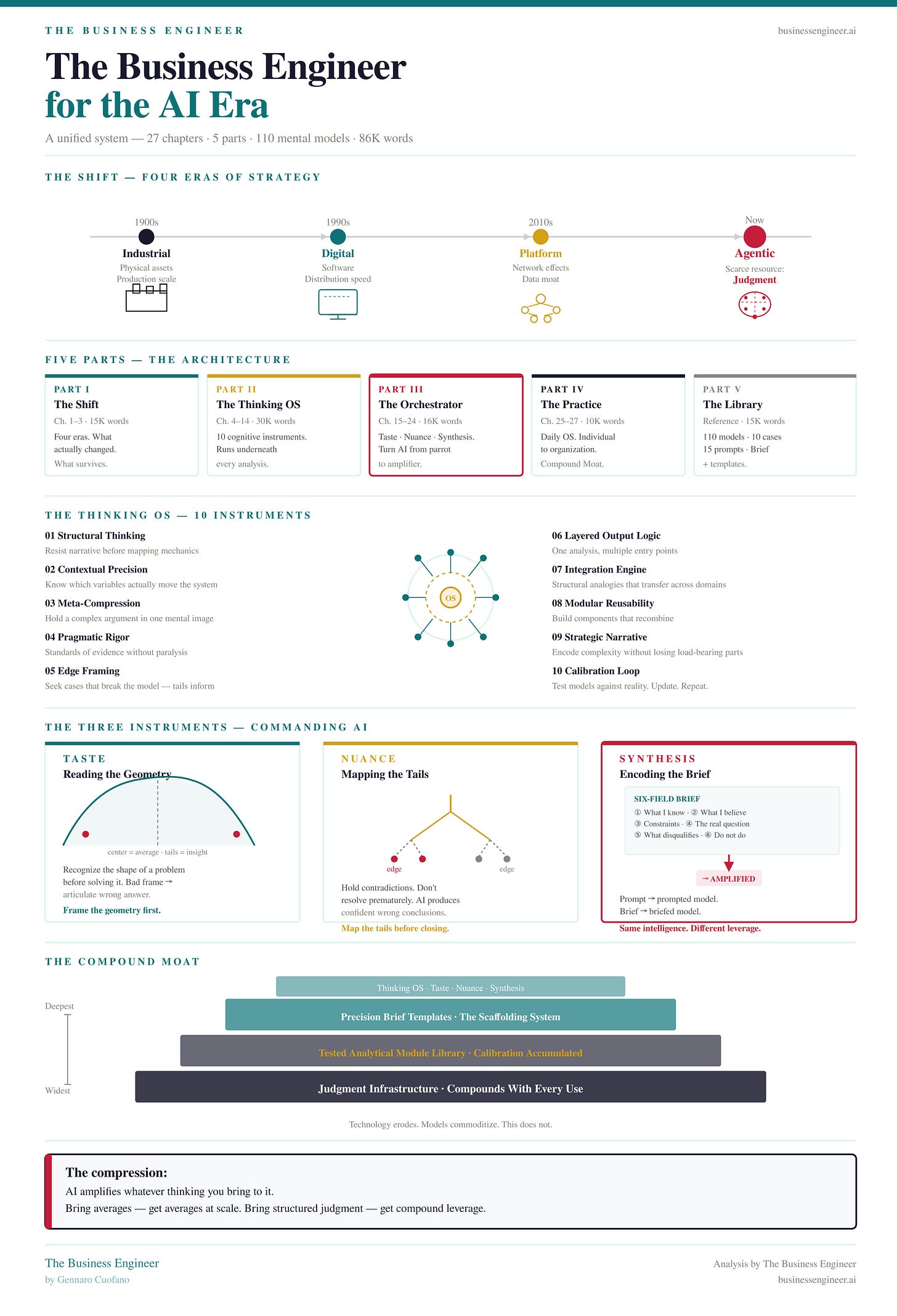

The Business Engineer, for the AI Era

The Visual Book

Most people using AI are getting faster at being wrong.

They’re producing more — more text, more slides, more analysis — at higher velocity, with higher confidence, toward conclusions that were never examined in the first place. The model is fluent. The thinking underneath it isn’t. And fluency, it turns out, is an excellent disguise for mediocrity.

This is the central problem the book addresses. Not how to use AI. How to think well enough that AI becomes something other than a sophisticated autocomplete for your existing biases.

The Executive Plan comes with the Business Engineer for AI + Claude OS Skill

The Shift That Actually Happened

Business strategy has moved through four eras:

Industrial — competitive advantage lived in physical assets and production scale

Digital — it shifted to software, distribution, and speed of replication

Platform — network effects and data became the moat; the asset was the graph

Agentic — the scarce resource is no longer information, capital, or even technology. It’s judgment.

The executives who panicked when ChatGPT launched, the consultants who immediately rebranded as “AI strategists,” the frameworks that treat LLMs as a feature to be bolted onto existing processes — all of them misread the transition. They saw a productivity tool. What arrived was a judgment amplifier. The distinction matters enormously, because amplifiers don’t improve the signal. They make it louder.

Bring average thinking to a powerful model and you get average thinking at scale. Bring structured, precise, well-compressed thinking — and you get leverage that compounds.

The Thinking OS

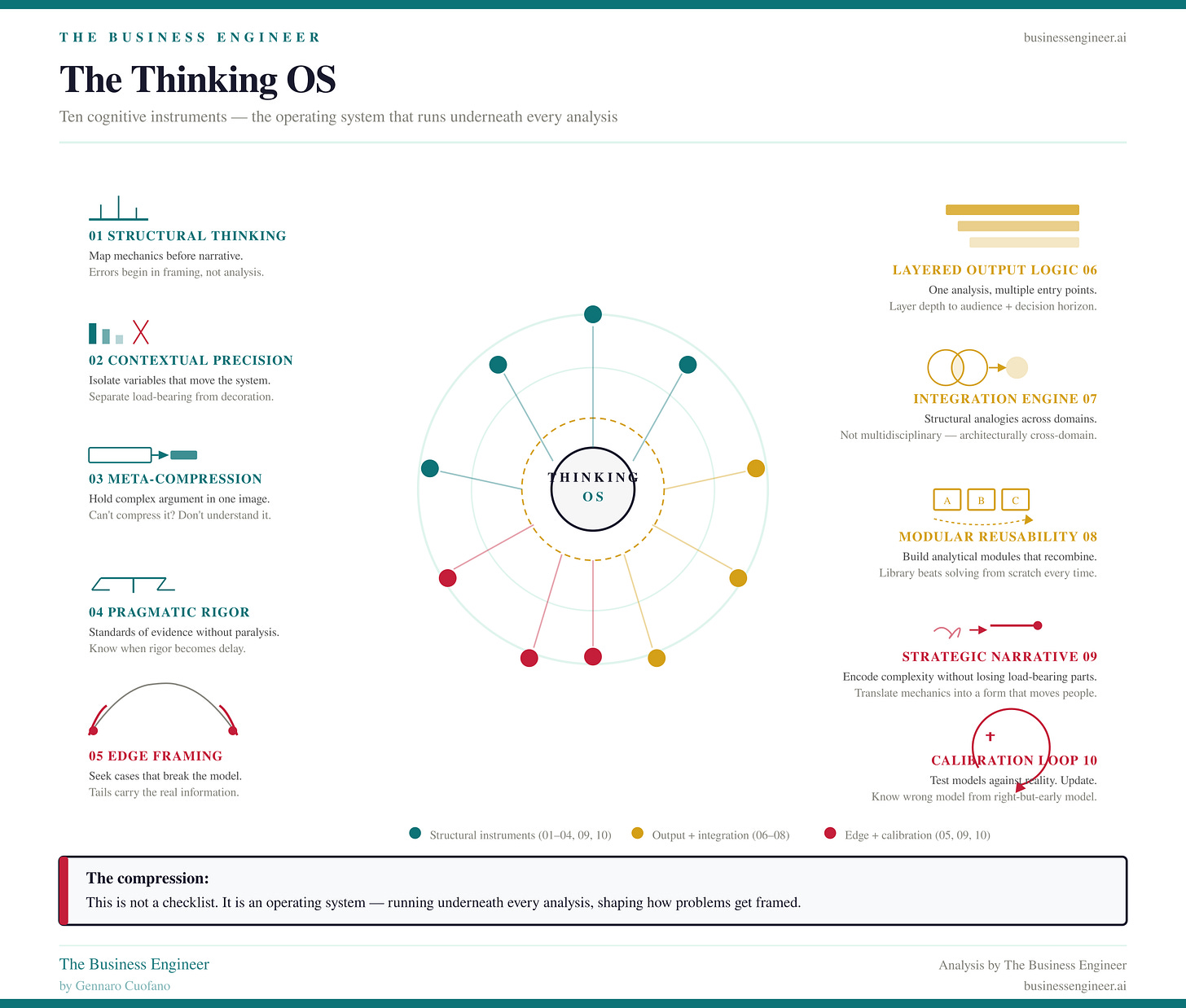

The book’s intellectual core is a ten-instrument operating system for how a strategist processes reality. Not a framework in the consulting sense, meaning a checklist to be applied and forgotten, but an actual operating system: something that runs underneath everything else, shaping how problems get framed before any analysis begins.

The ten instruments:

Structural Thinking as Default — resist the pull toward narrative before you’ve mapped the mechanics. Most strategic errors are made before the analysis starts, in the moment when a situation gets prematurely storified.

Contextual Precision — know which variables actually move the system and which are decoration. The ability to distinguish load-bearing elements from noise is what separates diagnosis from description.

Meta-Compression — hold a complex argument in a single mental image. Compress without losing the structural integrity. If you can’t compress it, you don’t fully understand it yet.

Pragmatic Rigor — maintain standards of evidence without becoming paralyzed by uncertainty. Know when you have enough to act and when you’re using rigor as a delay mechanism.

Edge Framing — deliberately seek the cases that break your model, because those contain the real information. The center of the distribution tells you what’s common. The tails tell you what’s true.

Layered Output Logic — structure your outputs so each layer addresses a different audience, abstraction level, or decision horizon. One analysis, multiple entry points.

Integration Engine — the capacity to pull from disparate domains and find the structural analogies that transfer. Not multidisciplinary in the shallow sense — architecturally cross-domain.

Modular Reusability — build analytical components that can be recombined. The strategist who solves from scratch every time is slower and less accurate than the one who has a library of tested modules.

Strategic Narrative Compression — translate mechanical understanding into a form that moves people. Not dumbing down. Encoding the complexity into a shape that carries it without losing it.

Calibration Loop — the meta-instrument. The ongoing process of testing your models against reality, updating them, and knowing the difference between a model that’s wrong and a model that’s right but early.

This is the part most business books skip. They go straight to strategy, bypassing the meta-level question of whether the mind doing the strategy is well-calibrated. It’s like teaching chess openings to someone who hasn’t yet learned to read the board.

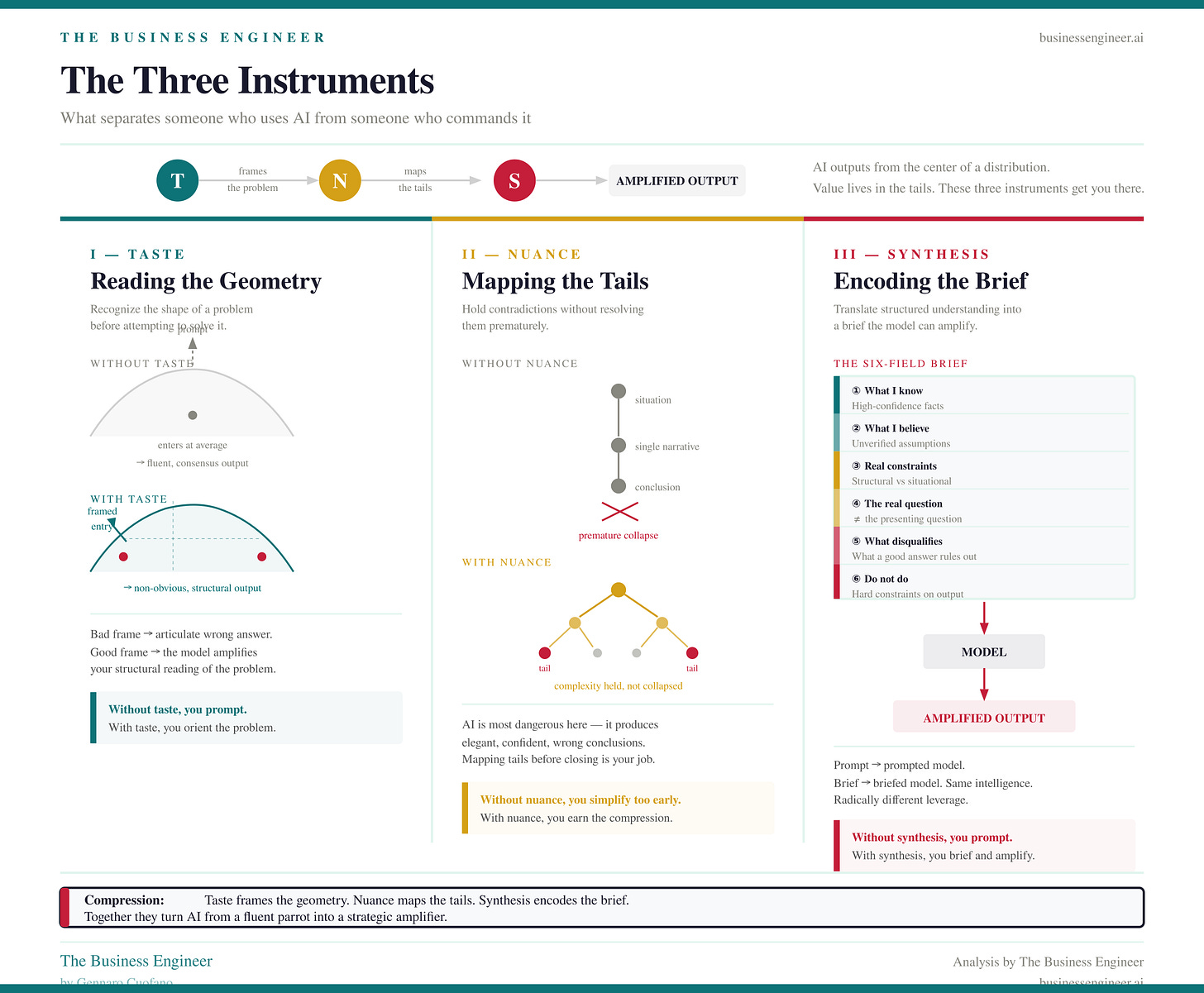

The Three Instruments

Language models output from the center of a distribution. That’s not a flaw — it’s the architecture. They are trained to produce what is most statistically expected given the context. Which means, by default, they produce the consensus. The average. The thing that would occur to most people.

This is useful for many things. It is structurally limiting for strategic work, where the value almost always lives in the tails — the non-obvious framing, the overlooked constraint, the second-order effect that changes the entire picture.

Three instruments address this directly.

Taste — Reading the Geometry

Taste is the ability to recognize the shape of a problem before you begin solving it. To look at a situation and identify its structural type: is this a coordination failure or a capability gap? A secular shift or a cyclical fluctuation? A genuine dilemma or a false choice dressed up as one?

Without taste, you feed the model a prompt. With taste, you feed it a properly oriented problem. The difference in output quality isn’t marginal — it’s architectural. The model amplifies whatever frame you give it. Give it a bad frame and you get a highly articulate wrong answer.

Nuance — Mapping the Tails

Nuance is the capacity to hold contradictions without resolving them prematurely. To map the tails of a distribution rather than flatten them into a single narrative that fits on a slide.

Most strategic errors aren’t made in the analysis phase. They’re made in the framing phase, when genuine complexity gets compressed into something manageable but no longer accurate. The nuance instrument resists that compression until it’s earned — until you’ve actually accounted for the cases that don’t fit, not just noted them and moved on.

This is also where AI is most dangerous. A model working from a frame that lacks nuance will produce elegant, confident, internally consistent arguments for the wrong conclusion. It will rarely flag its own oversimplification. That’s your job.

Synthesis — Encoding the Brief

Synthesis is where taste and nuance become actionable. It’s the translation of structured understanding into a context precise enough that the model can amplify your thinking rather than substitute for it.

The operational form of this is the Six-Field Brief developed in the book:

What you know with high confidence

What you believe but haven’t verified

What constraints are structural versus situational

What the actual question is — distinct from the presenting question

What a good answer looks like and what would disqualify it

What the model should not do

Most people skip all six fields and write a prompt. The quality gap between a prompted model and a briefed model is the gap between a junior analyst given a vague task and a senior analyst given a precise one. Same intelligence, radically different output.
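As a rough illustration of the difference between a prompt and a brief, the six fields can be sketched as a small data structure that refuses to render until every field is filled in. This is a minimal sketch, not the book’s specification: the class name `SixFieldBrief`, the field names, the section labels, and the example content are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class SixFieldBrief:
    """Hypothetical container for the six fields listed above."""
    known: str            # what you know with high confidence
    believed: str         # what you believe but haven't verified
    constraints: str      # structural versus situational constraints
    actual_question: str  # the real question, not the presenting one
    good_answer: str      # what a good answer looks like / what disqualifies it
    must_not: str         # what the model should not do

    def missing(self) -> list[str]:
        """Return the names of fields left empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Render the brief as labeled text, refusing if any field is empty."""
        gaps = self.missing()
        if gaps:
            raise ValueError("brief is incomplete: " + ", ".join(gaps))
        # Illustrative labels; any wording that keeps the six fields distinct works.
        labels = {
            "known": "Known with high confidence",
            "believed": "Believed but unverified",
            "constraints": "Constraints (structural vs. situational)",
            "actual_question": "Actual question",
            "good_answer": "What a good answer looks like (and what disqualifies it)",
            "must_not": "Do not",
        }
        return "\n\n".join(
            f"{labels[f.name]}:\n{getattr(self, f.name).strip()}" for f in fields(self)
        )


# Invented example content, for illustration only.
example = SixFieldBrief(
    known="Churn rose in the last two quarters.",
    believed="The rise is concentrated in one customer segment.",
    constraints="Structural: pricing is contractually fixed. Situational: team is short-staffed.",
    actual_question="Is this a product problem or a positioning problem?",
    good_answer="Names one testable cause; disqualified if it only lists generic churn drivers.",
    must_not="Do not recommend discounts.",
)

print(example.render())
```

The design choice worth noting is the hard failure on an empty field: skipping a field becomes a visible decision rather than a silent default.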

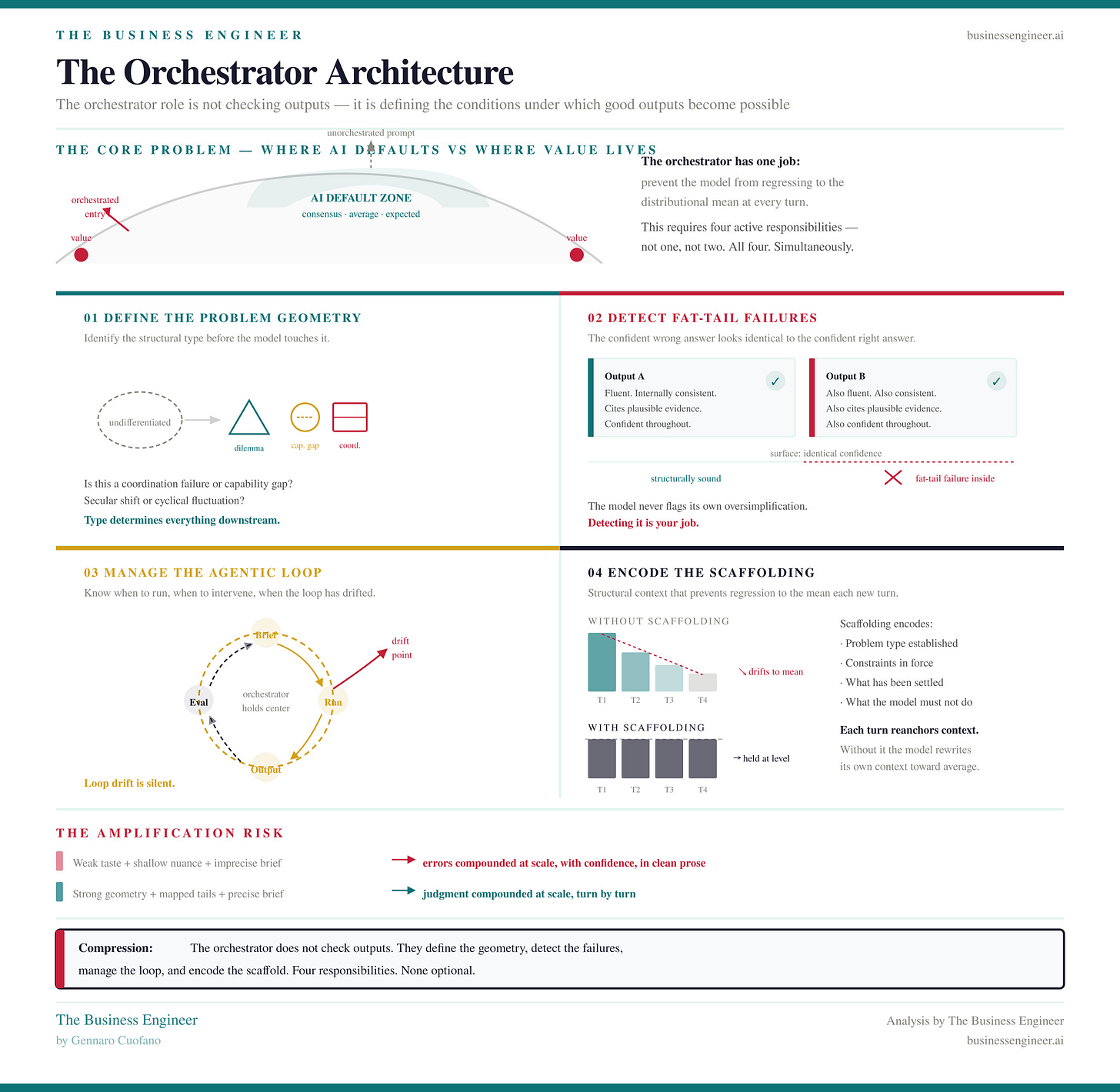

The Orchestrator Architecture

Part III goes deeper into what it actually means to run AI as a system rather than a tool. The key insight is that the orchestrator role — the human in the loop — isn’t about checking outputs. It’s about:

Defining the problem geometry before the model touches it

Detecting fat-tail failures — the confident wrong answers that look exactly like confident right answers

Managing the agentic loop — knowing when to let the model run, when to intervene, and when the loop has drifted from the actual objective

Encoding the scaffolding — the structural context that prevents the model from regressing to the distributional mean with each new turn

The Amplification Problem, addressed in Chapter 23, is the core risk: an orchestrator with weak taste, shallow nuance, and imprecise briefs doesn’t just fail to get value from AI — they actively compound their errors. The model takes whatever is imprecise in your thinking and returns it to you at scale, with confidence, in clean prose.

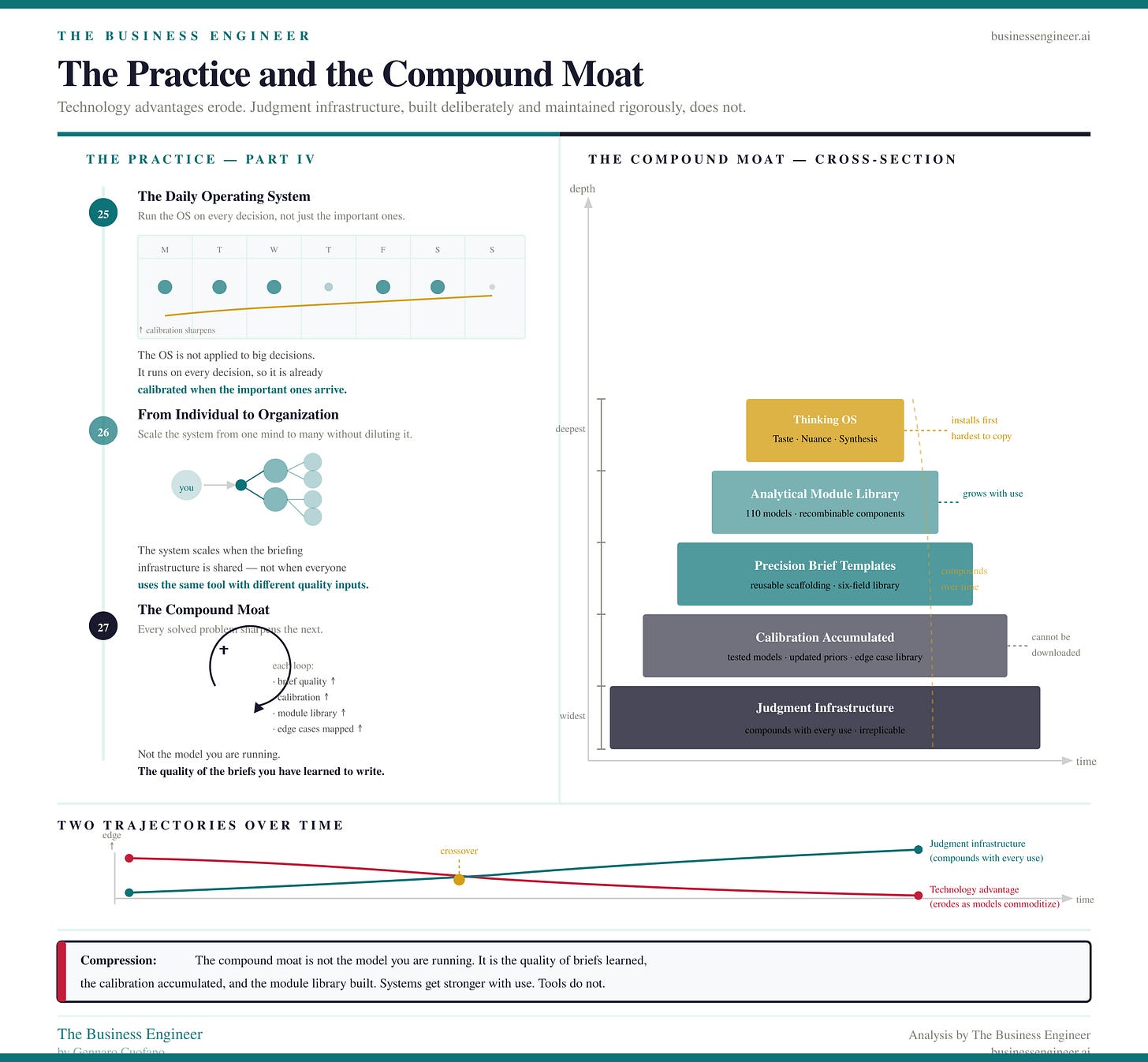

The Practice and the Compound Moat

Part IV closes the loop from individual cognition to organizational capability.

The Daily Operating System (Chapter 25) is built around a simple principle: the Thinking OS is not something you apply to important decisions. It’s something you run on every decision, so that it’s already calibrated when the important ones arrive.

The compound moat, the book’s closing argument, is this: the organizations that will be hardest to compete with in five years are not the ones with the best models. Models are commoditizing. The hardest to compete with will be those that have built the best briefing infrastructure, the sharpest judgment about problem geometry, and the deepest library of tested analytical modules.

That infrastructure compounds. Every well-solved problem improves calibration. Every brief written precisely becomes a template. Every edge case mapped becomes a warning system.

Technology advantages erode. Judgment infrastructure, deliberately built and rigorously maintained, does not.

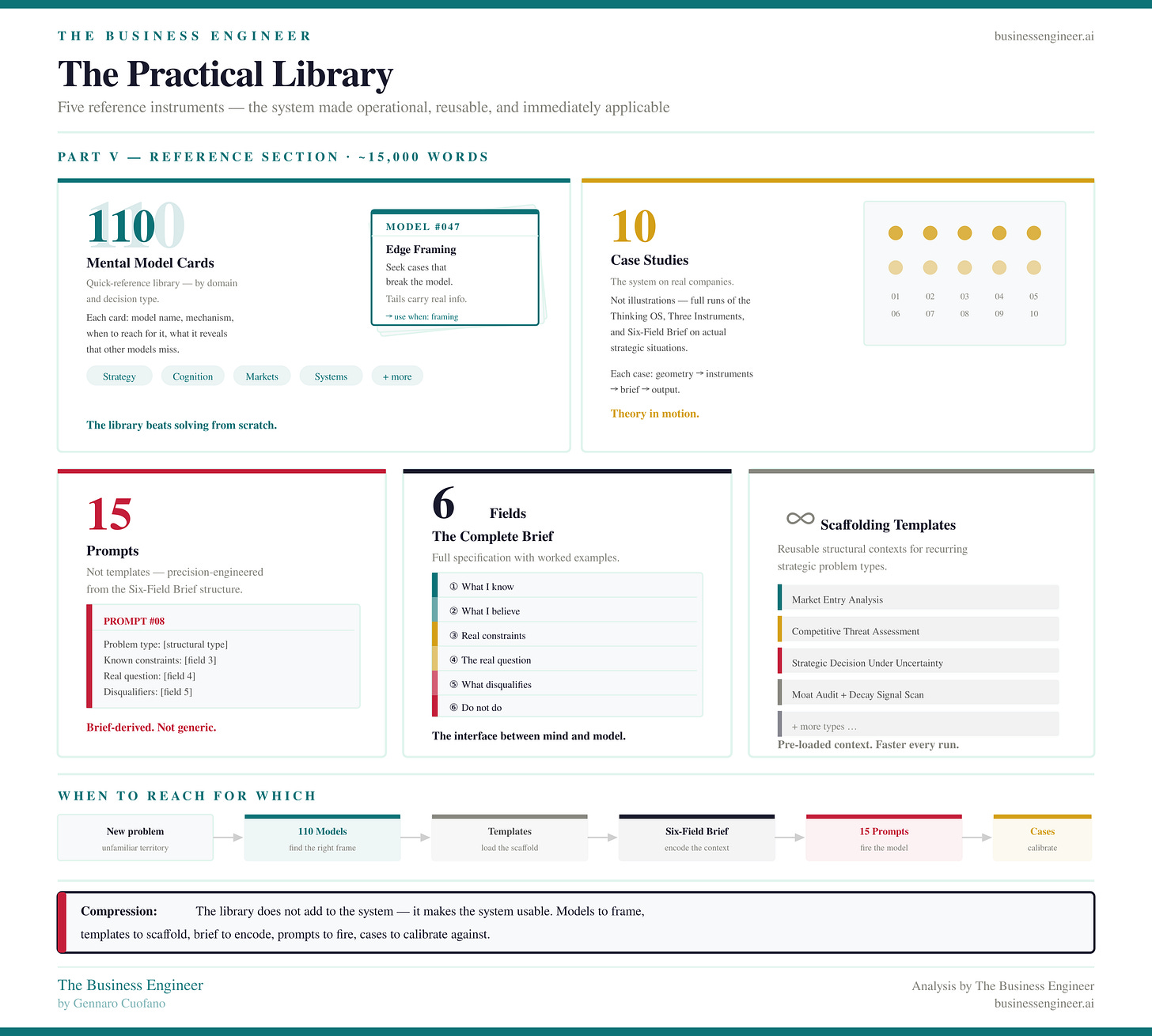

The Practical Library

Part V is the reference layer that makes the system usable in practice:

110 Mental Model Cards — quick-reference summaries of the full model library, organized by domain and decision type

10 Case Studies — the system applied to real companies across different industries and strategic situations

15 Prompts — not generic templates, but precision-engineered prompts derived directly from the Six-Field Brief structure

The Six-Field Brief — Complete Reference — the full specification with worked examples

Scaffolding Templates — reusable structural contexts for recurring strategic problem types

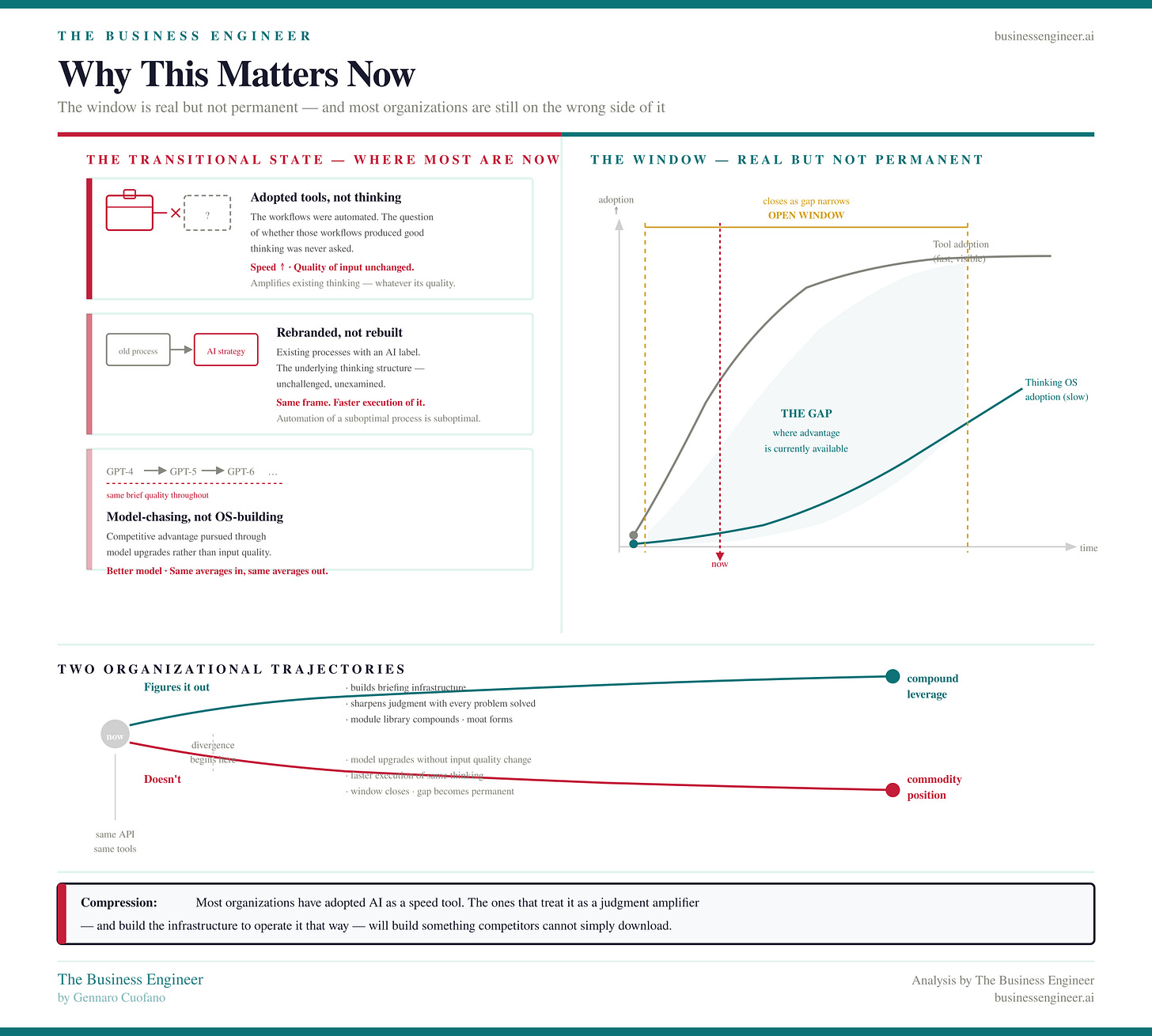

Why This Matters Now

The window in which this distinction creates competitive advantage is real but not permanent. Most organizations are currently in a transitional state: they’ve adopted the tools but not the thinking. They’ve automated their workflows without examining whether those workflows were producing good thinking in the first place.

The ones that figure this out first will build something genuinely difficult to replicate — not a technology advantage available to everyone on the same API, but a judgment infrastructure that gets sharper with every use.

The compound moat isn’t the model you’re running. It’s the quality of the briefs you’ve learned to write. The precision of the frames you’ve developed. The calibration accumulated by running the loop thousands of times across real problems, updating the models, and building a library of tested analytical components that no competitor can simply download.

That’s what the system is building toward. And systems, unlike tools, get stronger with use.

With massive ♥️ Gennaro Cuofano, The Business Engineer