The Business Engineer OS, Thinking as a System

Most people use AI the way they use a search engine. They ask a question. They get an answer. They move on.

What they rarely do is build a system: a set of thinking tools so deeply embedded in how they work with AI that output quality compounds over time rather than resetting with every new conversation.

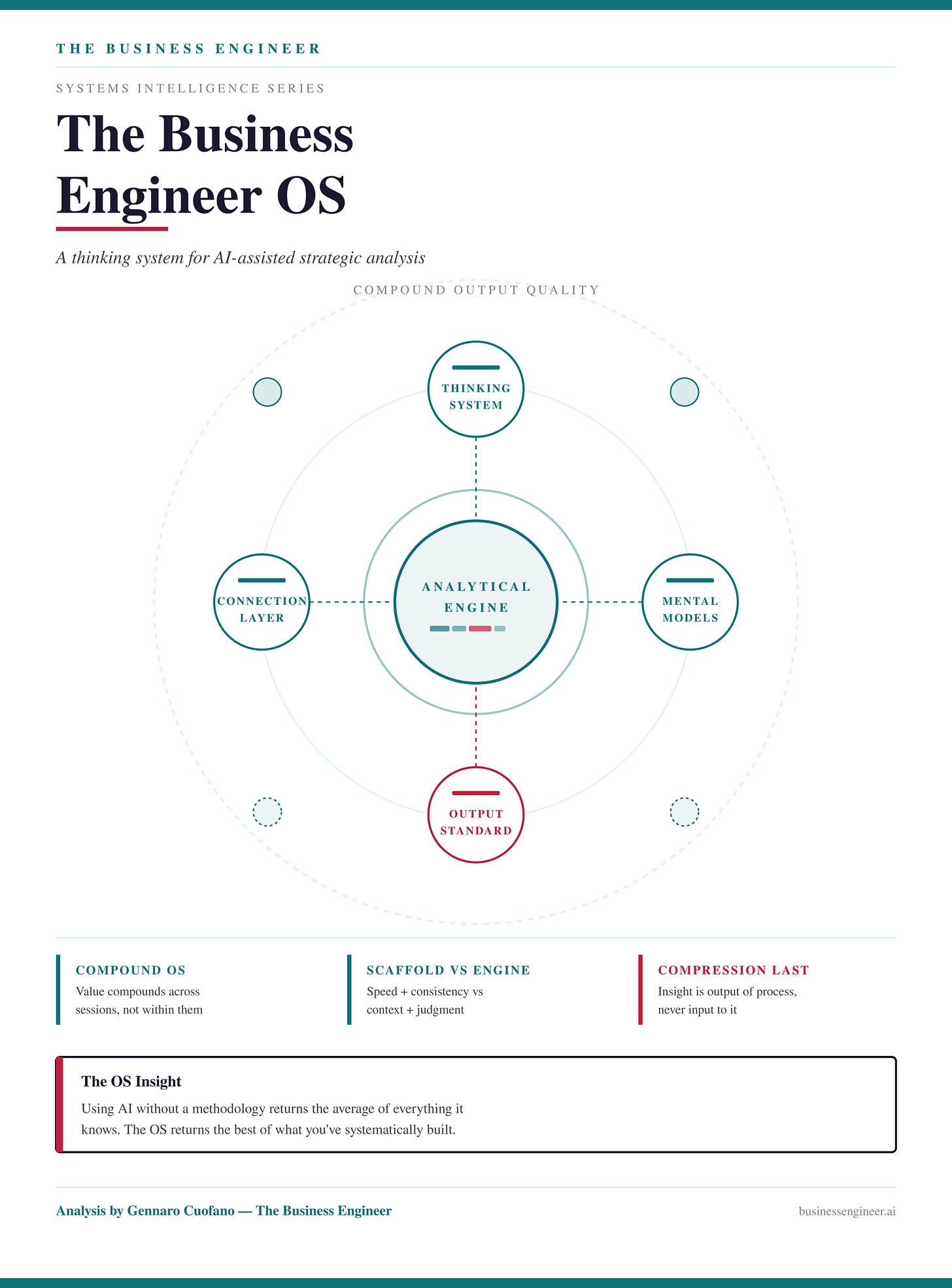

That gap between using AI and systematizing AI is where The Business Engineer OS lives.

This piece explains what the OS is, why the underlying infrastructure changes the nature of strategic analysis work, and what this means for anyone serious about turning AI into a genuine thinking partner rather than a faster Google.

If you’re an existing founding member, ping us back to try it out!

What the Problem Actually Is

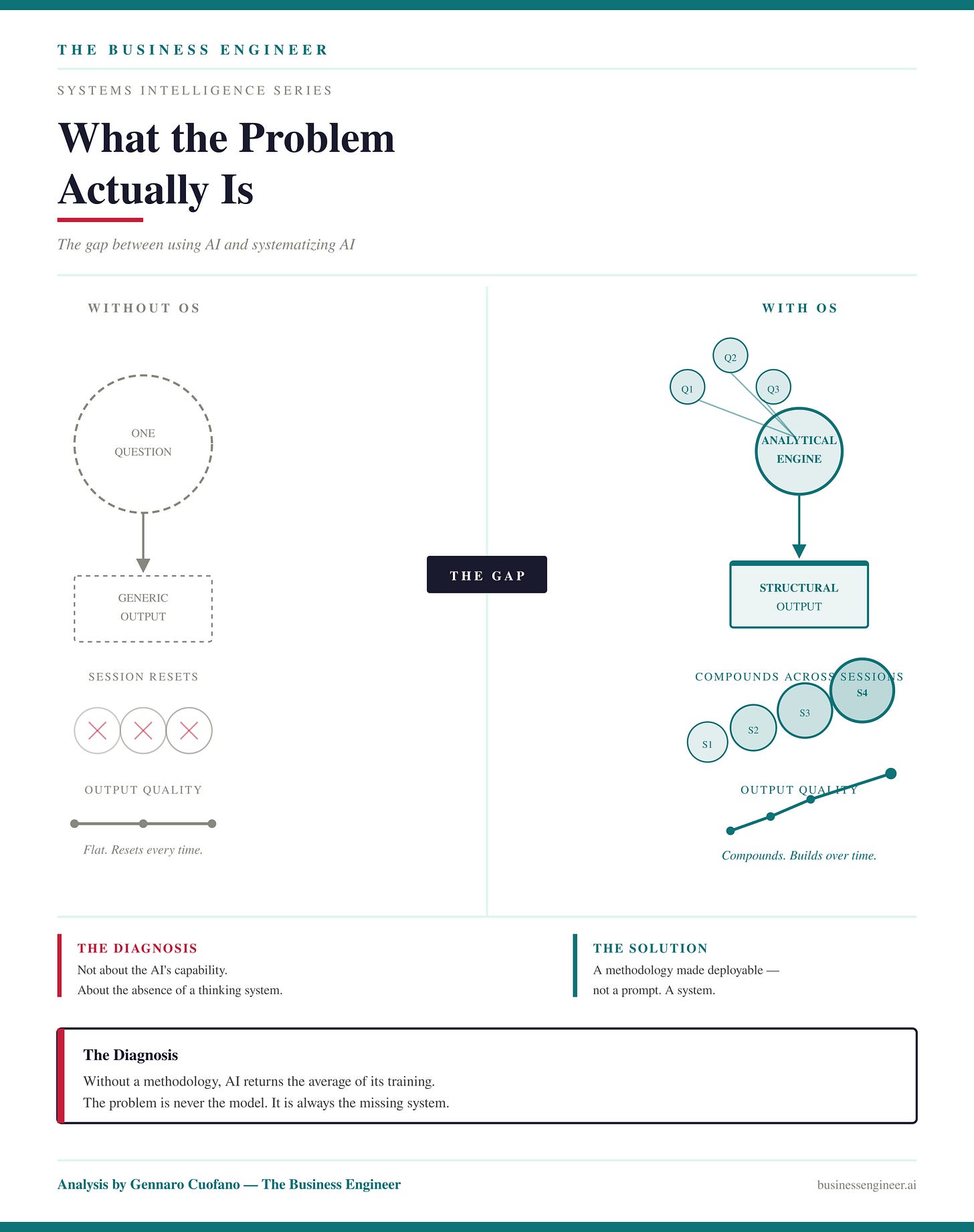

The standard complaint about AI-generated analysis is that it feels generic. Competent but flat. Accurate but forgettable.

The diagnosis underneath that complaint is almost never about the AI’s capability. It is about the absence of a thinking system.

When you interact with AI without a methodology, what you get back reflects the average of everything the model has ever been trained on. It knows the standard frameworks. It can describe a flywheel in general terms. But it does not know your specific analytical vocabulary, your preferred mental model sequence, the particular lens you apply when you encounter a platform business versus a hardware company versus an infrastructure play.

It cannot maintain the thread of a publication series. It cannot position a new analysis within a body of prior work. It cannot enforce the quality standard that distinguishes structural insight from narrative decoration.

The Business Engineer OS solves this. It is not a prompt. It is a methodology made deployable — a system that tells AI not just what to analyze but how to think, in what sequence, using which tools, toward which standard of output.

What the OS Contains

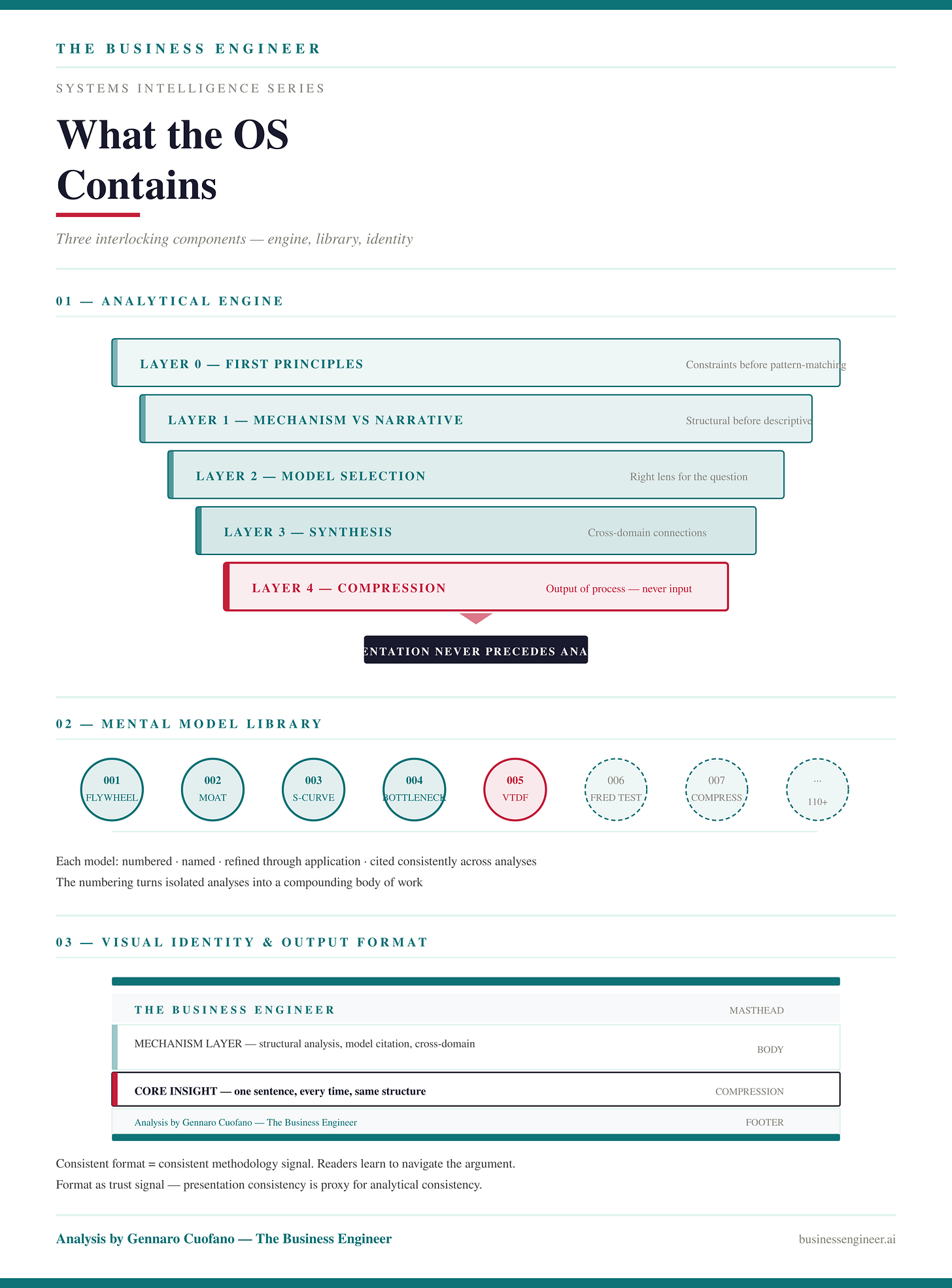

At the center of the OS is an analytical engine that runs every analysis through a structured sequence of layers. The sequence is not optional and it is not decorative. It exists because the most common failure mode in strategic analysis is not factual error — it is premature compression. Reaching conclusions before the structural work is done. Formatting before thinking. Telling a story before identifying the mechanism.

The engine forces the sequence. It checks whether you are looking at a mechanism or a narrative before doing anything else. It surfaces the first principles constraints that apply to the specific business before pattern-matching against the model library.

The compression — the single sentence that captures what is actually true about a business — comes last, as an output of the process, not an input to it.

This is why the hard rule of the OS is that presentation never precedes analysis. A beautifully formatted output built on shallow thinking is worse than an ugly output built on deep thinking. The engine enforces that discipline in every session.
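To make the enforced sequence concrete, here is a minimal sketch of an ordering guard. The layer names are paraphrased from the description above and the `Analysis` class is hypothetical; treat it as an illustration of the idea, not as the OS's actual implementation.

```python
from dataclasses import dataclass, field

# Hypothetical layer names, paraphrased from the text; the real OS may differ.
LAYERS = ("mechanism_check", "first_principles", "model_matching", "compression")


@dataclass
class Analysis:
    subject: str
    findings: dict = field(default_factory=dict)

    def run_layer(self, layer: str, finding: str) -> None:
        """Record a layer's finding, refusing any layer that is out of order."""
        done = len(self.findings)
        expected = LAYERS[done] if done < len(LAYERS) else None
        if layer != expected:
            raise RuntimeError(f"cannot run {layer!r}; next layer is {expected!r}")
        self.findings[layer] = finding

    @property
    def compression(self) -> str:
        """The compression exists only once every earlier layer has run."""
        if "compression" not in self.findings:
            raise RuntimeError("compression is only available after every layer has run")
        return self.findings["compression"]
```

Calling `run_layer("compression", ...)` on a fresh `Analysis` raises an error, which is the point: the conclusion cannot be written before the structural work is recorded.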

The second component is a library of mental models developed specifically through the BE publication’s body of work. These are not generic business school frameworks. They are models built from the practice of analyzing real businesses over years — each one numbered, named, and refined through application.

The numbering matters more than it might appear. When the same model is cited across analyses published months apart, readers can trace the through-line. The library turns individual analyses into a compounding body of work rather than a collection of isolated pieces.

The third component is a visual identity and output format system. Consistency at the output level signals consistency at the analytical level. Readers learn to navigate the format. They know where the mechanism lives in the piece, where the mental models are cited, what the compression block means.

What the Connection Layer Adds

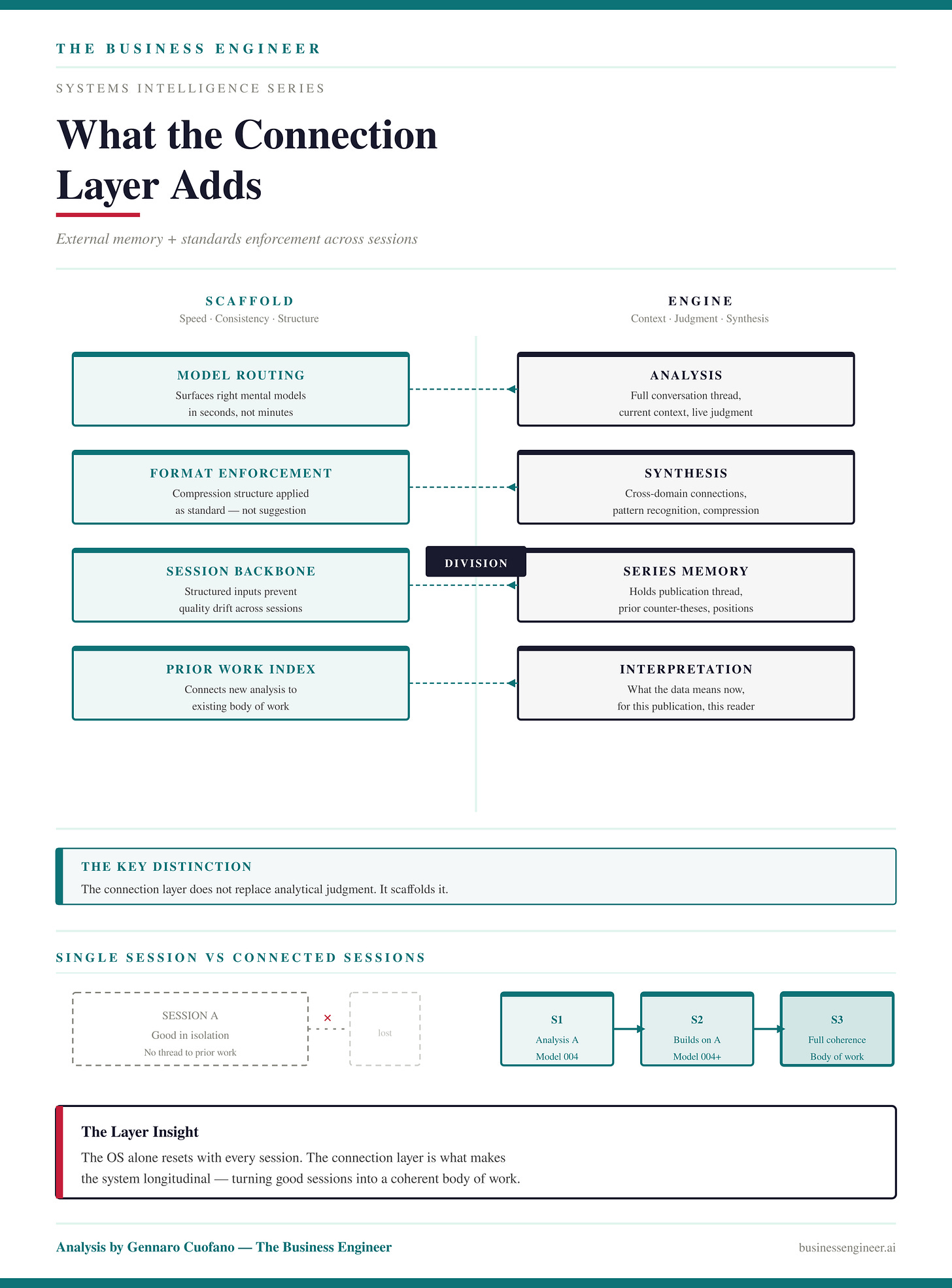

Having a thinking system embedded in your AI workflow is already a step change over using AI without one. But the OS alone still operates within a single conversation. Each session begins fresh.

A new conversation does not automatically know that three months ago there was an analysis that established a counter-thesis about the same market. Each session, in isolation, is good. Across sessions, the compounding that makes a publication distinctive gets lost.

This is the problem the connection layer solves. It acts as the OS’s external memory and standards enforcer. Not by doing the analysis itself — that remains the AI’s job, because the AI has the context, the conversation thread, and the analytical judgment — but by providing the structured inputs that make the analysis consistent, fast, and connected to prior work.
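As a rough illustration of what "external memory" could look like, the sketch below keeps an archive of prior analyses and returns the ones that cover the same market, newest first. The `PriorAnalysis` record, its fields, and the archive entries are all invented for the example; nothing here describes how the connection layer is actually built.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PriorAnalysis:
    title: str
    published: date
    market: str
    thesis: str


def prior_work_for(market, archive):
    """Return earlier analyses of the same market, newest first."""
    matches = [a for a in archive if a.market.lower() == market.lower()]
    return sorted(matches, key=lambda a: a.published, reverse=True)


# Invented archive entries, for illustration only.
archive = [
    PriorAnalysis("Example piece 1", date(2024, 1, 10), "cloud infrastructure", "placeholder thesis"),
    PriorAnalysis("Example piece 2", date(2024, 4, 2), "cloud infrastructure", "placeholder counter-thesis"),
    PriorAnalysis("Example piece 3", date(2024, 3, 5), "consumer hardware", "placeholder thesis"),
]
```

A new session on the same market would start with the two cloud infrastructure pieces already in view, including the counter-thesis, instead of rediscovering them by accident.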

In practice, this means several things. When an analysis session begins, the connection layer immediately surfaces the right mental models for the specific problem being analyzed — numbered, with rationale, matched to the business question at hand.

This is not a trivial step. Selecting the right analytical lens from a library of over a hundred models requires judgment that benefits from a dedicated lookup rather than relying on the AI to surface it from memory alone. The layer does it in seconds.
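One simple way to picture a dedicated lookup is tag matching: each numbered model carries a few tags, and the question is routed to the models with the most overlap. This is only a sketch under that assumption. The library entries are placeholders, not the real BE models, and the actual routing logic may work quite differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MentalModel:
    number: int
    name: str
    tags: frozenset


# Placeholder entries only; these are NOT the actual BE library.
LIBRARY = [
    MentalModel(1, "Placeholder model A", frozenset({"platform", "network effects"})),
    MentalModel(2, "Placeholder model B", frozenset({"hardware", "supply chain"})),
    MentalModel(3, "Placeholder model C", frozenset({"infrastructure", "platform"})),
]


def route_models(question_tags, library=LIBRARY, limit=2):
    """Return up to `limit` (model, rationale) pairs ranked by tag overlap."""
    wanted = set(question_tags)
    scored = []
    for model in library:
        overlap = model.tags & wanted
        if overlap:
            rationale = "matches: " + ", ".join(sorted(overlap))
            scored.append((len(overlap), model, rationale))
    # Highest overlap first; ties broken by model number for stable output.
    scored.sort(key=lambda item: (-item[0], item[1].number))
    return [(model, rationale) for _, model, rationale in scored[:limit]]
```

Each result comes back with its number and a one-line rationale, mirroring the "numbered, with rationale" output described above.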

When the analysis is complete, the same layer enforces the compression format — not as a suggestion but as a structured standard. Core insight, mechanism explanation, action items. Every time, in the same structure, in the same BE voice.
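Treating the format as a structured standard rather than a suggestion could be as simple as a check that rejects incomplete blocks. The sketch below assumes the three parts named above (core insight, mechanism explanation, action items); the `Compression` class and `validate_compression` function are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Compression:
    core_insight: str
    mechanism: str
    action_items: list = field(default_factory=list)


def validate_compression(block):
    """Return a list of problems; an empty list means the block passes."""
    problems = []
    if not block.core_insight.strip():
        problems.append("core insight is empty")
    if not block.mechanism.strip():
        problems.append("mechanism explanation is empty")
    if not [item for item in block.action_items if item.strip()]:
        problems.append("no action items")
    return problems
```

A check like this only covers structure. Whether the insight is any good remains a judgment call, which is why it stays with the analysis rather than the enforcement step.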

The key distinction is that the connection layer does not replace analytical judgment. It scaffolds it. The analysis, the synthesis, the compression, the interpretation: these require context and the kind of judgment that only comes from knowing the full conversation. The AI provides that. The connection layer provides the series memory and prior work that a fresh session lacks, along with speed, consistency, and the structured backbone that prevents quality from drifting across sessions.
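The division of labor can be sketched as a session function that calls the scaffold before and after the judgment step. Everything here is an assumption made for illustration: `run_session` and the stub functions are hypothetical, and in a real setup the `analyze` step would be the AI conversation itself.

```python
def run_session(question, lookup_models, analyze, check_format):
    """Scaffold before and after; judgment in the middle."""
    models = lookup_models(question)    # scaffold: fast, repeatable lookup
    draft = analyze(question, models)   # engine: the step that needs judgment
    problems = check_format(draft)      # scaffold: standards enforcement
    if problems:
        raise ValueError("draft rejected: " + "; ".join(problems))
    return draft


# Stand-in functions so the sketch runs; none of these reflect real components.
def stub_lookup(question):
    return [1, 3]


def stub_analyze(question, models):
    return {"core_insight": "placeholder insight", "models_cited": models}


def stub_check(draft):
    return [] if draft.get("core_insight") else ["core insight is missing"]
```

The scaffold never writes the analysis. It decides what the engine starts with and whether what comes back meets the standard.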

What This Means in Practice

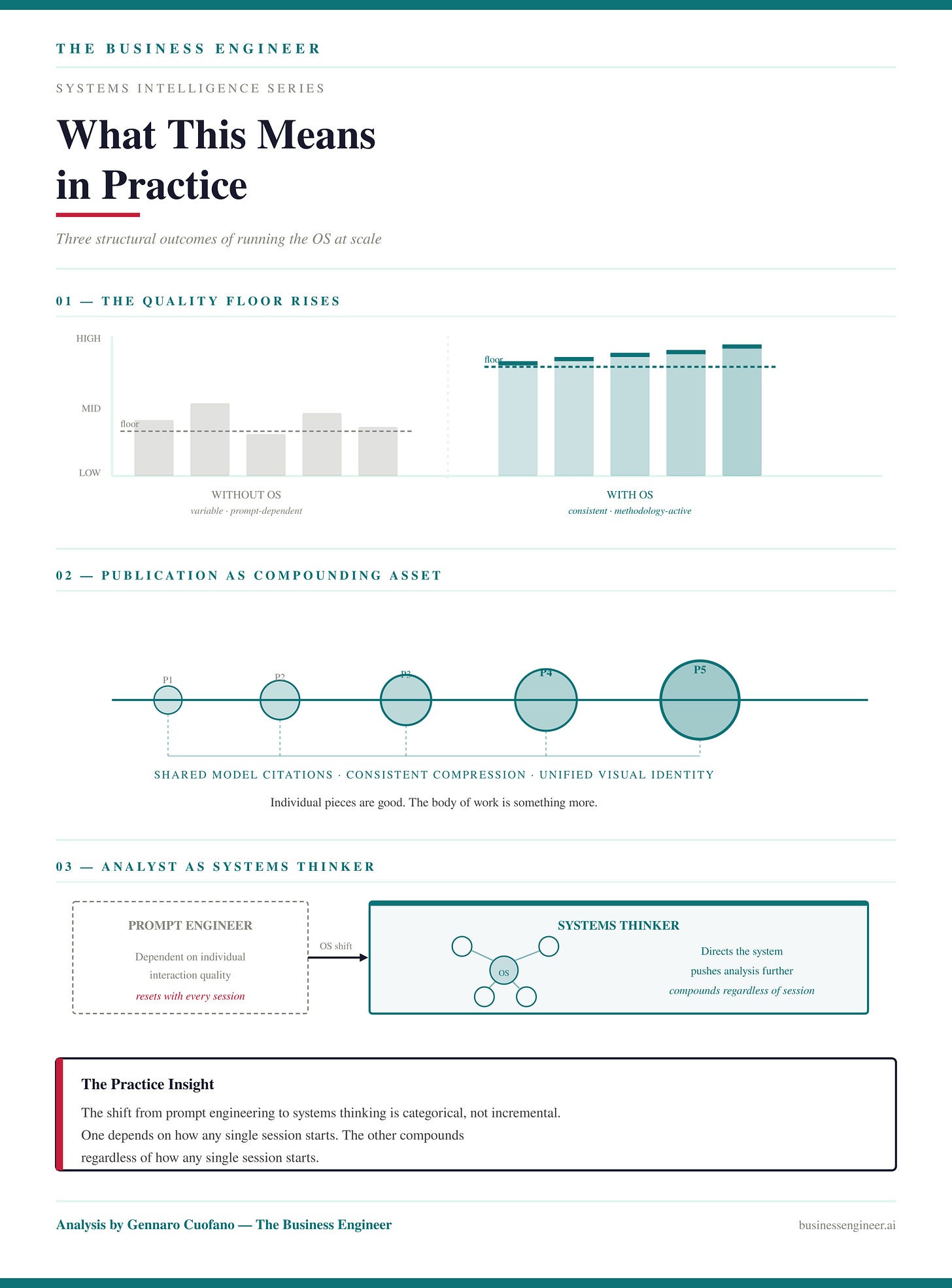

The quality floor rises. Every session begins with the right methodology already active, the right models already in scope, the output standard already enforced. There is no drift between a session where the prompt was well-crafted and a session where it was not.

The publication becomes a compounding asset rather than a collection of individual pieces. When mental models are cited consistently, when every compression follows the same format, when every visual carries the same identity, readers encounter a body of work with internal coherence. The individual pieces are good. The body of work is something more.

The analyst becomes a systems thinker rather than a prompt engineer. The shift is from asking AI the right questions to having built the right system for AI to operate within. These are categorically different relationships with the technology. One is dependent on individual interaction quality. The other compounds regardless of how any single interaction begins.

This is what the Business Engineer OS is being built toward. Not a better prompt. Not a smarter chatbot interaction. A thinking system that uses AI as the engine and the OS as the methodology — where the analyst’s role is to direct the system, push the analysis further, and build the publication that results.

Key Takeaways & Mental Models

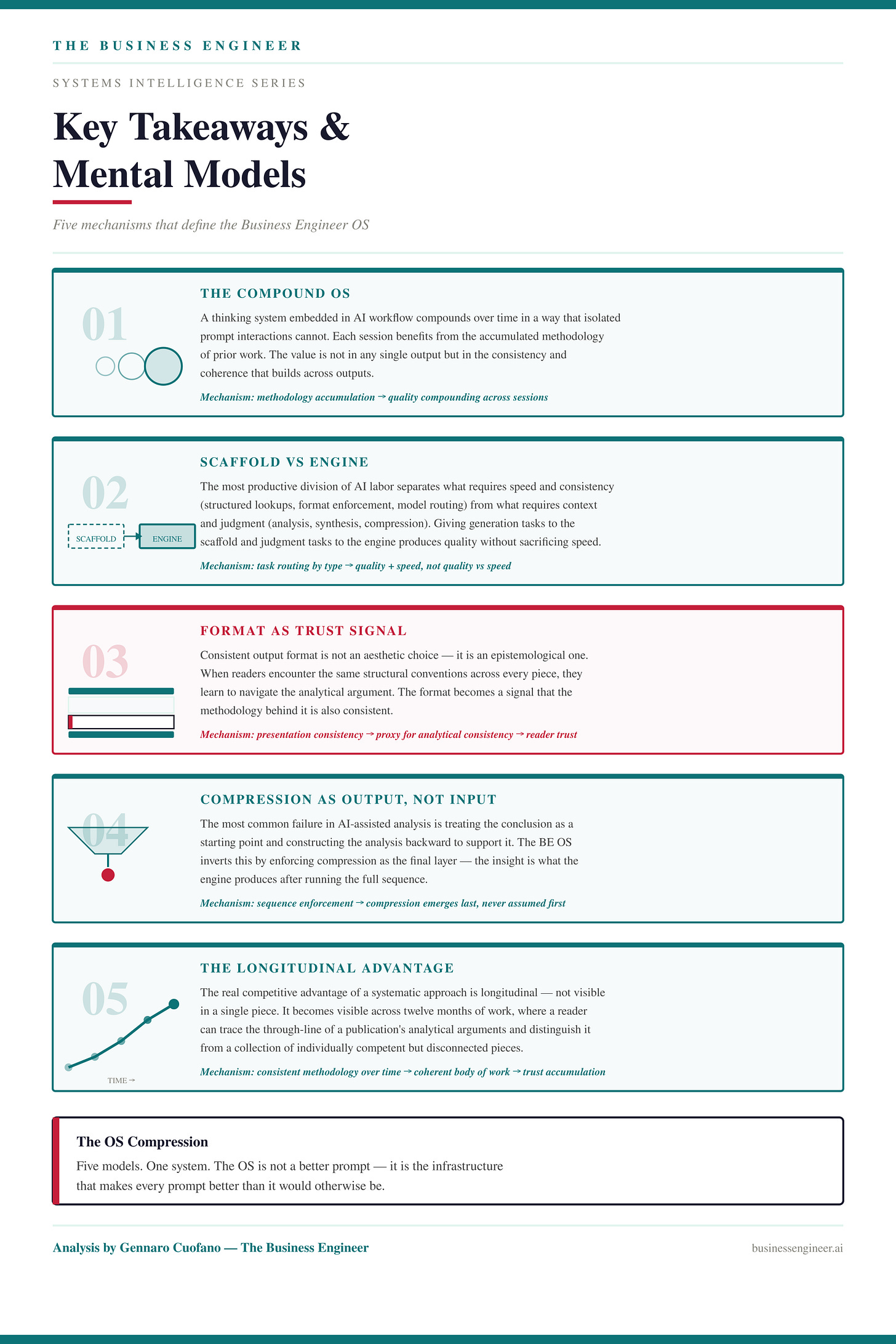

The Compound OS — a thinking system embedded in AI workflow compounds over time in a way that isolated prompt interactions cannot. Each session benefits from the accumulated methodology of prior work. The value is not in any single output but in the consistency and coherence that builds across outputs.

Scaffold vs Engine — the most productive division of AI labor separates what requires speed and consistency (structured lookups, format enforcement, mental model routing) from what requires context and judgment (analysis, synthesis, compression). Giving lookup and enforcement tasks to the scaffold and judgment tasks to the engine is the architecture that produces quality without sacrificing speed.

Format as Trust Signal — consistent output format is not an aesthetic choice. It is an epistemological one. When readers encounter the same structural conventions across every piece, they learn to navigate the analytical argument. The format becomes a signal that the methodology behind it is also consistent. Trust in the presentation is a proxy for trust in the thinking.

Compression as Output, Not Input — the most common failure in AI-assisted analysis is treating the conclusion as a starting point and constructing the analysis backward to support it. The BE OS inverts this by enforcing compression as the final layer. The insight is what the engine produces after running the sequence — not what the analyst decided to say before it began.

The Longitudinal Advantage — the real competitive advantage of a systematic approach is longitudinal. It is not visible in a single piece. It becomes visible across twelve months of work, where a reader can trace the through-line of a publication’s analytical arguments, recognize the coherence of a body of work, and distinguish it from a collection of individually competent but disconnected pieces.

With massive ♥️ Gennaro Cuofano, The Business Engineer