The Harness as the Agentic Moat

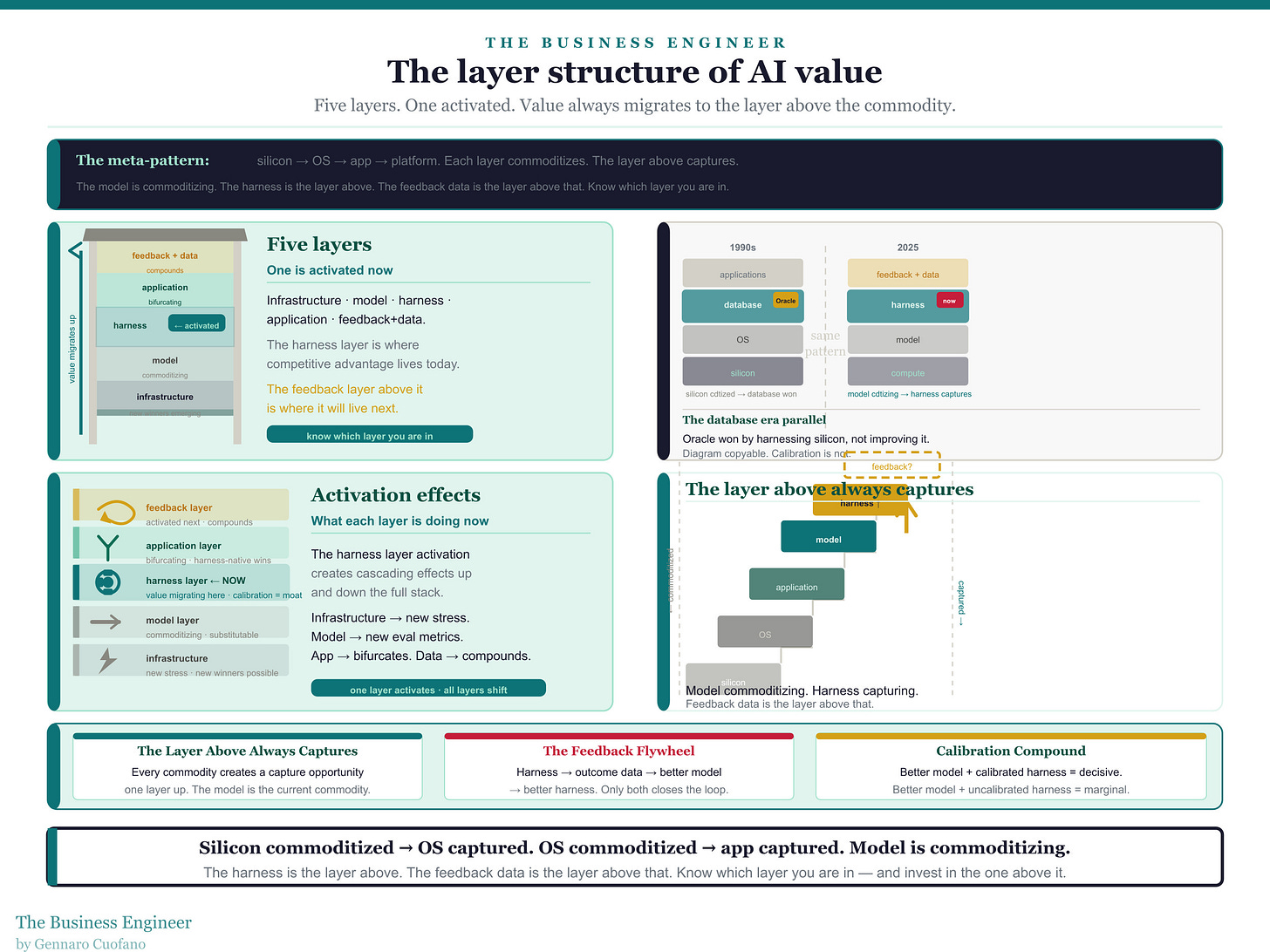

For most of the past decade, the dominant logic of AI competition was simple: scale wins. More compute, more data, more parameters — and the model gets better. The companies that understood this earliest and executed it most aggressively built enormous leads. The frontier model was the product. Everything else was secondary.

That logic has not disappeared. But it has been joined by a second logic that is now equally consequential. Models have crossed a capability threshold where the binding constraint is no longer what the model can do in a single turn — it is what a system built around the model can do over time. The shift from scaling to deployment is the shift from “how good is the model?” to “how well can you harness it?”

To see what this shift looks like from the inside, consider the work of Anthropic's internal Labs team: building and running production-quality multi-agent harnesses designed to push Claude beyond its baseline on long-running autonomous coding tasks. Their architecture uses a generator-evaluator structure inspired by Generative Adversarial Networks. One agent produces output, a separate, calibrated agent evaluates it, and the system runs in sprint-based loops with explicit context management and handoff logic. In these experiments, the decisive improvement came from the harness around the model rather than from the model alone. When a frontier lab documents, in concrete architectural detail, that the system and not the model produced the result, that is a meaningful signal about where the value is moving.
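The generator-evaluator loop is easier to reason about as code. The sketch below is hypothetical and is not Anthropic's implementation. `run_harness`, `pass_threshold`, the sprint cap, and the plain-string handoff are illustrative assumptions. The toy generator and evaluator stand in for what would be separate model calls in a real harness.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical role signatures; in a real harness each would wrap a separate model call.
GenerateFn = Callable[[str, str], str]                 # (task, handoff) -> candidate
EvaluateFn = Callable[[str, str], Tuple[float, str]]   # (task, candidate) -> (score, critique)


@dataclass
class SprintRecord:
    sprint: int
    output: str
    score: float
    critique: str


def run_harness(task: str, generate: GenerateFn, evaluate: EvaluateFn,
                max_sprints: int = 5, pass_threshold: float = 0.8) -> List[SprintRecord]:
    """Alternate generator and evaluator in bounded sprints until the evaluator passes the work."""
    handoff = ""  # compact note carried between sprints instead of the full transcript
    history: List[SprintRecord] = []
    for sprint in range(1, max_sprints + 1):
        candidate = generate(task, handoff)
        score, critique = evaluate(task, candidate)  # judged by a separate role, not self-assessed
        history.append(SprintRecord(sprint, candidate, score, critique))
        if score >= pass_threshold:
            break
        # Only the latest score and critique cross the sprint boundary.
        handoff = f"Previous attempt scored {score:.2f}. Critique: {critique}"
    return history


# Toy stand-ins so the sketch runs without any model API.
def toy_generate(task: str, handoff: str) -> str:
    return task.upper() if "Critique" in handoff else task


def toy_evaluate(task: str, candidate: str) -> Tuple[float, str]:
    if candidate.isupper():
        return 1.0, "meets the spec"
    return 0.3, "output must be uppercase"


history = run_harness("build a todo app", toy_generate, toy_evaluate)
for record in history:
    print(record.sprint, record.score, record.critique)
# 1 0.3 output must be uppercase
# 2 1.0 meets the spec
```

The design choice worth noticing is that the evaluator is a distinct role with its own pass threshold, so the generator never grades its own work, and each sprint starts from a short handoff rather than an ever-growing transcript.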

This piece maps the full structure of that shift: the layer architecture of the AI stack, what the harness era is activating at each layer, how different players are positioned, and the mental models for what comes next.

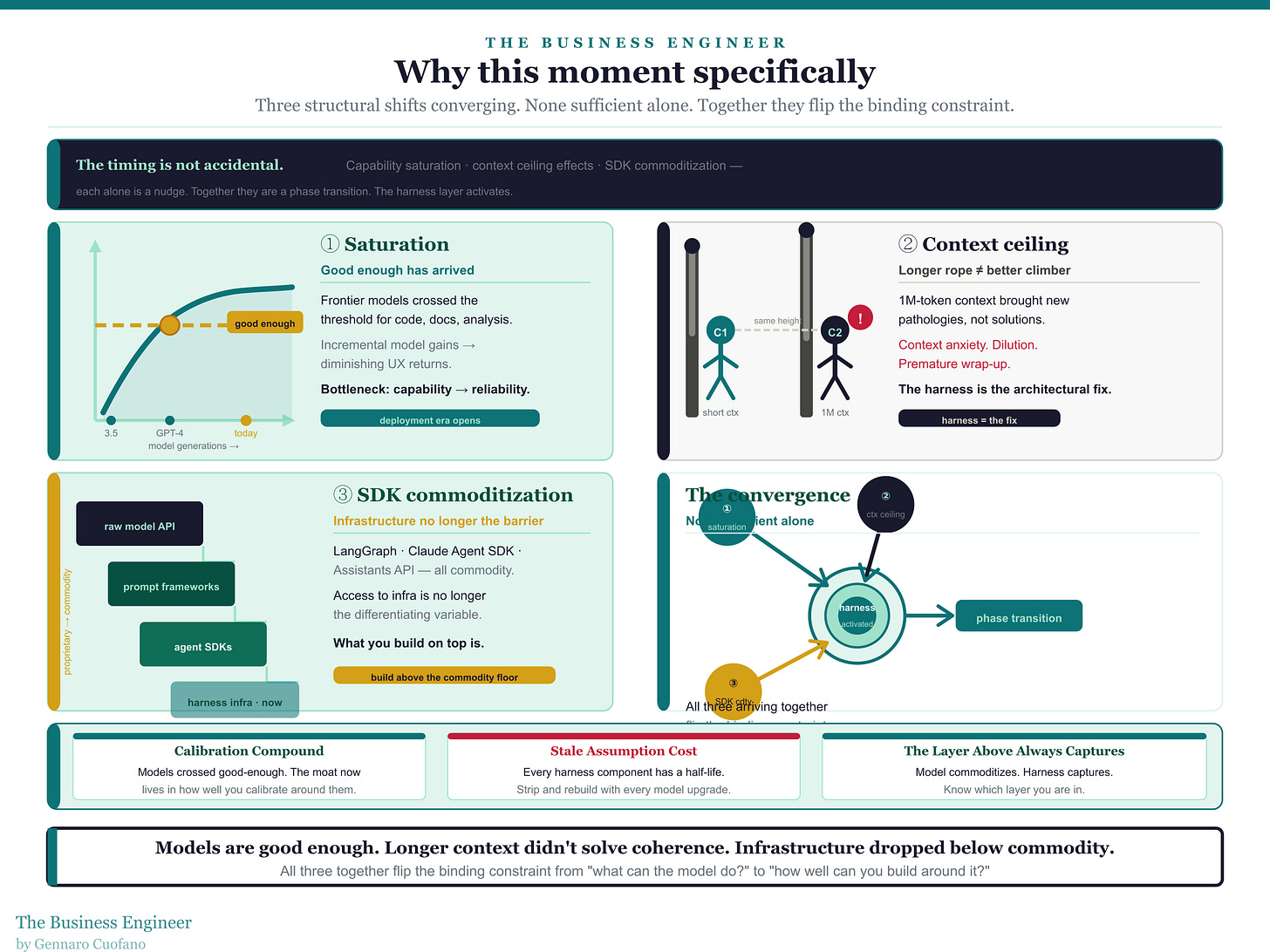

Why this moment specifically

The timing is not accidental. Three structural shifts are converging.

The first is capability saturation at the task level. For a growing range of tasks — code generation, document drafting, basic analysis — the best frontier models are already good enough that incremental model improvements produce diminishing returns in user experience. The bottleneck is no longer model capability on the task. It is reliability, coherence, and output quality over extended autonomous runs.

The second is context window expansion running into ceiling effects. Longer context windows were meant to solve the coherence problem in long-running tasks. They helped, but they introduced new pathologies, including what Anthropic's engineers call context anxiety: models begin wrapping up work prematurely as they approach what they believe is their context limit. Attention dilutes, and the model loses track of what it was doing four hours earlier. The harness is the engineering response to these pathologies. It is what you build when you discover that a longer rope is not the same as a better climber.
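Context management is the part of the harness aimed most directly at these pathologies. One common mitigation, assumed here rather than taken from any specific system, is to reset the context well before the hard limit and seed the fresh window with a compact handoff note. In the sketch below, `ManagedContext`, the 0.7 reset fraction, the word-count token estimate, and the `condense` summarizer are all hypothetical placeholders.

```python
from typing import Callable, List


def approx_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: counts whitespace-separated words."""
    return len(text.split())


def condense(messages: List[str]) -> str:
    """Hypothetical summarizer. A real harness would ask a model for a structured progress note."""
    return f"{len(messages)} messages condensed; latest: {messages[-1]}"


class ManagedContext:
    """Context buffer that resets to a handoff note before the token budget fills up."""

    def __init__(self, budget: int, reset_fraction: float = 0.7,
                 summarize: Callable[[List[str]], str] = condense) -> None:
        self.budget = budget                  # hypothetical window size, in approximate tokens
        self.reset_fraction = reset_fraction  # reset threshold as a fraction of the budget
        self.summarize = summarize
        self.messages: List[str] = []
        self.resets = 0

    def used(self) -> int:
        return sum(approx_tokens(m) for m in self.messages)

    def append(self, message: str) -> None:
        self.messages.append(message)
        # Reset early so the model never works in a nearly full window.
        if self.used() > self.reset_fraction * self.budget:
            self._reset()

    def _reset(self) -> None:
        handoff = "HANDOFF: " + self.summarize(self.messages)
        self.messages = [handoff]  # the fresh window starts from the handoff note alone
        self.resets += 1


ctx = ManagedContext(budget=40)
for step in range(1, 9):
    ctx.append(f"step {step} done: wrote module {step}")
print(ctx.resets, ctx.used(), ctx.messages[0])
# 2 11 HANDOFF: 4 messages condensed; latest: step 8 done: wrote module 8
```

Resetting at a fraction of the budget, rather than at the limit, keeps the model out of the nearly full regime where premature wrap-up tends to appear. The quality of the handoff note then becomes the main lever; a production summarizer would plausibly be a model call that records decisions, open tasks, and file state.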

The third is the agent SDK layer maturing into commodity infrastructure. With Anthropic's Claude Agent SDK, OpenAI's Agents SDK, and LangGraph all stable enough to build production systems on, the infrastructure for multi-agent harnesses is no longer a barrier. What separates teams is what they build on top of it, and how carefully they have calibrated it against real production workloads.

The combination of these three shifts means harness design has moved from a research interest to a competitive variable. The teams that understood this in 2023 and 2024 are now compounding on a two-year lead.