The Harnessing Map of AI

This Week In Business AI [Week #13-2026]

There is a gap at the center of the enterprise AI market that almost no one is naming precisely.

On the one hand, AI capabilities are advancing at a rate without historical precedent in enterprise software. Every quarter brings models that are materially more capable than the last. The raw computational power available to any organization — through API access, cloud deployment, and open-source models that can be run on-premises — is staggering and continues to accelerate.

On the other hand, the ability of organizations to safely absorb, deploy, and extract value from that capability is advancing much more slowly. Gartner projects that over 40% of enterprise agentic AI projects will be canceled by 2027. Not because the models failed. Not because the use cases were wrong. Because the control infrastructure wasn’t in place. The capability existed and was impressive. The harnessing did not.

This is the harnessing gap, the distance between what AI can do and what enterprises can safely deploy, and it is the defining structural tension of the current AI market phase. It will widen before it narrows, because model capabilities are advancing faster than governance, memory, and orchestration infrastructure can mature.

The companies building infrastructure to close that gap — at each of the four layers where it manifests — are building the most durable positions in the AI economy. The companies still competing on capability metrics are optimizing for a race that is already largely over.

This analysis maps that gap in full:

The four specific control problems enterprises must solve in sequence

Where each major player — Anthropic, OpenAI, Microsoft, Google, Meta — operates on that harnessing cascade

How each player’s position cascades back into the broader ecosystem, creating structural advantages and vulnerabilities that will define competitive outcomes through the rest of the decade

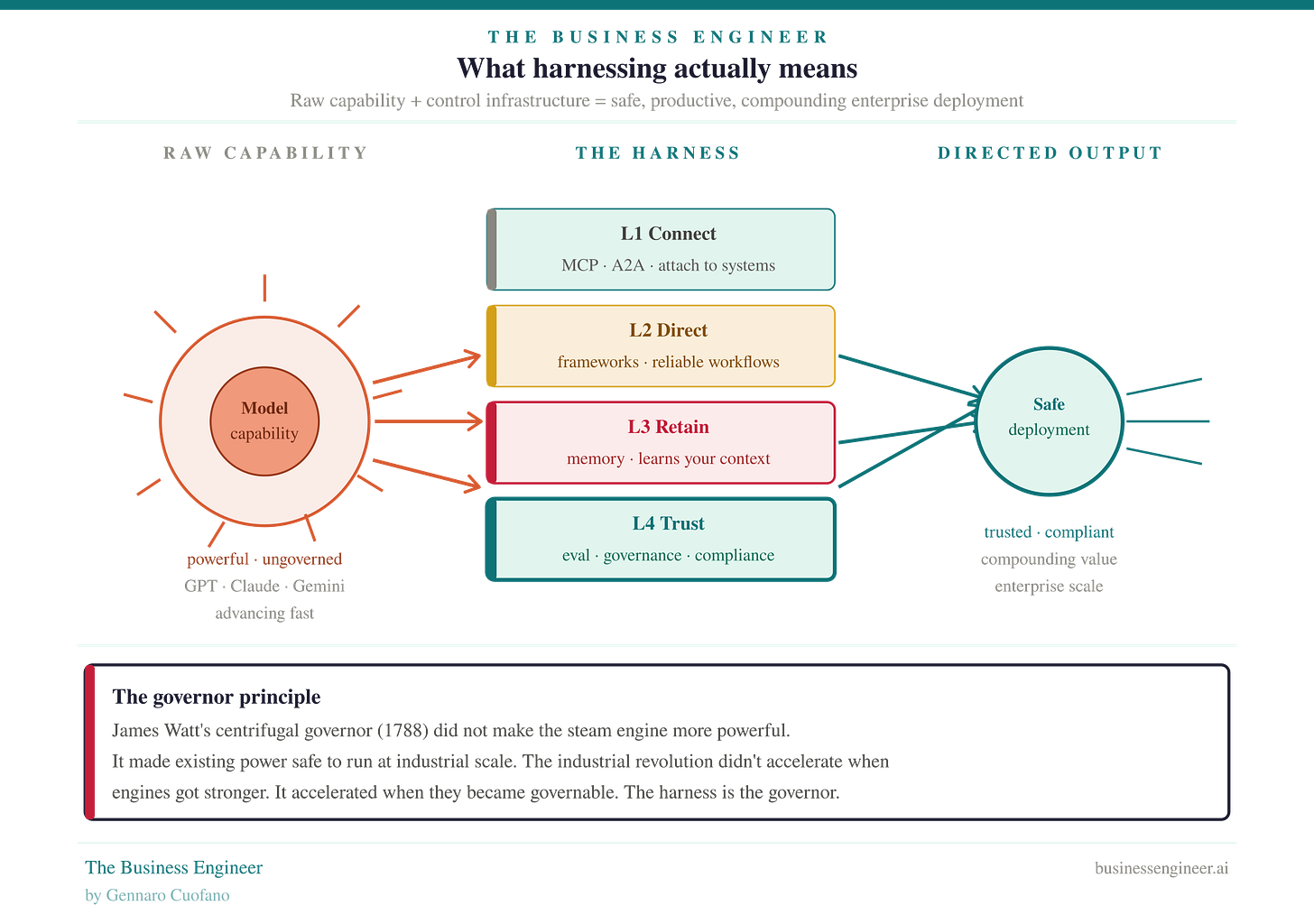

Part One: The Architecture — What Harnessing Actually Means

Harnessing is not a technical metaphor. It is a strategic one.

A harness is not what makes an animal powerful. The animal is powerful without it. A harness is what makes that power directable — what converts raw capability into useful work, in a controlled direction, without the power running away and causing damage. The harness is the control interface between raw capability and productive output.

In the AI context, the harness is the infrastructure — protocols, frameworks, memory systems, evaluation and governance tools — that sits between raw model capability and safe, productive enterprise deployment. Without it, capability exists but cannot be reliably directed, retained, or trusted. With it, even a less capable model can produce more enterprise value than a more capable model deployed without control infrastructure.

The steam engine analogy makes this concrete. The centrifugal governor that James Watt adapted for the steam engine in 1788 did not make the engine more powerful. It made the engine deployable. Early steam engines were genuinely dangerous: they ran at uncontrolled speeds, pressure built without warning, and boiler explosions killed workers. The Industrial Revolution did not accelerate when engines got more horsepower. It accelerated when the governor made existing power safe to run at industrial scale. The governor was the harnessing layer. Without it, the engine was a liability. With it, the engine became the foundation of a new economy.

AI in 2026 is at the same inflection point. The engines are extraordinarily capable. GPT-5, Claude Sonnet 4.6, and Gemini are remarkable instruments that would have seemed impossible five years ago. But without the control infrastructure that makes them governable, they are industrial-era steam engines without governors: impressive, occasionally explosive, and not safely deployable at enterprise scale wherever their outputs carry real consequences.

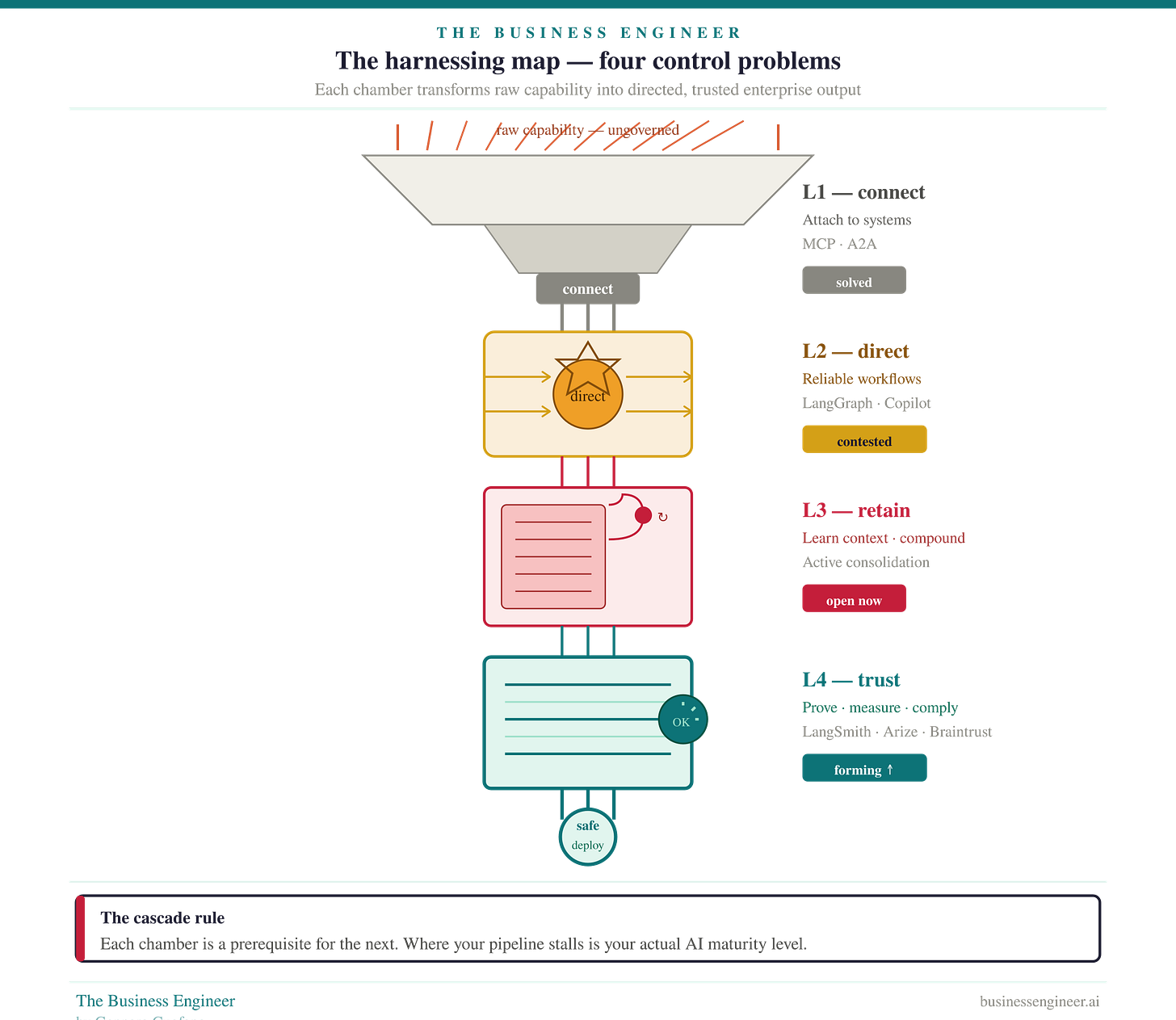

The Harnessing Map — Four Control Problems in Sequence

The harnessing map decomposes the AI control problem into four sequential layers. They are sequential because each one is a structural prerequisite for the next. Enterprises stall at the layer where their control infrastructure runs out. The layer where they stall defines their actual AI maturity more precisely than any other single metric.

The cascade logic is exact:

You cannot trust what you cannot measure

You cannot measure what you cannot retain

You cannot retain what you cannot direct

You cannot direct what you cannot connect

Each layer unlocks the next — and creates a new problem that only becomes visible once the previous layer is solved.
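For readers who think in code, the cascade can be sketched as an ordered prerequisite check. This is only an illustration under stated assumptions: the layer names below are hypothetical labels taken from the verbs in the cascade (connect, direct, retain, measure), and treating trust as the outcome once all four are in place is one reading of the sequence, not a formal model.

```python
# Hypothetical layer names, inferred from the verbs in the cascade above.
# Ordered from the first prerequisite to the last.
LAYERS = ["connect", "direct", "retain", "measure"]


def stall_layer(solved):
    """Return the first layer that is not solved, or None if all four are.

    A layer solved out of order does not count: every earlier layer is a
    prerequisite, so the walk stops at the first gap.
    """
    for layer in LAYERS:
        if layer not in solved:
            return layer
    return None


def can_trust(solved):
    """Trust is treated as the outcome: it needs all four layers in place."""
    return stall_layer(solved) is None


print(stall_layer({"connect", "direct"}))   # retain
print(stall_layer({"connect", "measure"}))  # direct
print(can_trust(set(LAYERS)))               # True
```

The second call shows the point of the sequence: an organization that has invested in measurement but cannot yet direct its systems still stalls at the direct layer, because the later investment cannot compensate for the earlier gap.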

The weekly newsletter is in the spirit of what it means to be a Business Engineer:

We always want to ask three core questions:

What’s the shape of the underlying technology that connects the value prop to its product?

What’s the shape of the underlying business that connects the value prop to its distribution?

How does the business survive in the short term while adhering to its long-term vision through transitional business modeling and market dynamics?

These non-linear analyses aim to isolate the short-term buzz and noise, identify the signal, and ensure that the short-term and the long-term can be reconciled.