The System Prompting Guide for The Business Engineer

This Week In Business AI [Week #16-2026]

Most practitioners approach system prompting the way they approach a search engine. You type something in. Something comes out. If the output is wrong, you adjust the input.

The mental model is linear: an instruction goes in, and a compliant output is supposed to come out. The practitioner’s job, in this framing, is to write clearer instructions.

This mental model is not just incomplete. It is structurally misleading in a way that compounds over time. The practitioner who holds it gets progressively better at writing instructions and progressively more confused about why the outputs keep disappointing them in the same ways.

The failure modes feel arbitrary — sometimes the model does what you asked, sometimes it doesn’t, and the gap between the two cases is not obvious from the instruction itself.

The reason is that practitioners are intervening in a system while thinking they are issuing commands. These are not the same activity, and the difference between them is not a matter of degree. It is a structural difference that determines what the practitioner can and cannot accomplish.

The correct mental model is systems thinking. Not as a metaphor. As a literal description of what is happening when you write a system prompt.

Why the linear model fails:

It locates the problem in the wrong place. When output is wrong, the instruction-writer edits the instruction. The systems thinker asks which structural element of the system generated the failure. These are different diagnoses and they produce different interventions.

It has no theory of resistance. A command-and-compliance model has no account of why a well-formed instruction consistently fails to produce a desired output. The system has its own dynamics that resist certain kinds of conditioning regardless of how clearly the instruction is written.

It compounds in the wrong direction. Each iteration of instruction refinement feels like progress but may be making the underlying structural problem worse: collapsing variance, reinforcing confirmation loops, and producing outputs that satisfy the surface specification while drifting further from the correct distributional region.

It has no leverage point theory. It treats all prompt elements as equally powerful. They are not. The gap between a parameter-level intervention and a paradigm-level intervention in terms of effect on output is enormous, and conflating them produces systematic underinvestment in the interventions that actually work.

What a System Prompt Actually Is

A large language model is a conditional probability distribution — P(output | context). The training process has shaped that distribution across an enormous corpus.

The shape reflects the statistical structure of the training data: what tends to follow what, which framings tend to produce which outputs, which domains cluster with which analytical patterns.

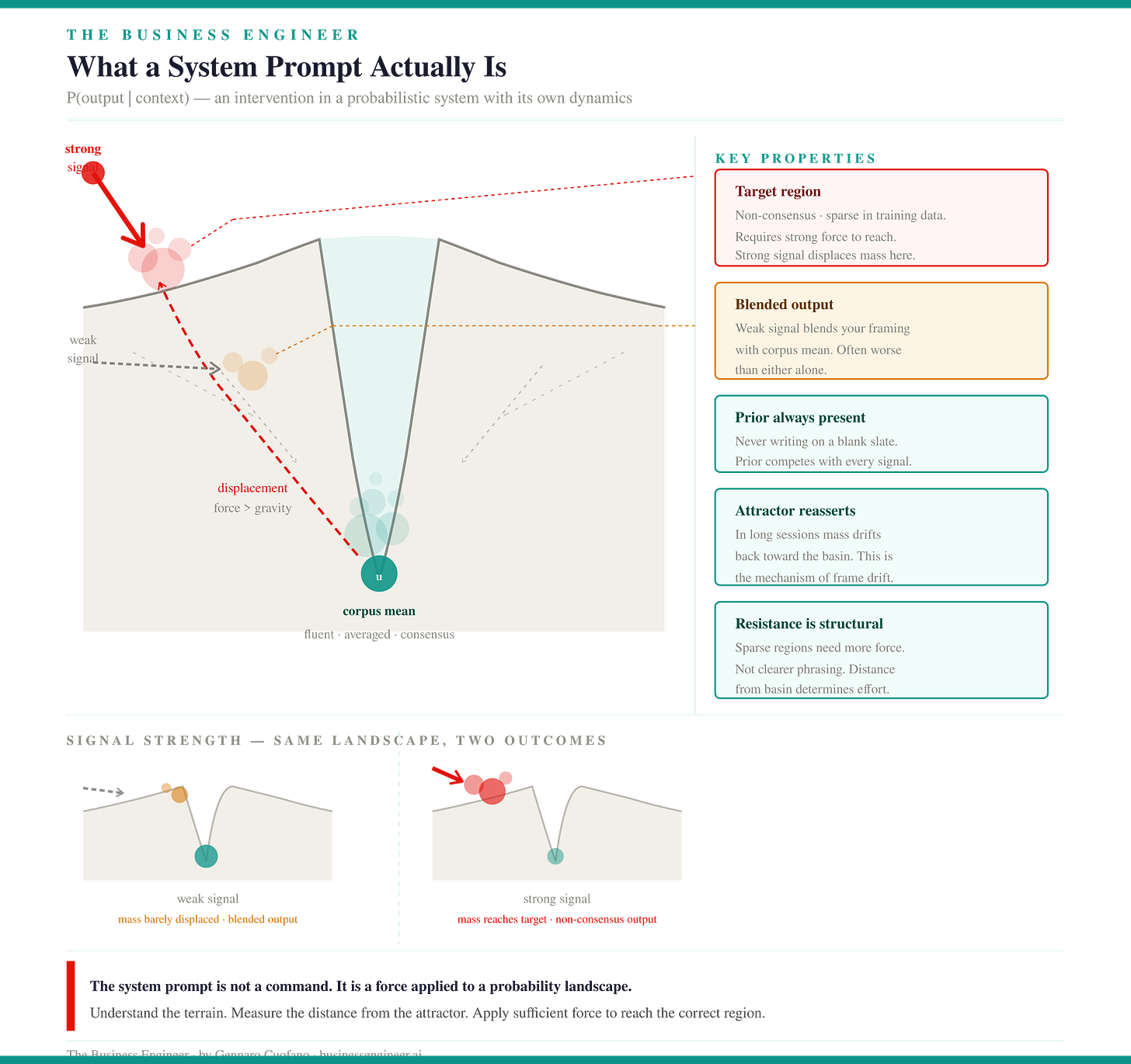

This distribution has dynamics. It has an attractor state — the place it returns to in the absence of strong conditioning. That attractor is the corpus mean: the averaged, consensus, most-frequently-represented version of whatever you are asking about. The attractor is not a failure state. It is what a well-functioning probability distribution does when the conditioning signal is weak. It returns to the center of the prior.

A system prompt is an intervention in this distribution. Not a command issued to an agent. Not a query submitted to a database. An intervention in a probabilistic system that has its own dynamics, its own attractor, and its own resistance to certain kinds of conditioning.

What this means structurally:

The prior is always present. You are never writing on a blank slate. Every system prompt competes with the model’s training prior for influence on the output distribution. The question is not whether the prior is active. It is whether your conditioning signal is strong enough to displace it toward the region you need.

Displacement is not replacement. The conditioning signal shifts probability mass. It does not erase the prior. Weak conditioning produces outputs that are a blend of your signal and the corpus mean — frequently worse than either alone, because it combines the surface features of your framing with the structural assumptions of the default.

The attractor reasserts under complexity. In long, complex briefs and multi-turn sessions, the conditioning signal weakens as context accumulates. The attractor reasserts itself not because the model forgot your instructions but because the probability mass shifts back toward its prior distribution as the distance from the conditioning signal increases. This is the mechanical explanation for frame drift.

Resistance is structural, not volitional. The model is not resisting your intent. The training distribution is structurally weighted toward the consensus, the well-documented, the most-frequently-expressed. Any conditioning signal that asks the model to sample from a low-density region of its prior — a non-consensus frame, a tail-first analysis, a paradigm-level insight — is working against the gradient of the distribution. This requires more force, not clearer phrasing.
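The displacement and drift described above can be made concrete with a toy calculation. This is a minimal sketch, not a description of how any real model works internally: it reduces the output to two regions (the attractor and the target), treats conditioning as an additive push in log-units, and assumes the push halves at a fixed distance. The exponential decay, the `half_life` parameter, and all numbers are illustrative assumptions.

```python
import math

def p_target(prior_gap: float, strength: float) -> float:
    """Two-outcome toy: probability of the target region versus the attractor.

    prior_gap is how many log-units the prior favors the attractor;
    strength is the log-unit push supplied by the conditioning signal.
    """
    return 1.0 / (1.0 + math.exp(prior_gap - strength))

def effective_strength(initial: float, distance: float, half_life: float) -> float:
    """ASSUMPTION: the signal halves every `half_life` tokens of distance."""
    return initial * 0.5 ** (distance / half_life)

def drift_profile(initial, prior_gap, half_life, distances):
    """Probability of staying in the target region at each distance."""
    return [
        (d, p_target(prior_gap, effective_strength(initial, d, half_life)))
        for d in distances
    ]

# Hypothetical numbers, chosen only to make the shape visible.
profile = drift_profile(initial=6.0, prior_gap=3.0, half_life=1000,
                        distances=[0, 500, 1000, 2000, 4000])
for distance, p in profile:
    print(f"{distance:>5} tokens from the signal: P(target) = {p:.2f}")
```

Two things show up in this toy. Even at distance zero the probability of the target region stays below 1, which is displacement rather than replacement. And once the effective strength falls below the prior gap, the attractor becomes the more likely region again, without any instruction having been "forgotten".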

Systems thinking — developed most rigorously by Jay Forrester and Donella Meadows — is the discipline of understanding how to intervene effectively in systems that behave this way: systems with their own dynamics, attractor states, feedback structures, and resistance to point interventions.

The questions it asks are precisely the questions a practitioner needs to answer to write effective system prompts.

What is the attractor? Where are the feedback loops? Where is the leverage? What are the second- and third-order effects when you intervene at a particular point?

The System’s Anatomy

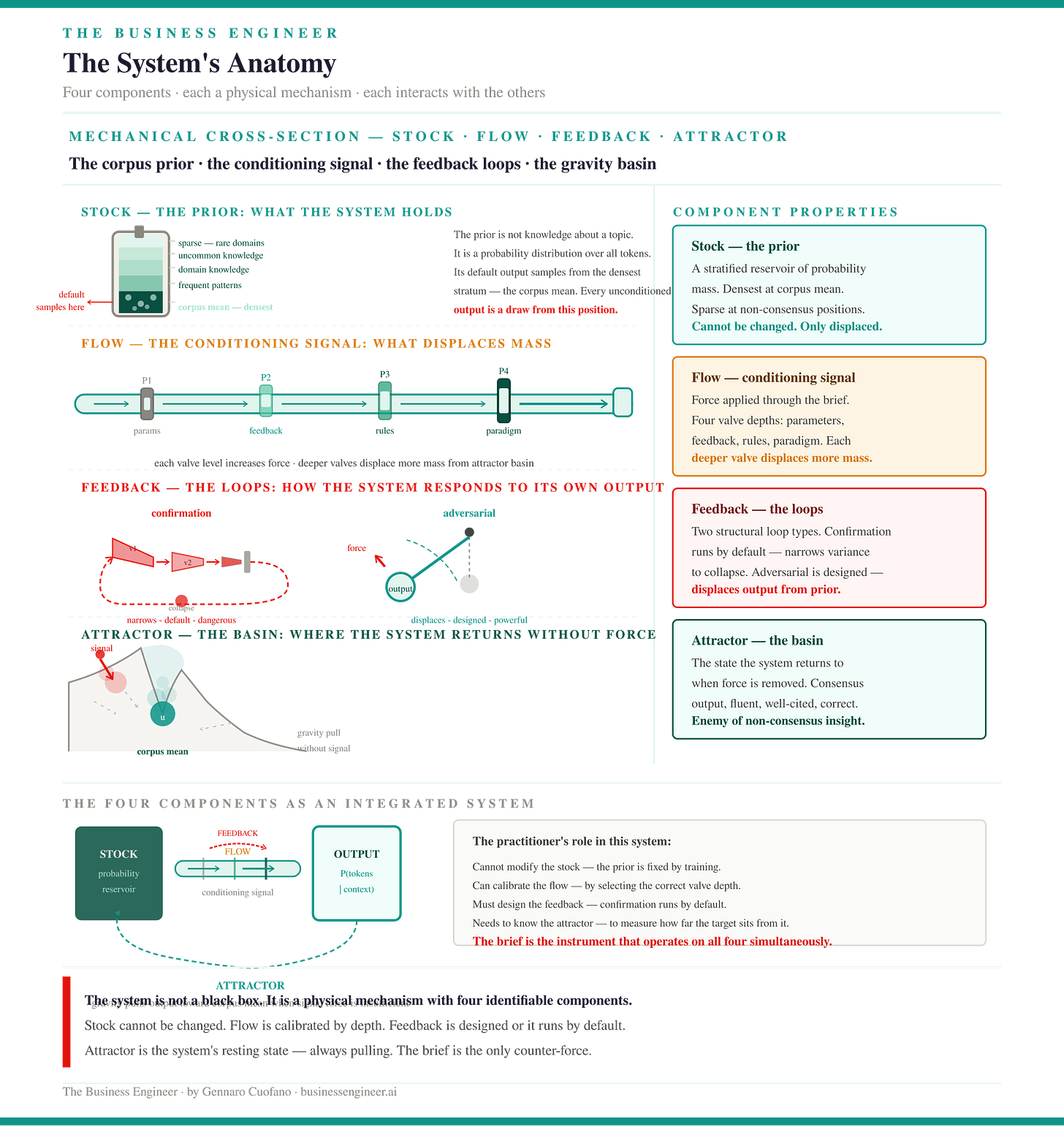

Before you can intervene effectively, you need a map of what you are intervening in. The prompting system has four structural elements: stocks, flows, feedback loops, and the attractor.

Each behaves differently and requires different kinds of intervention.


Stocks: The Prior

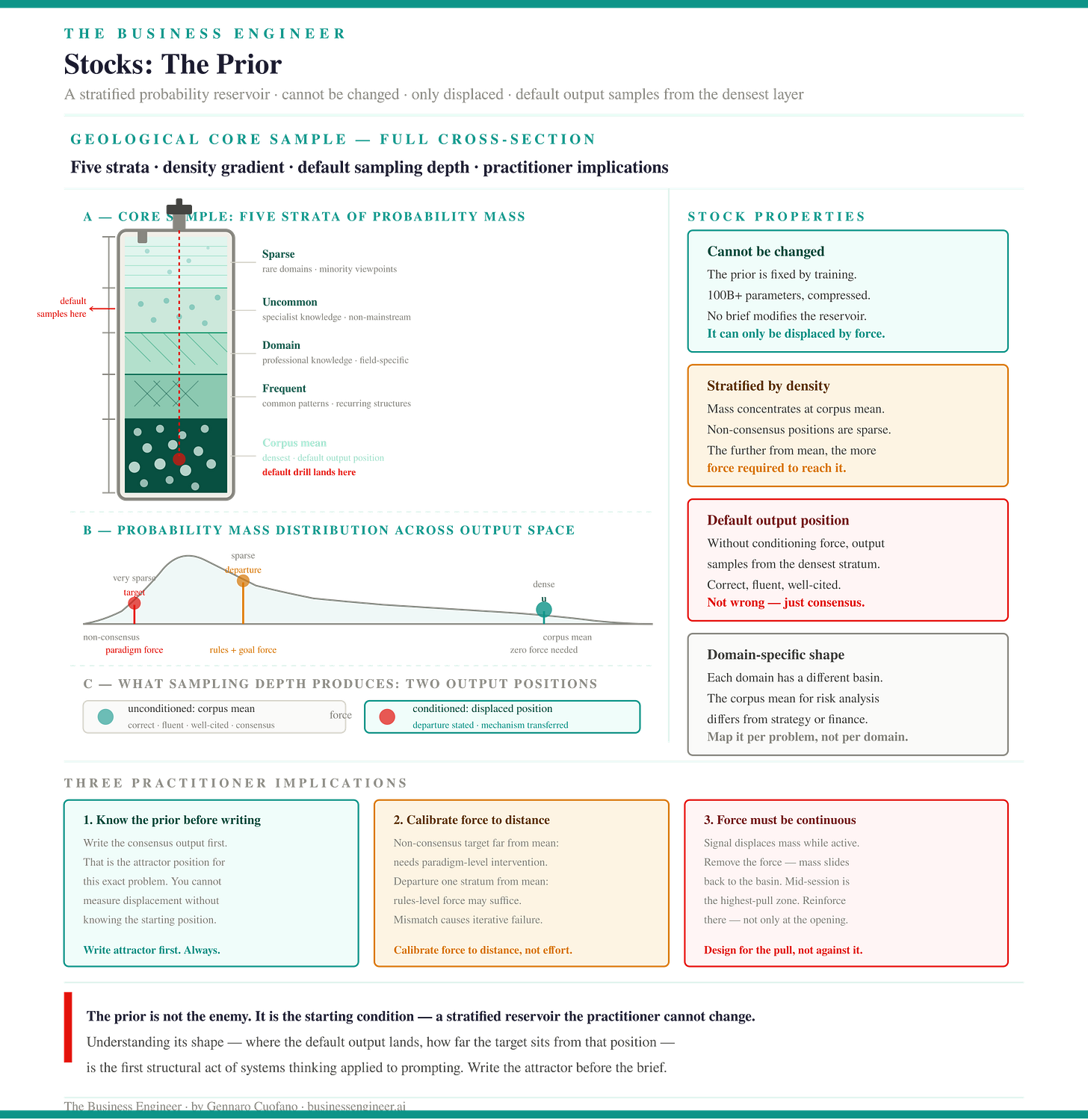

The stock is the model’s prior — the probability mass accumulated across training. It is the system’s memory. It is what exists before any conditioning signal is applied and what persists after the conditioning signal ends.

Key properties of the stock:

You cannot empty it. The prior cannot be zeroed out by instruction. A system prompt that tells the model to ignore its training does not override the prior; it adds a conditioning signal that competes with it. The prior remains.

You cannot rewrite it. The stock was shaped by the training process, not by you. You can shift sampling away from certain regions, but you cannot change its shape.

Its density is uneven. Some regions of the prior are dense: well-documented domains, consensus frameworks, and frequently expressed analytical patterns. Other regions are sparse: non-consensus frames, tail-first analyses, domain-crossing insights. Getting the model to sample from sparse regions requires stronger conditioning signals than sampling from dense regions. This is not a matter of clarity. It is a matter of probability mass.

Its center is the default output. Without conditioning, the model samples from the high-density center. This is the averaged, consensus, well-cited version of whatever you are asking about. It is always fluent. It is frequently structurally wrong for the specific problem at hand.
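The claim that sparse regions cost more to reach can be put in numbers under one simplifying assumption: that conditioning multiplies a region's prior mass by a factor and the result is renormalized. The region names and masses below are hypothetical, and the reweighting rule is an illustration, not a description of any model's internals.

```python
import math

def shift_needed(p_default: float, p_target: float) -> float:
    """Log-unit boost the target needs to become as likely as the default."""
    return math.log(p_default / p_target)

def after_shift(prior: dict, target: str, shift: float) -> dict:
    """Multiply the target's mass by exp(shift), then renormalize."""
    weights = {
        region: mass * (math.exp(shift) if region == target else 1.0)
        for region, mass in prior.items()
    }
    total = sum(weights.values())
    return {region: w / total for region, w in weights.items()}

# Hypothetical prior masses for three regions of one topic.
prior = {"consensus": 0.70, "documented_variant": 0.25, "tail_first": 0.05}

easy = shift_needed(prior["consensus"], prior["documented_variant"])
hard = shift_needed(prior["consensus"], prior["tail_first"])
shifted = after_shift(prior, "tail_first", hard)

print(f"boost to reach a dense neighbor:  {easy:.2f} log-units")
print(f"boost to reach the sparse region: {hard:.2f} log-units")
print({region: round(mass, 3) for region, mass in shifted.items()})
```

With these made-up masses, reaching the sparse region takes roughly two and a half times the boost needed for the dense neighbor, and the consensus region still holds as much mass as the target afterward. That is the sense in which the stock can be shifted but not emptied.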

Flows: The Conditioning Signal

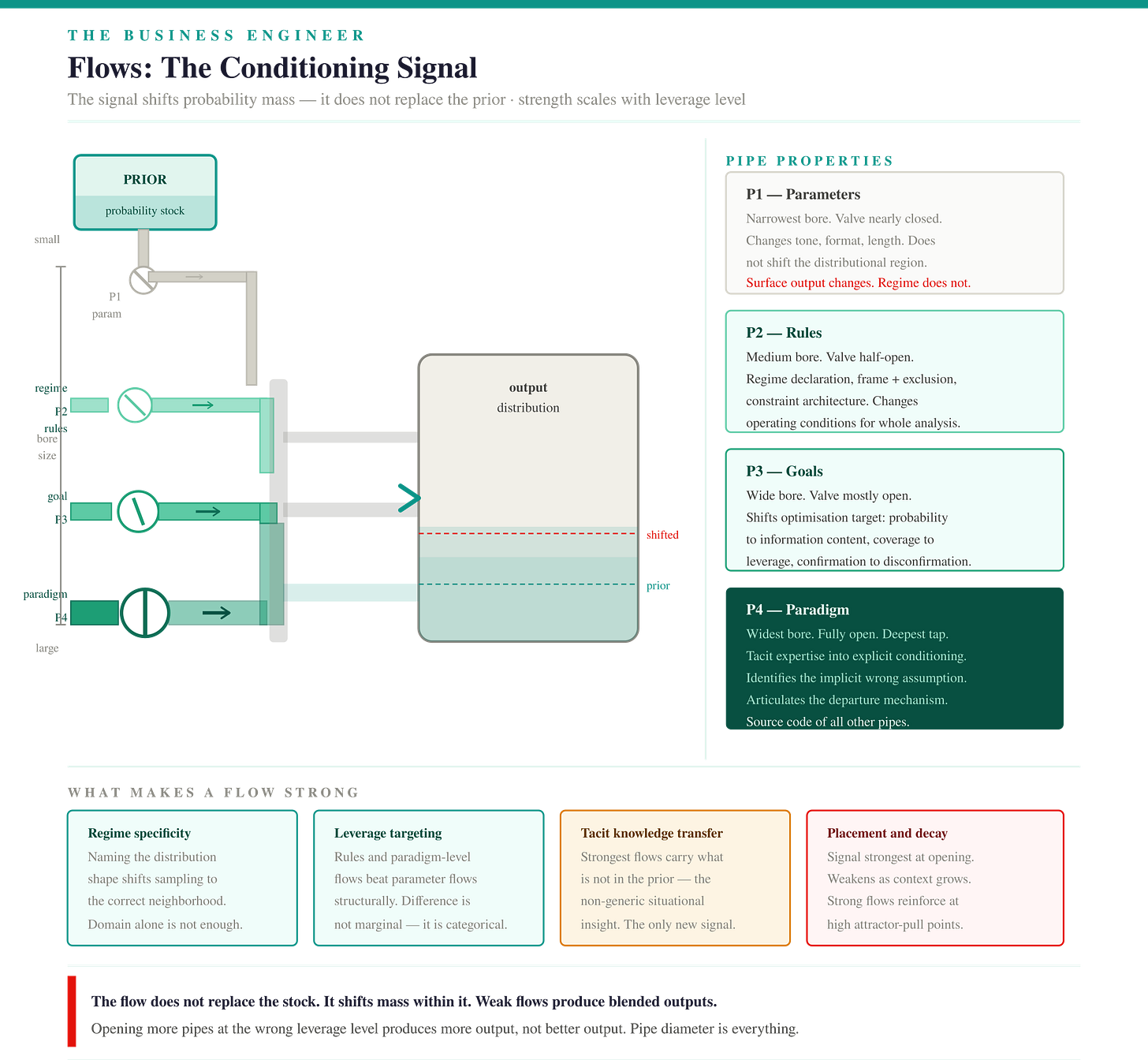

The flow is the conditioning signal — the system prompt, the context, the framing choices, the constraints, the examples. The flow does not replace the stock. It shifts probability mass. A strong flow shifts it significantly toward the region you need. A weak flow leaves the stock dominant and produces outputs from the attractor state.

What makes a flow strong or weak:

Regime specificity. A flow that identifies the specific distributional shape of the problem — fat-tailed, power-law, bimodal, multimodal — is stronger than a flow that identifies only the domain. Domain identification shifts the distribution from the full corpus prior to the domain-specific region. Regime identification shifts it from the domain region to the specific analytical neighborhood required by the task. These are different shifts of different magnitudes.

Leverage point targeting. A flow that intervenes at the rules or paradigm level of the system is structurally stronger than one that intervenes at the parameter level. Specifying tone, format, and length is a parameter adjustment. Specifying which distributional region to sample from, why the standard approach misses what matters, and what the analysis needs to contain to be genuinely useful is a rules-level and paradigm-level intervention. The difference in output effect is not marginal.

Tacit knowledge transfer. The strongest flows contain knowledge that is not in the model’s prior — the practitioner’s non-generic situational understanding, the structural insight that makes this problem different from the generic version. This is the only kind of conditioning that produces outputs the model could not have generated without the signal.

Placement and decay. The conditioning signal is strongest at the opening of the context and weakens as the distance from it increases. A flow that places critical conditioning elements only in the opening will produce drift in complex, multi-part analyses. Strong flows reinforce regime-specific and frame-specific conditioning at the structural points where attractor pull is greatest — typically in the middle sections of long analyses where the model has accumulated enough context to begin gravitating back to its prior.
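One way to put the four properties above into practice is to assemble the prompt from separate parts, so that regime, frame, and tacit knowledge are stated explicitly and the frame is repeated partway through a long brief. The sketch below is one possible arrangement, not a prescribed template: the `Flow` class, `build_prompt`, the `reinforce_every` parameter, and the example content are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Flow:
    domain: str
    regime: str                 # distributional shape of the problem
    why_default_fails: str      # paradigm-level framing
    tacit_knowledge: list[str]  # situational facts the prior lacks
    parameters: dict[str, str] = field(default_factory=dict)  # tone, format, length

    def frame(self) -> str:
        return (f"Regime: {self.regime}. "
                f"The default analysis fails because {self.why_default_fails}.")

def build_prompt(flow: Flow, sections: list[str], reinforce_every: int = 2) -> str:
    """Open with the full frame, then restate it between later sections."""
    lines = [f"Domain: {flow.domain}", flow.frame(), "Situational knowledge:"]
    lines += [f"- {item}" for item in flow.tacit_knowledge]
    lines += [f"{name}: {value}" for name, value in flow.parameters.items()]
    for i, section in enumerate(sections, start=1):
        lines.append(f"Section {i}: {section}")
        # Restate the frame mid-brief, where drift is assumed to be strongest.
        if i % reinforce_every == 0 and i < len(sections):
            lines.append(f"Reminder: {flow.frame()}")
    return "\n".join(lines)

# Hypothetical example content.
flow = Flow(
    domain="venture portfolio analysis",
    regime="fat-tailed",
    why_default_fails="it reasons from average outcomes",
    tacit_knowledge=["Two holdings account for most of the fund's value."],
    parameters={"Format": "memo", "Length": "under 800 words"},
)
prompt = build_prompt(flow, ["Exposure", "Concentration", "Scenarios",
                             "Liquidity", "Recommendation"])
print(prompt)
```

The parameter-level settings sit in a plain dictionary and appear once, while the regime and frame are carried by `frame()` and recur through the brief. Keeping them apart makes it visible when a prompt has only the former.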

The weekly newsletter is in the spirit of what it means to be a Business Engineer:

We always want to ask three core questions:

What’s the shape of the underlying technology that connects the value prop to its product?

What’s the shape of the underlying business that connects the value prop to its distribution?

How does the business survive in the short term while adhering to its long-term vision through transitional business modeling and market dynamics?

These non-linear analyses aim to isolate the short-term buzz and noise, identify the signal, and ensure that the short-term and the long-term can be reconciled.