The AI Orchestrator's Leverage Points

In 1999, Donella Meadows published a short paper that would become one of the most cited texts in systems thinking: *Leverage Points: Places to Intervene in a System*.

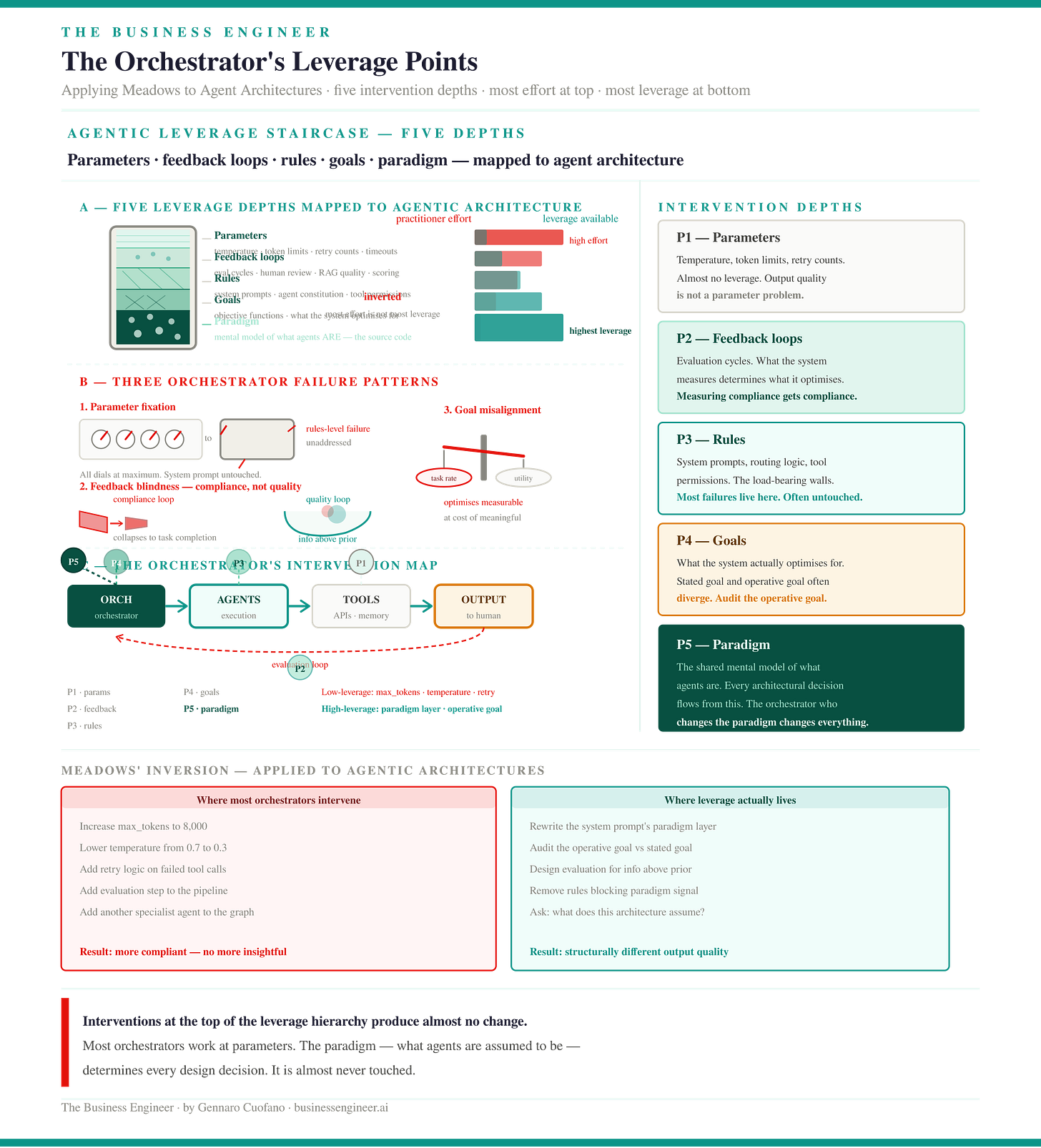

The central argument was deceptively simple. Every system has places where a small change produces large effects. The problem, a structural one that makes the insight difficult to use, is that these high-leverage points are almost never where practitioners look.

People focus on numbers: budgets, headcounts, parameters. Numbers are visible and adjustable. Adjusting them feels like an intervention. But adjusting numbers almost never changes a system’s behavior in any fundamental way.

The real leverage lives in the rules, goals, and paradigms that determine why the system produces what it produces. By the time you are adjusting parameters, you are already at the least-leveraged end of the hierarchy.

Agentic architectures are systems in the Meadows sense. They have stocks: the probability mass in model priors, accumulated context in memory, knowledge embedded in retrieval systems.

They have flows: API calls, tool invocations, prompts moving through pipeline stages. They have feedback loops: human review cycles, automated evaluation, and scoring systems that determine which outputs get reinforced.

They have goals: objective functions, system prompts that declare optimization targets, and reward signals that shape behavior over time. And they have a paradigm: the shared mental model of what agents fundamentally are.
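To make the mapping concrete, here is a minimal Python sketch that tags candidate changes to an agent pipeline with a coarse leverage tier and sorts them. The tiers are a simplification of Meadows's ordering (parameters lowest, paradigm highest). The names `Leverage`, `Intervention`, and `rank_by_leverage`, and the example interventions, are hypothetical illustrations rather than part of any framework.

```python
from dataclasses import dataclass
from enum import IntEnum


class Leverage(IntEnum):
    """Coarse leverage tiers, lowest first (a simplification of Meadows's list)."""
    PARAMETERS = 1      # numbers: temperatures, token budgets, retry counts
    STOCKS_FLOWS = 2    # memory, retrieval stores, calls moving through stages
    FEEDBACK_LOOPS = 3  # human review, automated evaluation, scoring
    RULES = 4           # constraints on what agents may do (assumed example)
    GOALS = 5           # objective functions, system-prompt targets, rewards
    PARADIGM = 6        # the shared mental model of what agents are


@dataclass(frozen=True)
class Intervention:
    name: str
    tier: Leverage


def rank_by_leverage(interventions):
    """Return interventions ordered from highest to lowest leverage tier."""
    return sorted(interventions, key=lambda i: i.tier, reverse=True)


# Hypothetical candidate changes to an agent pipeline.
candidates = [
    Intervention("lower the sampling temperature", Leverage.PARAMETERS),
    Intervention("add an automated evaluation gate", Leverage.FEEDBACK_LOOPS),
    Intervention("rewrite the system prompt's optimization target", Leverage.GOALS),
    Intervention("expand the retrieval index", Leverage.STOCKS_FLOWS),
]

ranked = rank_by_leverage(candidates)
for item in ranked:
    print(f"{item.tier.name:<15} {item.name}")
```

The sort only encodes the ordering of tiers. Deciding which tier a given change belongs to is the judgment call, and that is where the orchestrator's attention goes.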

The orchestrator’s job is exactly what Meadows described. Find where in the system leverage actually lives. Intervene there — not at the most visible point.

Most orchestrators are not doing this.